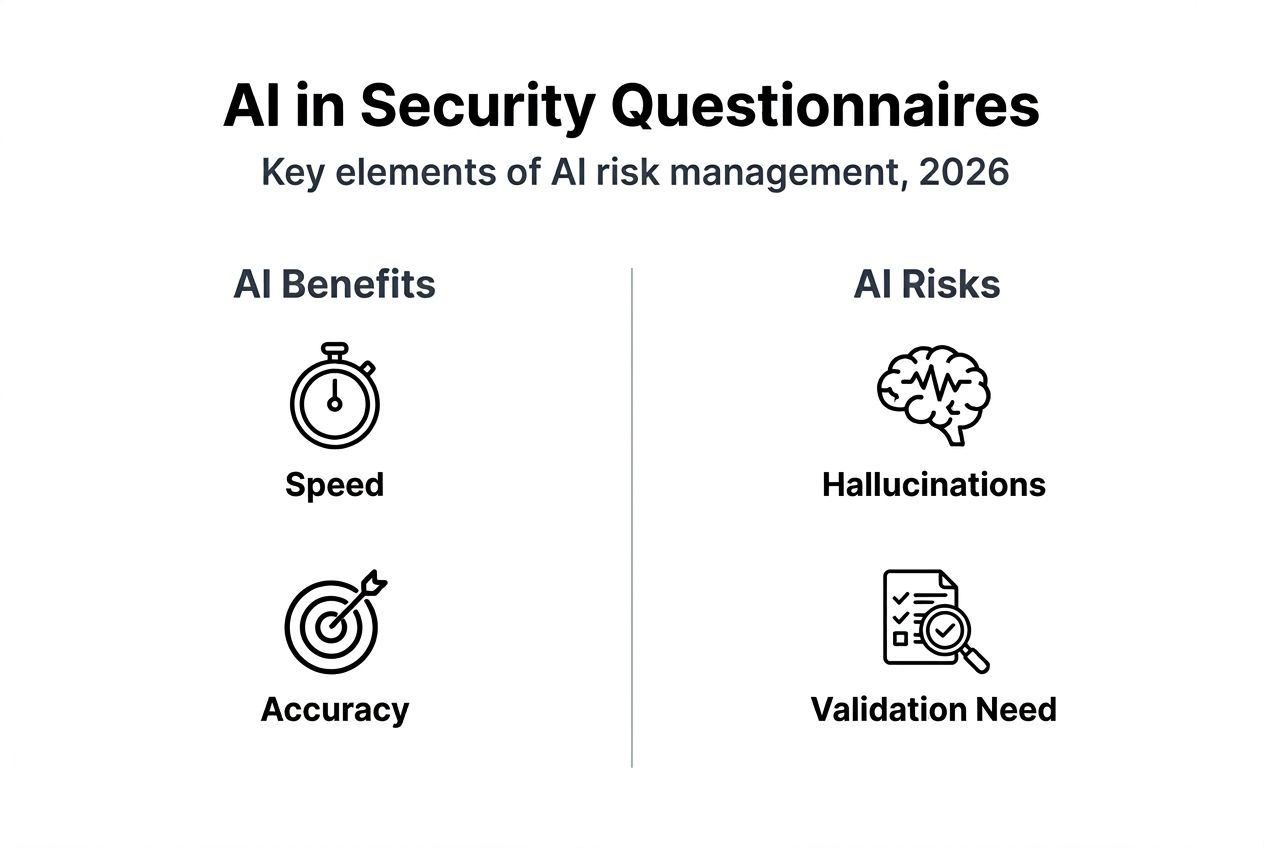

Many risk professionals believe AI can completely automate security questionnaires, but this misconception overlooks a critical reality: human expertise remains essential. While AI dramatically accelerates questionnaire processing and improves compliance rates, it cannot replace the judgment and validation that experienced analysts provide. This article explores how AI enhances risk management in security questionnaires without replacing human oversight, examining proven frameworks, real enterprise case studies, critical risks like hallucinations and prompt injection, and actionable best practices for combining AI efficiency with expert validation.

Table of Contents

- Understanding AI's Integration In Risk Management And Security Questionnaires

- Common Risks And Challenges Of AI In Risk Management

- Enterprise AI Orchestration: Lessons From JPMorgan And Industry Trends

- Best Practices For Implementing AI In Security Questionnaire Risk Management

- Enhance Your Security Questionnaire Process With Skypher's AI Tools

Key takeaways

| Point | Details |

|---|---|

| AI boosts efficiency but requires oversight | AI reduces questionnaire processing time by up to 70% while improving compliance by 15-20%, yet human validation remains critical for accuracy. |

| FS AI RMF provides governance framework | The Financial Services AI Risk Management Framework introduces 230 control objectives spanning governance, data quality, model validation, and consumer protection. |

| Prompt injection and hallucinations pose risks | AI systems face prompt injection vulnerabilities and generate fabricated outputs that require dedicated mitigation strategies. |

| Enterprise leaders demonstrate scalable adoption | JPMorgan's $2 billion AI investment has already paid for itself through cost reduction and process optimization using orchestration platforms. |

| Hybrid approach delivers optimal outcomes | Combining AI automation with expert validation ensures compliance, reduces errors, and maximizes efficiency in security questionnaire workflows. |

Understanding AI's integration in risk management and security questionnaires

AI has transformed how organizations handle security questionnaires, particularly in tech and finance sectors where compliance demands continue growing. The Financial Services AI Risk Management Framework introduces 230 control objectives across governance, data management, model development, validation, monitoring, third-party risk, and consumer protection, providing institutions with an operational architecture standard for AI governance.

Research shows that AI automation reduces questionnaire completion time by 70% while improving compliance rates by 15-20%. These gains emerge from AI's ability to parse documents rapidly, extract relevant information, and generate draft responses based on organizational knowledge bases. Yet these tools augment rather than replace human decision making.

The benefits of integrating cyber security artificial intelligence into risk management workflows include:

- Faster document review and information extraction from policy libraries

- Improved compliance through consistent application of regulatory requirements

- Reduced human error in repetitive questionnaire sections

- Enhanced accuracy through pattern recognition across historical responses

- Scalable processing capacity handling multiple concurrent questionnaires

The FS AI RMF serves as more than guidance. It functions as an operational standard helping institutions build governance structures before regulatory mandates arrive. Organizations that adopt these frameworks proactively position themselves to meet emerging compliance requirements while capturing efficiency gains that justify AI investments.

Pro Tip: Start with AI automation for standardized questionnaire sections like infrastructure descriptions and compliance certifications, then reserve human review for complex risk assessments requiring contextual judgment.

Common risks and challenges of AI in risk management

AI systems introduce specific vulnerabilities that risk professionals must understand and mitigate. Prompt injection attacks enable malicious actors to manipulate AI agents into executing unintended actions like data exfiltration or creating self-replicating AI worms. These attacks exploit the way language models process instructions, making traditional security controls insufficient.

Hallucinations represent another critical risk, where AI systems generate factually incorrect or completely fabricated statements with high confidence. In security questionnaires, hallucinations could lead organizations to claim compliance with standards they don't actually meet or misrepresent their security controls. The probabilistic nature of large language models means they optimize for plausible-sounding responses rather than verified accuracy.

Bias detection and mitigation present ongoing challenges. AI tools trained on historical questionnaire responses may perpetuate outdated practices or reflect organizational blind spots. Toolkits like IBM's AI Fairness 360 help identify bias patterns, but they require active implementation and monitoring to be effective.

Key AI-specific risks include:

- Prompt manipulation bypassing security controls and data governance policies

- Fabricated compliance claims that create legal and regulatory exposure

- Inherited biases from training data leading to inconsistent risk assessments

- Over-reliance on AI outputs without proper validation processes

- Data privacy breaches through unintended information disclosure

These risks require human validation at critical decision points. Automated compliance security reviews work best when AI handles initial processing while experts verify outputs before submission. The goal is leveraging AI's speed and pattern recognition while maintaining human judgment as the final authority.

Pro Tip: Implement a validation checklist specifically for AI-generated questionnaire responses, focusing on factual accuracy of compliance claims, consistency with documented policies, and appropriateness of risk characterizations.

Enterprise AI orchestration: Lessons from JPMorgan and industry trends

JPMorgan Chase demonstrates how large organizations implement AI at scale while managing associated risks. The bank's $2 billion AI investment has already paid for itself through cost reduction and process optimization. Central to this success is OmniAI, a proprietary orchestration platform that creates, manages, and safely deploys hundreds of AI tools with model agnosticism and embedded compliance rules.

OmniAI's architecture addresses common enterprise AI challenges. Model agnosticism prevents vendor lock-in by enabling seamless switching between AI providers as capabilities and costs evolve. Embedded compliance rules operate at the platform level, ensuring every AI application adheres to regulatory requirements without relying on manual oversight. This governance-by-code approach scales more reliably than checklist-based processes.

Best practices for building enterprise AI platforms:

- Develop proprietary orchestration layers rather than depending entirely on vendor platforms

- Embed compliance and governance rules into system architecture from day one

- Enable model swapping to maintain flexibility as AI capabilities advance

- Implement governance through automated code enforcement rather than manual processes

- Establish clear data lineage and audit trails for regulatory examinations

| Criteria | Traditional Risk Management | AI-Augmented Orchestration |

|---|---|---|

| Processing Speed | Days to weeks per questionnaire | Hours to complete 200+ questions |

| Compliance Consistency | Varies by analyst expertise | Standardized rule application |

| Scalability | Linear with headcount | Exponential with platform capacity |

| Vendor Flexibility | High switching costs | Model-agnostic architecture |

| Audit Trail | Manual documentation | Automated logging and lineage |

The security questionnaire challenges AI addresses stem from complexity rather than technology limitations. Organizations that succeed build platforms treating AI as infrastructure requiring the same governance, monitoring, and controls as other critical systems.

Pro Tip: Code your organization's risk tolerance and compliance requirements directly into your AI orchestration platform so every automated output inherently reflects your governance standards without manual verification.

Best practices for implementing AI in security questionnaire risk management

Despite growing adoption, significant gaps persist in how organizations validate and govern AI systems. While 68% of financial firms prioritize AI in risk and compliance, 38% lack proper validation of AI output quality. Perhaps more concerning, more than 64% have not responded to SEC AI-related examination sweeps, revealing a disconnect between adoption enthusiasm and regulatory preparedness.

Essential best practices for AI implementation:

- Validate every AI-generated response against source documentation before submission

- Maintain human-in-the-loop review for responses involving risk assessments or compliance claims

- Conduct thorough vendor due diligence focusing on data handling, model training, and audit capabilities

- Monitor AI system updates and retrain models when organizational policies or controls change

- Ensure adherence to emerging regulatory guidance on AI governance and transparency

| Approach | Firms with Mature AI Risk Frameworks | Firms Lacking Formal Frameworks |

|---|---|---|

| Validation Process | Systematic output verification against policies | Ad hoc or missing validation |

| Compliance Impact | Consistent regulatory alignment | Exposure to examination findings |

| Operational Efficiency | 60-70% time savings with controlled risk | Limited gains due to manual rework |

| Regulatory Readiness | Documented governance and audit trails | Unprepared for examinations |

| Risk Exposure | Managed through systematic controls | Elevated due to undetected errors |

The information security tool benefits you realize depend on implementation quality. Organizations that automate security questionnaires faster while maintaining accuracy invest in validation infrastructure from the start. This includes establishing trusted data sources, implementing regular testing protocols, and training teams to identify AI-specific failure modes.

Pro Tip: Create a dedicated testing environment where you can validate AI responses against known correct answers before deploying the system for live questionnaires, building confidence in output quality over time.

Enhance your security questionnaire process with Skypher's AI tools

Implementing the best practices discussed requires platforms purpose-built for security questionnaire automation. Skypher's security questionnaires automation platform combines AI efficiency with the validation controls risk professionals need. Our proprietary AI models deliver up to 70% time savings while maintaining accuracy through intelligent recommendations tailored to your organization's specific security posture and compliance requirements.

Skypher's AI powered recommendation engine learns from your historical responses and policy documentation, generating contextually appropriate draft answers that your team can review and approve. The platform supports complex enterprise setups with multiple products and entities, integrates with over 30 third-party risk management portals, and provides the audit trails necessary for regulatory examinations. By combining AI automation with expert validation workflows, you optimize both speed and accuracy in your security questionnaire processes.

FAQ

What challenges does AI face in fully automating security questionnaires?

AI tools streamline security questionnaire workflows but cannot fully automate security questionnaires faster without human oversight due to risks like hallucinations, bias, and regulatory complexity. Probabilistic AI outputs require validation to ensure factual accuracy and compliance alignment. Human expertise remains essential for contextual judgment on complex risk assessments.

How can organizations mitigate AI risks in risk management workflows?

Organizations should implement systematic validation processes, select vendors with strong governance frameworks, continuously monitor AI outputs for accuracy, and maintain human experts in review loops. Adopting structured frameworks like the FS AI RMF provides governance architecture. Leveraging information security tool benefits requires balancing automation with control.

What are key considerations when selecting AI tools for security questionnaires?

Evaluate each vendor's data quality, compliance framework integration, and customization capabilities to match your organizational needs. Ensure the platform supports human review workflows and integrates seamlessly with your existing systems. Look for security questionnaire AI tools offering audit trails, version control, and model transparency to meet regulatory requirements while delivering efficiency gains.