TL;DR:

- AI-driven security questionnaires significantly reduce response time and manual effort.

- These platforms improve response accuracy and provide detailed audit trails for compliance.

- A hybrid approach with AI and human review optimizes efficiency and risk management.

Security teams at mid-to-large tech and finance firms know the pain: a single vendor questionnaire can consume 12 hours of SME time, and deal pipelines stall while compliance staff chase approvals. AI-driven tools cut this by 70-80%, turning a multi-day ordeal into a 2-to-3-hour workflow. But the market is noisy, and separating genuine capability from vendor hype takes real scrutiny. This guide breaks down exactly how AI-driven security questionnaires work, what measurable outcomes you can expect, how to pick and implement the right solution, and how to stay in control once automation is live.

Table of Contents

- What are AI-driven security questionnaires?

- Key benefits: Time, accuracy, and auditability

- Choosing and implementing the right solution

- Risks, realities, and how to stay in control

- The real-world challenge: Why balanced AI and human collaboration wins

- See AI-driven questionnaires in action

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Drastic time savings | AI-driven questionnaires typically cut completion time by over 70% with high accuracy and transparency. |
| Not all tools are equal | For regulated industries, choose vendors that offer auditability and workflow control, not just speed. |
| Collaboration is critical | Balancing automation with SME oversight prevents errors and safeguards your compliance process. |
| Manage risks proactively | Consistent validation, approval workflows, and transparency protect against AI mistakes and build trust. |

What are AI-driven security questionnaires?

A security questionnaire is a structured set of questions a prospective client, partner, or auditor sends your organization to assess your security posture. Traditionally, your team answered each question manually, pulling from scattered policy documents, prior responses, and tribal knowledge. The process was slow, inconsistent, and nearly impossible to scale when deal volume increased.

AI-driven security questionnaires change that model fundamentally. Instead of a static template library, these platforms use agentic AI, a type of AI that can reason, retrieve, and act across multiple steps without constant human prompting. The system reads an incoming questionnaire, searches a live knowledge base built from your approved policies and past responses, generates best-fit answers, and routes them for review. The whole cycle happens in minutes, not days.
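To make that cycle concrete, here is a minimal sketch of the retrieve-and-draft step. It is a toy stand-in, not how any particular platform works: real systems use language models and semantic retrieval, while this example uses simple word overlap. The names `KnowledgeEntry`, `Draft`, and `draft_answer`, and the policy document names, are hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class KnowledgeEntry:
    question: str  # a previously answered question
    answer: str    # the approved answer text
    source: str    # hypothetical policy document the answer came from


@dataclass
class Draft:
    question: str
    answer: Optional[str]
    source: Optional[str]
    confidence: float  # word-overlap score between 0.0 and 1.0


def tokens(text: str) -> set:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def similarity(a: str, b: str) -> float:
    """Jaccard overlap of the two word sets (a toy proxy for semantic match)."""
    ta, tb = tokens(a), tokens(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def draft_answer(question: str, kb: list) -> Draft:
    """Pick the closest approved answer and keep a pointer to its source."""
    best = max(kb, key=lambda e: similarity(question, e.question), default=None)
    if best is None:
        return Draft(question, None, None, 0.0)
    return Draft(question, best.answer, best.source,
                 similarity(question, best.question))


# Hypothetical knowledge base entries, for illustration only.
kb = [
    KnowledgeEntry("Is customer data encrypted at rest?",
                   "Yes, customer data is encrypted at rest.",
                   "Encryption Policy"),
    KnowledgeEntry("Do you have an incident response plan?",
                   "Yes, the plan is reviewed annually.",
                   "Incident Response Plan"),
]
draft = draft_answer("Do you encrypt data at rest?", kb)
```

The draft carries both a source and a score, which is what lets a later review step decide how much human attention each answer needs.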

Here is what distinguishes a genuinely AI-driven platform from a glorified copy-paste tool:

- Auto-response generation: The AI drafts answers in context, not just keyword matches.

- Knowledge base management: The system continuously updates its source material as your policies evolve.

- Portal integration: Direct connectors to third-party risk management (TPRM) portals like OneTrust and ServiceNow mean no manual re-entry.

- Approval workflows: Every AI-generated response routes through a configurable review chain before it leaves your organization.

- Audit trail: Every answer is traceable to its source document, giving compliance teams the evidence they need.
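As a rough illustration of what a source-linked audit trail could store, the sketch below records each answer alongside its source excerpt and a fingerprint of that excerpt. The `AuditRecord` structure, its field names, and the example values are assumptions made for this example, not a description of any vendor's schema.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    question: str
    answer: str
    source_document: str  # hypothetical document name
    source_excerpt: str   # the passage that justified the answer
    reviewer: str         # named person who signed off
    recorded_at: str      # UTC timestamp in ISO 8601 format
    excerpt_sha256: str   # fingerprint of the excerpt at answer time


def fingerprint(text: str) -> str:
    """SHA-256 hex digest of the text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def make_record(question: str, answer: str, source_document: str,
                source_excerpt: str, reviewer: str) -> AuditRecord:
    """Build an immutable record tying an answer to its evidence."""
    return AuditRecord(
        question=question,
        answer=answer,
        source_document=source_document,
        source_excerpt=source_excerpt,
        reviewer=reviewer,
        recorded_at=datetime.now(timezone.utc).isoformat(),
        excerpt_sha256=fingerprint(source_excerpt),
    )


def excerpt_unchanged(record: AuditRecord, current_excerpt: str) -> bool:
    """True if the source passage still matches what the answer relied on."""
    return fingerprint(current_excerpt) == record.excerpt_sha256


# Hypothetical values, for illustration only.
record = make_record(
    "Is customer data encrypted at rest?",
    "Yes, customer data is encrypted at rest.",
    "Encryption Policy",
    "All customer data must be encrypted at rest.",
    "Named Reviewer",
)
```

Storing a fingerprint of the excerpt is one way to notice later that a policy has changed since the answer was approved, which is the signal to re-review it.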

The accuracy benchmarks are no longer theoretical. Post-2023 AI platforms deliver 90-96% accuracy on standard security questionnaire formats, a level that rivals experienced human reviewers on routine questions. For teams managing dozens of concurrent deals, that accuracy at scale is what makes the technology genuinely transformative.

If you want a deeper look at what separates capable platforms from basic tools, the AI-driven essentials breakdown is worth reviewing before you evaluate vendors.


Key benefits: Time, accuracy, and auditability

The case for AI-driven questionnaires is not just about convenience. It is about measurable operational impact that shows up in your compliance metrics, your sales velocity, and your liability exposure.

Start with time. Mid-size firms save 150+ hours per month across 15 deals by switching to AI automation. That is not a rounding error. It represents multiple full-time equivalent weeks of SME capacity freed up for higher-value work. The automation time savings compound quickly when you factor in deal acceleration and reduced back-and-forth with prospects.

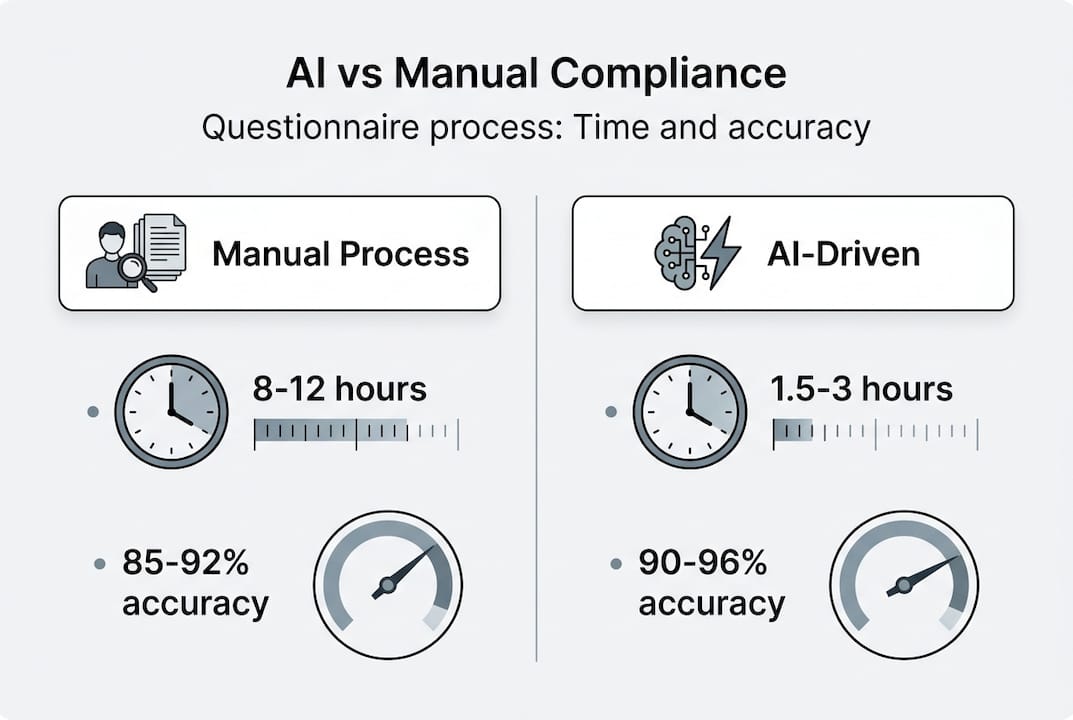

Here is how the numbers compare in practice:

| Metric | Manual process | AI-driven process |
|---|---|---|
| Time per questionnaire | 8-12 hours | 1.5-3 hours |
| Error or inconsistency rate | 15-25% | 4-10% |
| Audit trail granularity | Low (email threads) | High (source-linked) |
| SME hours per month (15 deals) | 150+ hours | Under 30 hours |
| Scalability | Limited by headcount | Scales with volume |
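If you want to run the same arithmetic on your own volumes, a small sketch like the one below is enough. The per-questionnaire hour ranges come from the table above; the function name `summarize` and the assumption of exactly one questionnaire per deal are ours.

```python
def summarize(questionnaires: int, manual_hours: float, ai_hours: float) -> dict:
    """Monthly totals, hours saved, and fractional reduction for one scenario."""
    manual_total = questionnaires * manual_hours
    ai_total = questionnaires * ai_hours
    return {
        "manual_total": manual_total,
        "ai_total": ai_total,
        "hours_saved": manual_total - ai_total,
        "reduction": 1 - ai_hours / manual_hours,
    }


# Assumption: 15 deals means 15 questionnaires per month (one per deal).
QUESTIONNAIRES_PER_MONTH = 15

# Low end of both ranges in the table: 8 manual hours vs. 1.5 AI-assisted hours.
low = summarize(QUESTIONNAIRES_PER_MONTH, manual_hours=8.0, ai_hours=1.5)

# High end of both ranges: 12 manual hours vs. 3 AI-assisted hours.
high = summarize(QUESTIONNAIRES_PER_MONTH, manual_hours=12.0, ai_hours=3.0)
```

Under the one-questionnaire-per-deal assumption, this gives 97.5 to 135 hours saved per month and a reduction of 75% to about 81%. Deals that involve more than one questionnaire push the monthly totals higher, so substitute your own counts before quoting a number to leadership.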

The gains are not just about speed. Inconsistent answers across questionnaires create compliance risk. When your AI platform pulls from a single, version-controlled knowledge base, every answer reflects your current approved posture. That consistency is what auditors and enterprise clients increasingly expect.

Auditability deserves special attention. In regulated industries, you need to prove not just what you answered, but why. Modern AI platforms generate reasoning traces, showing which source document justified each response. This matters enormously for incident response automation scenarios and for demonstrating due diligence to regulators.

"Agentic AI enables not just speed, but compliance-grade transparency."

The AI advantages extend beyond your compliance team. Sales engineers spend less time on security reviews, deals close faster, and prospects receive more consistent, credible answers.

Pro Tip: Always run spot checks on AI-generated responses, especially for edge-case questions involving new product lines or recent policy changes. A 5-minute review of flagged low-confidence answers prevents the vast majority of errors from reaching clients.

Choosing and implementing the right solution

Knowing the benefits is one thing. Picking the right platform and getting it live without disrupting active deals is another challenge entirely.

Start with your non-negotiable criteria. Any platform worth evaluating must clear these bars:

- Accuracy at or above 90% on your actual questionnaire formats, not just vendor benchmarks.

- GRC integrations with the platforms you already use, such as Vanta, Drata, and OneTrust.

- Auto-maintenance of the knowledge base so your answers stay current without manual curation sprints.

- Full auditability with source citations and reasoning traces for every generated response.

- Configurable approval workflows that match your internal sign-off structure.

Prioritize vendors with strong transparency, reasoning traceability, and workflow flexibility, especially if you operate in regulated sectors like financial services or healthcare.

Here is a practical implementation sequence:

1. Audit your knowledge base. Identify your most-used policy documents, past questionnaire responses, and compliance certifications. Clean and consolidate before you ingest anything into a new platform.

2. Map your integrations. List every TPRM portal and internal tool your team touches. Confirm the vendor supports them natively or via API.

3. Configure approval workflows. Define who reviews what, at which confidence threshold, and how escalations work. Do this before go-live, not after.

4. Run a pilot on low-stakes deals. Use the first two to four weeks on questionnaires where a delayed response will not cost you a deal.

5. Measure and calibrate. Track accuracy, turnaround time, and SME review hours weekly. Adjust confidence thresholds based on real data.

6. Scale to full volume. Once your team trusts the outputs and your workflows are stable, open the platform to all active deals.

A recent robustness study on AI systems in regulated environments reinforces that phased rollouts with clear validation checkpoints consistently outperform big-bang deployments.

Pro Tip: Start with a pilot on non-critical deals. It builds team confidence, surfaces integration gaps early, and gives you real accuracy data to share with leadership before you commit to full deployment.

For more tactical guidance, the resources on streamlining responses and on compliance transformation offer step-by-step frameworks that complement the evaluation process.

Risks, realities, and how to stay in control

No technology eliminates risk. AI-driven questionnaire platforms introduce specific failure modes that your team needs to understand and actively manage.

The most cited concern is hallucination: the AI generates a plausible-sounding answer that is factually incorrect or unsupported by your actual policies. Key risks include hallucinated responses, edge-case misfires, and citation gaps, all of which are mitigated by SME validation and robust approval workflows. The risk is real, but it is manageable with the right controls.

Here are the practices that actually work:

- Require source citations for every AI-generated answer. If the platform cannot show you which document it pulled from, that answer should not leave your organization.

- Set confidence thresholds. Responses below a defined confidence score route automatically to SME review rather than auto-submitting.

- Assign named reviewers. Diffuse accountability means no accountability. Every questionnaire should have a named owner who is responsible for final sign-off.

- Audit your knowledge base quarterly. Stale source documents are the root cause of most accuracy failures. Keep your inputs clean.

- Track error patterns. When the AI gets something wrong, log it and update the knowledge base. Over time, this feedback loop dramatically improves performance.
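The first two practices combine naturally into a single routing rule. The sketch below is one possible shape for it, assuming each draft arrives with a confidence score and an optional source citation; the `DraftAnswer` fields, the queue names, and the 0.85 default threshold are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DraftAnswer:
    question: str
    answer: str
    confidence: float               # score reported by the platform, 0.0 to 1.0
    source_document: Optional[str]  # citation, or None if the platform gave none


def route(draft: DraftAnswer, threshold: float = 0.85) -> str:
    """Decide which review queue a drafted answer goes to."""
    # No citation: the answer must not leave the organization as-is.
    if not draft.source_document:
        return "blocked_no_citation"
    # Low confidence: send to a subject-matter expert for a full review.
    if draft.confidence < threshold:
        return "sme_review"
    # Cited and confident: still goes to the named owner for final sign-off.
    return "owner_sign_off"


# Hypothetical drafts, for illustration only.
cited_confident = DraftAnswer("Is data encrypted at rest?", "Yes.", 0.93,
                              "Encryption Policy")
cited_uncertain = DraftAnswer("Do you support a new product line?", "Yes.",
                              0.55, "Product Overview")
uncited = DraftAnswer("Do you pen test annually?", "Yes.", 0.97, None)
```

Note that no branch submits an answer automatically. Even the high-confidence path ends with a named owner, which keeps accountability in one place.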

Finance sector teams face additional pressure here. Regulators and enterprise clients in financial services apply more scrutiny to AI-generated compliance outputs than their tech sector counterparts. That means your approval workflow needs more rigor, not less, and your audit trail needs to be airtight.

"The road to confident AI adoption is paved with transparent processes and cross-team validation."

Reviewing AI trust practices and pairing them with internal governance frameworks gives compliance officers a solid foundation for managing these risks without giving up the efficiency gains. The resource on overcoming questionnaire challenges offers practical guidance on building those safeguards into your existing workflows.

The real-world challenge: Why balanced AI and human collaboration wins

Here is a perspective most vendors will not share with you: 100% automation is not the goal, and teams that chase it tend to create more liability than they eliminate.

The organizations seeing the strongest outcomes are not the ones who handed everything to the AI. They are the ones who built a disciplined hybrid process: AI handles the high-volume, well-documented questions at speed, while trained SMEs focus their attention on novel, high-stakes, or ambiguous items. Experts recommend an 80/20 AI-to-human workflow for optimal outcomes, and that ratio reflects hard-won operational reality.

The workflow structure matters as much as the technology. Teams that define a clear KNOW, REVIEW, APPROVE cycle before they go live avoid the headline-grabbing failures that make executives nervous about AI adoption. The rigor is not bureaucracy. It is what makes the speed sustainable.

Most organizations overestimate what the AI alone delivers and underestimate how much the human layer amplifies it. Build automation best practices into your process from day one, and your team will trust the outputs enough to actually use them at scale.

See AI-driven questionnaires in action

If your team is still spending double-digit hours on individual questionnaires, the gap between where you are and where you could be is significant. Skypher's security questionnaires automation platform combines 90%+ accuracy, 40+ TPRM integrations, and real-time collaboration into a single workflow your compliance and sales teams will actually use.

From automated parsing of every major questionnaire format to automated review cycles that flag duplicates and route approvals intelligently, Skypher is built for the scale and rigor that mid-to-large organizations require. Run a pilot on your next questionnaire and see the time savings firsthand.

Frequently asked questions

How do AI-driven security questionnaires work?

AI-driven platforms use agentic AI and knowledge management to automatically match incoming questions to approved responses, generate drafts, and route them for SME sign-off before submission.

What's the main risk of using AI for security questionnaires?

Risks include hallucinations and workflow gaps, especially at edge cases. The most effective mitigation is combining confidence-threshold routing with named SME reviewers and mandatory source citations on every response.

How much time can AI automation actually save?

It cuts process time by 70-80%, turning a 12-hour questionnaire into roughly 2 to 3 hours, with further gains as your knowledge base matures and the AI learns your specific response patterns.

How can organizations measure the ROI of AI-driven questionnaires?

Track SME hours per questionnaire, deal cycle time, and monthly questionnaire volume. Firms save 150+ hours a month across 15 or more active deals, making the ROI calculation straightforward once you have two to three months of baseline data.
