Over 77% of organizations experienced AI-related breaches in 2024, exposing critical vulnerabilities in vendor risk management and compliance workflows. Security questionnaires have grown far more complex in response and now include 80+ AI-specific questions aligned with frameworks such as the NIST AI RMF and the OWASP LLM Top 10. For compliance and risk management professionals at medium to large tech and financial organizations, managing these assessments manually is no longer sustainable. AI-driven automation can reduce questionnaire completion time by up to 80% while maintaining regulatory accuracy and audit readiness. This article explores how AI reshapes compliance management, how to balance automation with human oversight, and how to navigate evolving regulatory demands.

Table of Contents

- Key takeaways

- Understanding AI risks in compliance management

- How AI enhances security questionnaire efficiency and accuracy

- Navigating regulatory frameworks with AI-driven assessments

- Best practices for governance and risk mitigation with AI in compliance

- Enhance your compliance process with Skypher's AI tools

- Frequently asked questions

Key takeaways

| Point | Details |
|---|---|
| Questionnaire speed boost | AI-driven automation can reduce security questionnaire completion time by up to 80% while maintaining regulatory accuracy. |
| Hybrid AI-human workflows | Hybrid AI-human workflows balance automation with regulatory requirements to preserve control and auditability. |
| EU AI Act compliance | EU AI Act compliance requires tailored risk assessments and transparency to satisfy regulators and stakeholders. |
| Governance and monitoring | Continuous governance and monitoring are essential to identify AI risks and prevent drift, bias, and incidents. |
| Expert review boosts accuracy | Benchmarking and expert review improve the reliability of AI-driven compliance results. |

Understanding AI risks in compliance management

AI delivers real efficiency gains, but it also introduces specific risks that compliance teams must address proactively. The more than 77% of organizations that faced AI-related breaches in 2024 were exposed through vulnerabilities stemming from vendor AI models, including prompt injection attacks, algorithmic bias, and supply chain compromises. These risks are not theoretical. They manifest in real compliance failures, regulatory penalties, and reputational damage.

Security questionnaires have evolved to capture these threats. Modern assessments now include over 80 AI-specific questions designed to evaluate vendor compliance with standards like NIST AI RMF and OWASP LLM Top 10. These questions probe model training data quality, bias mitigation strategies, explainability mechanisms, and incident response protocols. Organizations that rely on generic checklists without dynamic audits leave themselves exposed to AI privacy and fairness risks that regulators increasingly scrutinize.

Human oversight remains essential to mitigate AI risks such as bias, hallucinations, and lack of explainability. AI models produce probabilistic outputs, which can fall short of the deterministic, auditable results that compliance frameworks demand. Without human validation, organizations risk accepting AI-generated responses that fail regulatory standards or misrepresent actual controls. Compliance demands a shift from black-box automation to transparent, explainable AI systems in which humans retain decision authority.

Key AI risks in compliance management include:

- Prompt injection and adversarial attacks that manipulate AI model outputs

- Algorithmic bias that produces discriminatory or unfair results for vulnerable populations

- Model drift where AI performance degrades over time without continuous monitoring

- Supply chain vulnerabilities in third-party AI components and training data

- Lack of explainability that prevents auditors from understanding AI decision logic

As one compliance expert noted:

"AI-related risks require a paradigm shift from static compliance checklists to dynamic, continuous monitoring frameworks that balance automation with human judgment."

Understanding these risks provides the context for implementing AI solutions that enhance efficiency without compromising compliance integrity. The next section explores how AI addresses security questionnaire challenges through intelligent automation.

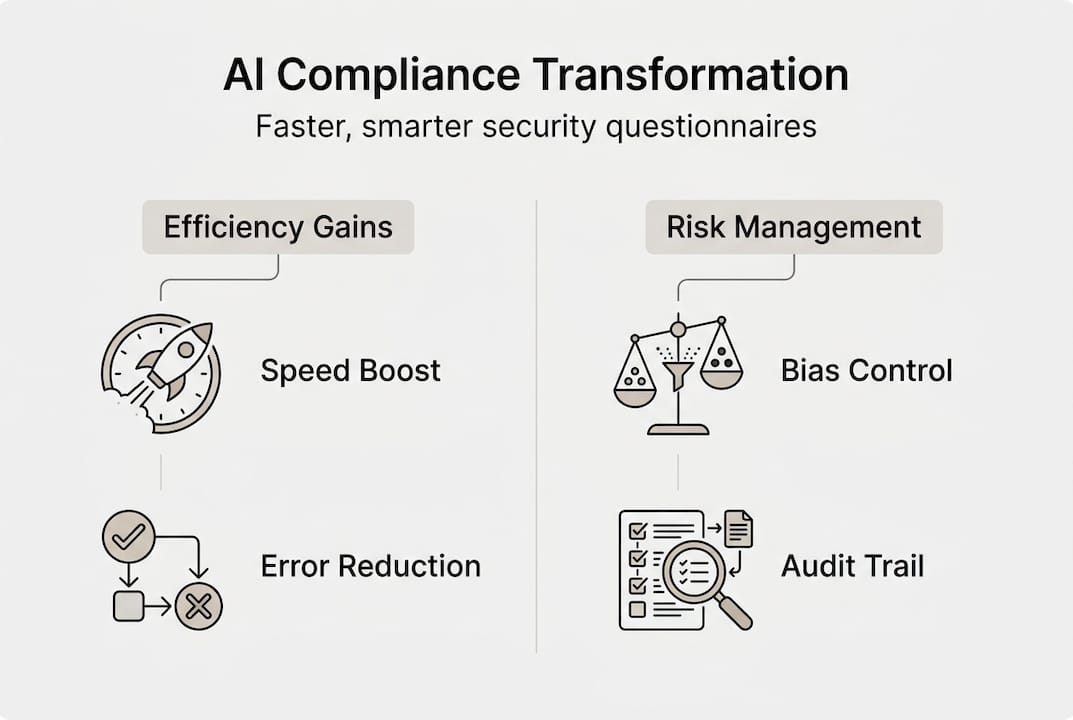

How AI enhances security questionnaire efficiency and accuracy

AI-driven automation transforms security questionnaire workflows from labor-intensive manual processes into streamlined, intelligent systems. AI reduces SOC 2 compliance time by 25-35% and questionnaire completion time by 60-80%, delivering substantial efficiency gains without sacrificing accuracy. These improvements stem from hybrid workflows where AI auto-fills 70-90% of questionnaire responses while humans validate outputs for compliance defensibility.

AI-powered recommendation engines analyze historical responses, organizational documentation, and regulatory frameworks to suggest contextually appropriate answers. These engines learn from validated responses, improving accuracy over time. Easy import and export workflows enable seamless collaboration across compliance, legal, and technical teams, eliminating version control issues and communication gaps that plague manual processes.
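As a rough illustration of how a recommendation engine can reuse validated answers, the sketch below matches a new question against stored ones using string similarity from Python's standard library. The stored questions and answers, the `suggest_answer` function, and the 0.6 cutoff are all assumptions made for illustration; production tools typically rely on richer semantic matching, and this is not a description of any specific product.

```python
from difflib import SequenceMatcher

# Hypothetical store of previously validated question/answer pairs.
VALIDATED_ANSWERS = [
    ("Do you encrypt customer data at rest?",
     "Yes. See the data encryption policy."),
    ("Do you have a documented incident response plan?",
     "Yes. The plan is reviewed and tested annually."),
    ("How do you test AI models for bias?",
     "Models are tested for disparate impact before each release."),
]


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio between two strings, ignoring case."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def suggest_answer(question: str, min_score: float = 0.6):
    """Suggest the answer of the closest validated question.

    Returns (answer, score). The answer is None when no stored question is
    similar enough, which signals that a person should draft the response.
    """
    scored = [(similarity(question, q), a) for q, a in VALIDATED_ANSWERS]
    score, answer = max(scored, key=lambda pair: pair[0])
    return (answer, score) if score >= min_score else (None, score)
```

Returning the score alongside the suggestion lets reviewers see how close the match was before they accept it.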

Real-world implementations demonstrate dramatic results. Organizations using security questionnaire automation tools report accelerated deal closures, reduced compliance overhead, and improved vendor relationship management. One financial services firm cut questionnaire response time from 40 hours to 8 hours per assessment, freeing compliance staff for strategic risk analysis rather than data entry.

The efficiency gap between manual and AI-driven workflows is substantial:

| Process Step | Manual Time | AI-Driven Time | Time Savings |
|---|---|---|---|
| Initial questionnaire review | 2-3 hours | 15-20 minutes | 85-90% |
| Response drafting | 20-30 hours | 2-4 hours | 85-90% |
| Cross-team collaboration | 8-12 hours | 1-2 hours | 85-90% |
| Quality review and validation | 4-6 hours | 2-3 hours | 40-50% |
| Final submission preparation | 2-3 hours | 30-45 minutes | 70-80% |
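To see how the per-step figures combine into an overall number, the following sketch recomputes savings from the table using the midpoint of each range. Using midpoints is an assumption made here for simplicity; the table itself does not say how its percentages were derived.

```python
# (manual_low, manual_high, ai_low, ai_high) in hours, copied from the table.
STEPS = {
    "Initial questionnaire review": (2, 3, 15 / 60, 20 / 60),
    "Response drafting": (20, 30, 2, 4),
    "Cross-team collaboration": (8, 12, 1, 2),
    "Quality review and validation": (4, 6, 2, 3),
    "Final submission preparation": (2, 3, 30 / 60, 45 / 60),
}


def midpoint_savings(manual_low, manual_high, ai_low, ai_high):
    """Fraction of time saved for one step, comparing range midpoints."""
    manual_mid = (manual_low + manual_high) / 2
    ai_mid = (ai_low + ai_high) / 2
    return 1 - ai_mid / manual_mid


def total_savings(steps):
    """Return (manual_hours, ai_hours, fraction_saved) summed over all steps."""
    manual = sum((lo + hi) / 2 for lo, hi, _, _ in steps.values())
    ai = sum((lo + hi) / 2 for _, _, lo, hi in steps.values())
    return manual, ai, 1 - ai / manual


if __name__ == "__main__":
    for name, hours in STEPS.items():
        print(f"{name}: {midpoint_savings(*hours):.0%}")
    manual, ai, saved = total_savings(STEPS)
    print(f"Total: {manual:.1f} h manual, {ai:.1f} h AI-driven, {saved:.0%} saved")
```

With midpoints, the totals come to 45 hours of manual effort against roughly 7.9 hours with AI assistance, a saving of about 82%. The overall figure is lower than most per-step figures because quality review, where savings are smallest, still requires substantial human time.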

To implement AI automation in questionnaire processing, follow these steps:

- Audit existing questionnaire workflows to identify repetitive, high-volume response patterns suitable for automation.

- Centralize organizational documentation, policies, and previous questionnaire responses in a structured knowledge base that AI can access.

- Deploy AI tools that support your questionnaire formats and integrate with existing compliance platforms like OneTrust or ServiceNow.

- Establish human-in-the-loop validation protocols where subject matter experts review AI-generated responses before submission.

- Monitor AI performance metrics including accuracy rates, time savings, and validation rejection rates to continuously improve the system.

- Train compliance teams on AI tool capabilities and limitations to ensure appropriate use and oversight.
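The validation and monitoring steps above can be sketched in a few lines. The example assumes the AI tool reports a confidence score between 0 and 1 for each draft; that score, the `Draft` class, and the 0.8 threshold are hypothetical. Every draft still needs human approval before submission, and the confidence score only determines review order and depth.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Draft:
    question: str
    ai_answer: str
    confidence: float                # hypothetical 0..1 score from the AI tool
    approved: Optional[bool] = None  # set by a human reviewer; None = pending


def review_queue(drafts: List[Draft], threshold: float = 0.8) -> List[Tuple[str, Draft]]:
    """Return pending drafts, least confident first, tagged with a review depth."""
    pending = sorted((d for d in drafts if d.approved is None),
                     key=lambda d: d.confidence)
    return [("full review" if d.confidence < threshold else "spot check", d)
            for d in pending]


def ready_to_submit(drafts: List[Draft]) -> bool:
    """A questionnaire is submittable only when every draft has human approval."""
    return bool(drafts) and all(d.approved is True for d in drafts)


def rejection_rate(drafts: List[Draft]) -> float:
    """Share of reviewed drafts that the reviewer rejected."""
    reviewed = [d for d in drafts if d.approved is not None]
    if not reviewed:
        return 0.0
    return sum(1 for d in reviewed if d.approved is False) / len(reviewed)
```

Tracking `rejection_rate` over time gives a simple signal for the monitoring step: a rising rate suggests the knowledge base or the model needs attention.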

Pro Tip: Start with lower-risk, high-volume questionnaires to build confidence in AI accuracy before expanding to complex, high-stakes assessments. This phased approach allows teams to refine validation protocols and build institutional knowledge.

Organizations that automate security questionnaires report improved response quality as well as time savings. AI eliminates the inconsistencies that arise when different team members answer similar questions differently across assessments. Standardized, validated responses strengthen compliance posture and reduce audit findings. Some deployments report cutting compliance time by as much as 95%, which shows the potential of well-implemented AI systems.

Navigating regulatory frameworks with AI-driven assessments

Regulatory frameworks increasingly shape how organizations deploy AI in compliance management. The EU AI Act requires comprehensive risk management systems, high-quality datasets, transparency, post-market monitoring, and human oversight for high-risk AI applications. These requirements directly impact how compliance teams structure vendor risk assessments and security questionnaires.

The EU AI Act mandates that high-risk AI vendors implement risk management systems (RMS) that identify, assess, and mitigate risks throughout the AI lifecycle. Technical documentation must detail model architecture, training data provenance, performance metrics, and limitations. Logging requirements ensure auditability by capturing inputs, outputs, and decision logic. These obligations transform security questionnaires from simple yes/no checklists into detailed assessments of AI governance maturity.

Conformity assessments can follow internal procedures (Annex VI) for most high-risk AI systems or require third-party assessment (Annex VII) for specific applications like biometric identification. Post-market monitoring obligations require vendors to continuously track AI performance, identify emerging risks, and report serious incidents to authorities. Security questionnaires aligned to these regulations help organizations identify compliance gaps early, before they escalate into regulatory violations or operational failures.

Key EU AI Act requirements map to questionnaire categories as follows:

| EU AI Act Requirement | Questionnaire Category | Assessment Focus |
|---|---|---|
| Risk Management System | Governance and Controls | RMS documentation, risk identification processes, mitigation strategies |
| Data Governance | Data Privacy and Quality | Training data sources, quality assurance, bias testing, data lineage |
| Technical Documentation | Architecture and Design | Model specifications, performance metrics, limitations, validation results |
| Transparency and Explainability | AI Ethics and Fairness | Explainability mechanisms, user disclosure, decision transparency |
| Human Oversight | Operational Controls | Human-in-the-loop protocols, override capabilities, monitoring procedures |
| Post-Market Monitoring | Incident Response | Monitoring systems, incident reporting, performance tracking, update procedures |

Organizations should streamline security questionnaire self-assessment by mapping internal AI governance practices to regulatory requirements before vendor assessments begin. This proactive approach identifies control gaps and enables remediation before external scrutiny. Internal assessments also establish baseline expectations for vendor responses, making it easier to evaluate third-party AI compliance.

Pro Tip: Maintain a living repository of regulatory requirement mappings that links specific EU AI Act articles to questionnaire sections and internal control documentation. This repository accelerates both self-assessments and vendor evaluations while ensuring consistency across assessments.
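One way to keep such a repository is as structured data that can be queried for gaps. The sketch below is a minimal example: the requirement names and questionnaire categories come from the mapping table in this section, while the article numbers are our reading of Regulation (EU) 2024/1689 and should be verified against the official text. The document file names are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class RequirementMapping:
    requirement: str
    article: str                        # verify against the official regulation text
    questionnaire_category: str
    control_docs: Tuple[str, ...] = ()  # hypothetical internal document names


MAPPINGS = [
    RequirementMapping("Risk Management System", "Art. 9",
                       "Governance and Controls", ("ai-risk-policy.md",)),
    RequirementMapping("Data Governance", "Art. 10",
                       "Data Privacy and Quality", ("data-lineage-standard.md",)),
    RequirementMapping("Technical Documentation", "Art. 11",
                       "Architecture and Design"),
    RequirementMapping("Transparency and Explainability", "Art. 13",
                       "AI Ethics and Fairness"),
    RequirementMapping("Human Oversight", "Art. 14",
                       "Operational Controls", ("review-procedure.md",)),
    RequirementMapping("Post-Market Monitoring", "Art. 72",
                       "Incident Response"),
]


def by_category(mappings: List[RequirementMapping], category: str) -> List[RequirementMapping]:
    """Return the requirements assessed under one questionnaire category."""
    return [m for m in mappings if m.questionnaire_category == category]


def control_gaps(mappings: List[RequirementMapping]) -> List[str]:
    """List requirements that have no internal control documentation linked yet."""
    return [m.requirement for m in mappings if not m.control_docs]
```

Running `control_gaps(MAPPINGS)` before a vendor assessment surfaces the requirements that still lack linked evidence.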

Beyond the EU AI Act, organizations must consider other regulations, such as GDPR for data privacy, along with sector-specific rules like financial services regulations for algorithmic trading and healthcare regulations for clinical decision support. Best practices for automating questionnaires include building in the flexibility to accommodate multiple regulatory frameworks simultaneously, so that AI tools can adapt questionnaire templates to different compliance contexts.

Regulatory alignment transforms security questionnaires from administrative burdens into strategic compliance tools. Organizations that embed regulatory requirements into questionnaire design gain early visibility into vendor compliance maturity, enabling informed risk decisions before contractual commitments.

Best practices for governance and risk mitigation with AI in compliance

Strong governance separates successful AI implementations from compliance failures. Despite AI's potential, only 25% of organizations have strong AI governance. This governance lag contributes to data issues, and a lack of expertise remains widespread across industries. Effective governance requires explainable AI models, continuous bias audits, and human-in-the-loop controls that maintain accountability and transparency.

Explainable AI (XAI) enables compliance teams to understand how models generate recommendations, making it possible to validate outputs against regulatory requirements and organizational policies. Without explainability, auditors cannot verify that AI decisions align with compliance obligations, which creates regulatory risk. XAI techniques such as feature importance analysis, interpretable surrogate models like decision trees, and counterfactual explanations provide the transparency regulators increasingly demand.

Continuous bias audits prevent AI systems from producing discriminatory outcomes that violate fairness principles and regulatory standards. Bias can enter AI models through training data, feature selection, or algorithmic design. Regular audits test model outputs across demographic groups, geographic regions, and other relevant categories to identify disparate impacts. When bias is detected, governance protocols must trigger model retraining or output adjustments to restore fairness.
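A basic audit of this kind compares the rate of favorable outcomes across groups. The sketch below flags any group whose rate falls below 80% of the best-performing group's rate. That 0.8 cutoff is an assumption borrowed from the "four-fifths" heuristic used in US employment contexts and is not something the frameworks discussed here prescribe; choose a threshold appropriate to your own regulatory context.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Compute the share of favorable outcomes per group.

    records: iterable of (group_label, favorable_outcome) pairs.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        favorable[group] += int(outcome)
    return {g: favorable[g] / totals[g] for g in totals}


def disparate_impact(records: Iterable[Tuple[str, bool]],
                     threshold: float = 0.8) -> Dict[str, float]:
    """Return groups whose rate, relative to the highest group rate, is below threshold."""
    rates = selection_rates(records)
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {}  # no favorable outcomes at all, so ratios are undefined
    return {g: r / best for g, r in rates.items() if r / best < threshold}
```

A non-empty result is a prompt for investigation, not proof of bias; small sample sizes in particular can produce misleading ratios.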

Common pitfalls undermine AI governance in compliance:

- Over-reliance on AI automation without human validation leads to accepting inaccurate or non-compliant responses

- Insufficient monitoring of model drift allows AI performance to degrade undetected over time

- Lack of cross-functional collaboration isolates AI expertise from compliance knowledge, creating blind spots

- Inadequate documentation prevents auditors from understanding AI decision logic and control effectiveness

- Failure to establish clear accountability for AI outputs creates confusion when errors occur

Key governance components for compliant AI-driven processes include:

- Model governance framework defining approval processes, performance thresholds, and retirement criteria

- Data governance policies ensuring training data quality, lineage, and privacy protection

- Human oversight protocols specifying when human validation is required and who has override authority

- Continuous monitoring systems tracking model accuracy, bias metrics, and operational performance

- Incident response procedures for addressing AI errors, security breaches, or compliance violations

- Regular audits and assessments validating AI controls against regulatory requirements

- Cross-functional AI governance committees with representation from compliance, legal, IT, and business units
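The continuous monitoring component can start very simply. The sketch below tracks whether human reviewers accepted recent AI-generated answers and raises a drift flag when the acceptance rate over a rolling window drops below a baseline. The `DriftMonitor` class, window size, and tolerance are illustrative assumptions, not recommended values.

```python
from collections import deque
from typing import Optional


class DriftMonitor:
    """Flag drift when recent reviewer acceptance falls below a baseline."""

    def __init__(self, baseline_accuracy: float, window: int = 50,
                 tolerance: float = 0.05):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # keeps only the newest `window` results

    def record(self, accepted: bool) -> None:
        """Store whether a reviewer accepted an AI-generated answer."""
        self.outcomes.append(accepted)

    def recent_accuracy(self) -> Optional[float]:
        """Acceptance rate over the current window, or None if nothing is recorded."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def drifted(self) -> bool:
        """True once the window is full and accuracy is below baseline minus tolerance."""
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.recent_accuracy() < self.baseline - self.tolerance
```

Waiting for a full window before flagging avoids false alarms from the first few reviews, at the cost of a slower first signal.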

Organizations that streamline security questionnaire workflows embed governance controls directly into AI tools, making compliance the default rather than an afterthought. Automated validation checks flag responses that deviate from established policies, triggering human review before submission. Version control and audit trails document who reviewed and approved each response, supporting regulatory examinations.
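An audit trail is more useful to examiners when tampering is detectable. One possible design, sketched below, chains each review record to the previous one with a SHA-256 hash so that any later modification breaks verification. The field names and the chaining scheme are assumptions for illustration and do not describe how any particular platform stores its audit data.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict, List


def _digest(body: Dict[str, str]) -> str:
    """Hash a record deterministically by serializing it with sorted keys."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(trail: List[Dict[str, str]], question_id: str, answer: str,
                 reviewer: str, decision: str) -> Dict[str, str]:
    """Append a review record linked to the hash of the previous record."""
    body = {
        "question_id": question_id,
        "answer": answer,
        "reviewer": reviewer,
        "decision": decision,  # e.g. "approved" or "rejected"
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prev_hash": trail[-1]["hash"] if trail else "",
    }
    entry = dict(body, hash=_digest(body))
    trail.append(entry)
    return entry


def verify_trail(trail: List[Dict[str, str]]) -> bool:
    """Check that every record is unmodified and correctly linked to its predecessor."""
    prev_hash = ""
    for entry in trail:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

In practice the trail would live in durable, access-controlled storage; the in-memory list here only demonstrates the linking and verification logic.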

Pro Tip: Invest in cross-functional training that builds AI literacy among compliance professionals and compliance knowledge among AI practitioners. This shared understanding enables more effective collaboration and reduces the risk of governance gaps between technical implementation and regulatory requirements.

Good governance also depends on answering security questionnaires well: balancing completeness with clarity, providing evidence without overwhelming reviewers, and maintaining consistency across assessments. AI tools support these goals by standardizing response formats, linking answers to supporting documentation, and flagging inconsistencies that require resolution.

Governance is not a one-time implementation but an ongoing practice that evolves with AI capabilities, regulatory requirements, and organizational risk appetite. Organizations that treat governance as a strategic enabler rather than a compliance checkbox position themselves to scale AI confidently while managing risks effectively.

Enhance your compliance process with Skypher's AI tools

Transforming compliance management from manual burden to strategic advantage requires the right technology partner. Skypher's security questionnaire automation platform implements the AI-driven strategies discussed throughout this article, delivering measurable efficiency gains while maintaining regulatory compliance and audit readiness.

Skypher's AI-powered recommendation engine analyzes your organizational documentation, previous questionnaire responses, and regulatory frameworks to suggest contextually accurate answers. The platform learns from validated responses, continuously improving recommendation quality. Easy import and export workflows enable seamless collaboration across compliance, legal, and technical teams, eliminating version control issues and accelerating review cycles. Organizations report reducing questionnaire completion time by 60-80% while improving response consistency and quality.

Frequently asked questions

What are the main AI risks to consider in compliance management?

Bias, prompt injection, lack of explainability, and model drift represent the primary AI risks in compliance. Bias can produce discriminatory outcomes that violate fairness principles and regulations. Prompt injection attacks manipulate AI outputs to bypass controls or extract sensitive information. Lack of explainability prevents auditors from validating AI decisions against compliance requirements. Model drift degrades AI performance over time without continuous monitoring. Compliance with regulations like the EU AI Act requires comprehensive risk management systems, transparency mechanisms, and human oversight to mitigate these risks effectively.

How can AI improve security questionnaire response processes?

AI automates up to 90% of questionnaire responses through intelligent recommendation engines that analyze organizational documentation and historical answers. This automation reduces manual effort, eliminates inconsistencies, and accelerates completion time by 60-80%. Hybrid workflows ensure human validation for compliance defensibility while capturing efficiency gains. AI tools also enable seamless collaboration across teams through easy import and export capabilities, version control, and audit trails that support regulatory examinations.

What steps should organizations take to ensure compliance with the EU AI Act?

Implement risk management systems that identify and mitigate AI risks throughout the lifecycle. Maintain comprehensive technical documentation detailing model architecture, training data, performance metrics, and limitations. Conduct conformity assessments under the Annex VI internal procedure or, where required, the Annex VII third-party assessment. Operate post-market monitoring systems that track AI performance, identify emerging risks, and report serious incidents. Establish human oversight protocols so that humans retain decision authority and can override AI outputs when necessary. Use security questionnaires aligned to the EU AI Act to assess vendor compliance and identify gaps early.

Why is governance important when using AI in compliance?

Strong governance ensures explainability, bias mitigation, and auditability, and it reduces operational risks from AI deployment. Only 25% of organizations have strong AI governance, and this governance lag leads to data errors and regulatory non-compliance. Effective governance includes model approval processes, continuous monitoring, human oversight protocols, and cross-functional collaboration between AI and compliance teams. Without governance, organizations risk accepting inaccurate AI outputs, failing to detect model drift, and violating regulatory requirements that demand transparency and accountability in AI decision-making.