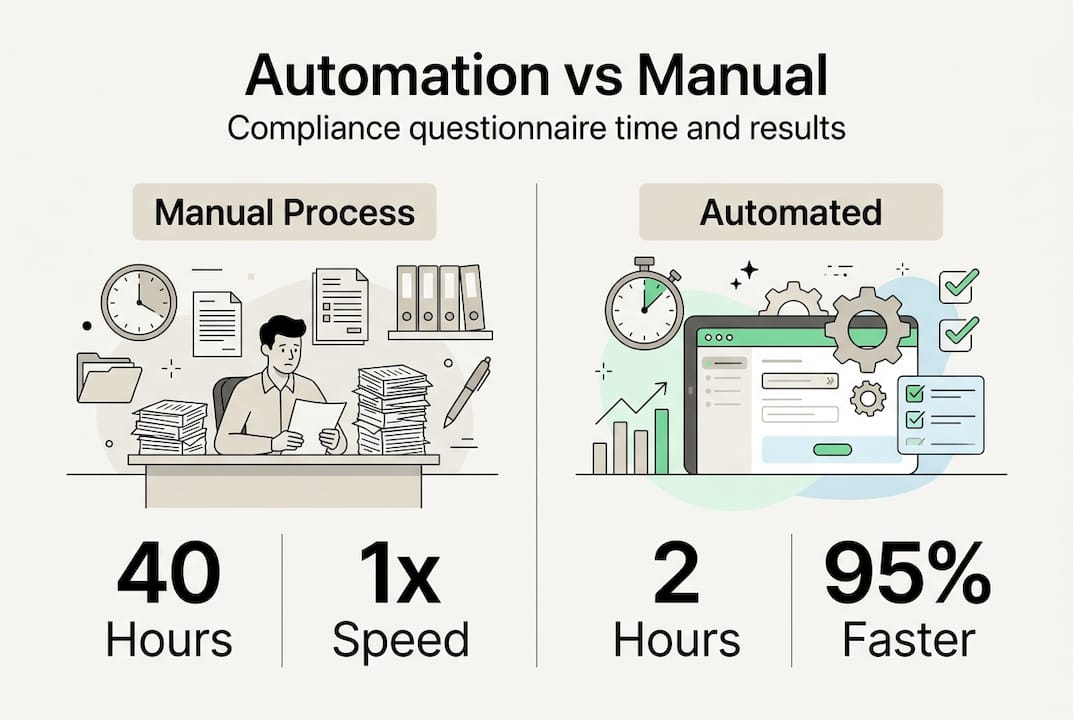

Security questionnaires consume thousands of hours yearly for cybersecurity teams at tech and finance organizations. Manual processing of a single comprehensive questionnaire takes 20 to 40 hours, delaying vendor approvals and straining subject matter experts. Questionnaire automation uses AI to compress this timeline to under 4 hours, cutting effort by up to 95%. This article explains how automation works, quantifies its benefits through real benchmarks, addresses implementation challenges, and provides practical deployment guidance for compliance officers seeking to transform their security review workflows.

Table of Contents

- Key takeaways

- Understanding questionnaire automation in cybersecurity

- Benefits and performance benchmarks of automation versus manual processing

- Challenges, nuances, and expert best practices for effective deployment

- Implementing questionnaire automation: practical steps and integration tips

- Streamline your security questionnaires with Skypher's AI solutions

- FAQ

Key Takeaways

| Point | Details |
|---|---|
| Time savings | Automation cuts questionnaire completion from 20 to 40 hours to under 4 hours, reducing effort by up to 95 percent. |
| Source citations | The system drafts answers with citations tied to source materials for verification. |
| Hybrid validation | Human reviewers validate high confidence answers and handle edge cases to maintain accuracy. |
| Version control | Strict version control and audit trails ensure accurate responses and traceability for auditors. |

Understanding questionnaire automation in cybersecurity

Questionnaire automation applies artificial intelligence to parse, analyze, and respond to security questionnaires without manual data entry. The technology relies on natural language processing and retrieval augmented generation to interpret questions, search centralized knowledge repositories, and draft responses with supporting evidence. Organizations build knowledge bases by tagging compliance documentation such as SOC 2 reports, ISO 27001 controls, penetration test results, and policy manuals. AI systems then parse incoming questionnaires in formats ranging from Excel spreadsheets to OneTrust portals, extracting individual questions for processing.

Semantic matching algorithms compare each question against the knowledge base, identifying the most relevant documentation and control statements. The system retrieves context, drafts an answer, and appends citations linking to source materials. Human reviewers validate responses, approve high confidence answers immediately, and refine edge cases requiring nuanced judgment. This hybrid approach balances speed with accuracy, ensuring compliance rigor while eliminating repetitive manual labor. Understanding what a cybersecurity questionnaire is, and why it is worth automating, helps teams recognize where automation delivers maximum value.

The automation workflow follows these core steps:

- Upload questionnaire in any format including Excel, Word, PDF, or portal integrations

- AI parses and extracts individual questions using proprietary models

- Semantic matching searches the knowledge base for relevant controls and evidence

- System drafts responses with citations linking to source documentation

- Human reviewers approve, edit, or escalate questions requiring subject matter expert input

- Finalized responses export to original format or integrate directly into third party risk management platforms
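The matching and drafting steps above can be sketched in a few lines. This is a minimal illustration, not how any particular product works: it swaps a real embedding model for a plain bag of words cosine similarity, and `KnowledgeEntry`, `draft_answer`, and the sample sources are hypothetical names chosen for the example.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class KnowledgeEntry:
    source: str  # hypothetical citation label, e.g. a policy section
    text: str    # control statement or evidence excerpt


def tokenize(text: str) -> Counter:
    """Lowercase bag of words; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def draft_answer(question: str, kb: list[KnowledgeEntry]) -> dict:
    """Pick the best matching entry and return a draft with its citation."""
    if not kb:
        return {"question": question, "draft": None, "citation": None, "confidence": 0.0}
    q = tokenize(question)
    score, best = max(
        ((cosine(q, tokenize(e.text)), e) for e in kb), key=lambda pair: pair[0]
    )
    return {
        "question": question,
        "draft": best.text,
        "citation": best.source,
        "confidence": round(score, 2),
    }


if __name__ == "__main__":
    kb = [
        KnowledgeEntry("Encryption Policy 3.2", "Customer data at rest is encrypted with AES-256."),
        KnowledgeEntry("Pen Test Summary", "Penetration tests are performed annually by a third party."),
    ]
    print(draft_answer("Do you encrypt data at rest?", kb))
```

The similarity score doubles as a rough confidence value, which is what lets a later step decide whether a reviewer can approve the draft quickly or should escalate it.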

Core technology components powering automation include:

- Natural language processing engines that interpret question intent and context

- Retrieval augmented generation models that ground answers in verified documentation

- Document vectorization systems that chunk and index knowledge base content

- Semantic search algorithms that match questions to relevant controls

- Version control mechanisms that track knowledge base updates and maintain audit trails

- Integration APIs connecting to platforms like OneTrust, ServiceNow, and Slack
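To make the "chunk and index" component concrete, here is a minimal sketch of splitting a document into overlapping word windows before indexing. The window size and overlap are assumptions for illustration; real systems often chunk by tokens, headings, or control IDs instead.

```python
def chunk_words(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into windows of `size` words that share `overlap` words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("size must be positive and overlap must be in [0, size)")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reaches the end of the text
    return chunks


if __name__ == "__main__":
    policy = "All production databases are encrypted at rest and backups are tested quarterly"
    for chunk in chunk_words(policy, size=6, overlap=2):
        print(chunk)
```

Overlap keeps a sentence that straddles a boundary retrievable from at least one chunk, at the cost of a slightly larger index.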

Pro Tip: Maintain strict version control on your knowledge base with timestamps and change logs. When auditors or customers request evidence, you need to demonstrate which version of a policy or control was active when you submitted a specific questionnaire response. This audit trail protects your organization and builds trust with stakeholders. For deeper insight into implementation best practices, explore AI driven approaches to cybersecurity questionnaire essentials.
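As a rough sketch of what "which version was active when" means in practice, the lookup below returns the policy version in effect on a given submission date. `PolicyVersion` and `version_active_on` are hypothetical names, and the dates are invented for the example.

```python
from bisect import bisect_right
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class PolicyVersion:
    effective: date  # date the version took effect
    version: str
    summary: str


def version_active_on(history: list[PolicyVersion], when: date) -> Optional[PolicyVersion]:
    """Return the latest version whose effective date is on or before `when`."""
    ordered = sorted(history, key=lambda v: v.effective)
    idx = bisect_right([v.effective for v in ordered], when)
    return ordered[idx - 1] if idx else None


if __name__ == "__main__":
    history = [
        PolicyVersion(date(2023, 1, 1), "v1", "Annual access reviews"),
        PolicyVersion(date(2024, 6, 1), "v2", "Quarterly access reviews"),
    ]
    submitted = date(2024, 3, 15)  # hypothetical questionnaire submission date
    print(version_active_on(history, submitted))
```

Storing the returned version identifier alongside each submitted answer is one simple way to answer an auditor's question months later.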

Benefits and performance benchmarks of automation versus manual processing

Automation transforms questionnaire workflows from labor intensive bottlenecks into streamlined processes that accelerate vendor approvals and sales cycles. Manual processing requires 20 to 40 hours per comprehensive questionnaire, with turnaround times stretching to weeks as subject matter experts juggle competing priorities. Automation compresses this timeline to 1 to 4 hours, enabling same day or next day responses that keep deals moving forward. Organizations processing dozens of questionnaires monthly reclaim thousands of hours annually, redirecting expertise toward strategic security initiatives rather than repetitive data entry.

Real world case studies demonstrate measurable impact. LILT achieved 74% auto answer rates on security questionnaires, allowing their small team to handle enterprise customer volumes without scaling headcount. Amplitude increased throughput 4x, completing questionnaires in days rather than weeks and accelerating a $12M deal that hinged on rapid security review completion. These improvements stem from AI accuracy rates exceeding 90% on standard questions, with human review layers catching edge cases and maintaining quality standards.

| Metric | Manual Processing | Automated Processing |
|---|---|---|
| Time per questionnaire | 20 to 40 hours | 1 to 4 hours |
| Turnaround time | 2 to 4 weeks | 1 to 2 days |
| Auto answer rate | 0% | 70% to 90% |
| Subject matter expert effort | 100% of questions | 10% to 30% of questions |
| Annual capacity (per team member) | 50 to 100 questionnaires | 200 to 400 questionnaires |
| Response consistency | Variable | Standardized |
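The per questionnaire figures can be turned into a rough annual estimate. The sketch below is only arithmetic on the ranges quoted in this section; the volume of 24 questionnaires per month is an assumed value standing in for "dozens", not a benchmark.

```python
def percent_saved(manual_hours: float, automated_hours: float) -> float:
    """Share of manual effort removed, as a percentage."""
    return 100 * (manual_hours - automated_hours) / manual_hours


def hours_reclaimed(per_month: int, manual_hours: float, automated_hours: float) -> float:
    """Annual hours saved for a given monthly questionnaire volume."""
    return per_month * 12 * (manual_hours - automated_hours)


if __name__ == "__main__":
    # Conservative end of the ranges: 20 manual hours versus 4 automated hours.
    print(percent_saved(20, 4))        # 80.0
    # Favorable end: 20 manual hours versus 1 automated hour.
    print(percent_saved(20, 1))        # 95.0
    # Assumed volume of 24 questionnaires per month, conservative hours.
    print(hours_reclaimed(24, 20, 4))  # 4608
```

Even at the conservative end of both ranges, the assumed volume frees several thousand hours per year, which is consistent with the "thousands of hours annually" claim.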

Key benefits driving adoption include:

- Time savings freeing subject matter experts for strategic security work

- Cost reduction through labor efficiency and faster sales cycles

- Scalability enabling small teams to handle enterprise questionnaire volumes

- Faster sales enablement reducing proof of concept and contract timelines

- Improved consistency through standardized answers and centralized knowledge

- Enhanced accuracy via evidence linking and version controlled documentation

Organizations implementing questionnaire automation report up to 95% time savings and 4x throughput improvements, transforming security reviews from bottlenecks into competitive advantages that accelerate revenue.

Teams that automate security questionnaires for faster responses find that proper implementation delivers ROI within the first quarter through deal acceleration and resource optimization. The advantages of AI in cybersecurity questionnaire automation extend beyond speed, improving accuracy and enabling data driven insights into common customer concerns.

Challenges, nuances, and expert best practices for effective deployment

Automation introduces risks that require proactive mitigation strategies to maintain compliance integrity and customer trust. Outdated knowledge bases produce inaccurate responses when security controls evolve but documentation lags behind. AI hallucinations risk inaccuracies when models generate plausible sounding answers unsupported by actual evidence, potentially misrepresenting your security posture to customers and auditors. Custom question formats and ambiguous phrasing challenge semantic matching algorithms, requiring human judgment to interpret intent correctly. Organizations with weak underlying security practices face the temptation to automate responses that overstate capabilities, creating legal and reputational exposure.

| Consideration | Automation Approach | Manual Approach | Common Pitfalls |
|---|---|---|---|
| Knowledge base accuracy | Requires continuous updates and version control | Subject matter experts recall current state | Stale documentation produces wrong answers |
| Edge case handling | AI flags low confidence for human review | Expert judgment on all questions | Over reliance on AI without validation |
| Evidence linking | Automated citation to source controls | Manual attachment gathering | Missing or broken evidence links |
| Custom questions | Semantic matching with fallback to human | Expert interpretation | AI misinterprets ambiguous phrasing |
| Audit trail | Automated logging of all changes | Manual documentation | Insufficient tracking for compliance |

Defensive practices that maximize automation benefits while maintaining rigor include:

- Centralized knowledge base updates with designated owners for each domain

- Evidence linking that connects every answer to specific controls and documentation

- Version control systems tracking when policies and controls change

- Subject matter expert reviews for questions flagged as low confidence or high risk

- Regular validation testing using real questionnaires before production deployment

- Audit trails logging who approved each answer and when

- Continuous accuracy monitoring comparing AI suggestions against expert corrections

Pro Tip: Run automation in shadow mode initially, generating draft responses that subject matter experts review against their manual answers. This parallel processing builds confidence in AI accuracy, identifies knowledge base gaps, and trains your team on effective review workflows before full deployment. Hybrid models with human checkpoints and version control mitigate risks while preserving speed advantages.
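A shadow mode comparison can start as something very simple. The sketch below flags questions where the AI draft diverges from the expert's manual answer, using `difflib.SequenceMatcher` from the Python standard library as a crude text similarity proxy. The 0.8 threshold and the `shadow_report` name are assumptions; character level similarity will miss answers that are worded differently but mean the same thing, so treat flagged items as a review queue rather than a verdict.

```python
from difflib import SequenceMatcher


def shadow_report(pairs, threshold=0.8):
    """Compare AI drafts with manual answers.

    pairs: list of (question, ai_draft, manual_answer) tuples.
    Returns (agreement_rate, disagreements), where disagreements lists
    (question, similarity) for drafts below the threshold.
    """
    disagreements = []
    for question, ai_draft, manual in pairs:
        similarity = SequenceMatcher(None, ai_draft.lower(), manual.lower()).ratio()
        if similarity < threshold:
            disagreements.append((question, round(similarity, 2)))
    agreement_rate = 1 - len(disagreements) / len(pairs) if pairs else 0.0
    return agreement_rate, disagreements


if __name__ == "__main__":
    pairs = [
        ("Encryption at rest?", "Yes, AES-256 at rest.", "Yes, AES-256 at rest."),
        ("Pen testing cadence?", "We perform annual penetration tests.", "No."),
    ]
    rate, flagged = shadow_report(pairs)
    print(rate, flagged)
```

Questions that land in the flagged list repeatedly are good candidates for knowledge base updates before full deployment.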

High risk scenarios demand human oversight regardless of AI confidence scores. Questions about incident response procedures, data breach history, or specific regulatory compliance require expert validation to avoid misstatements that could trigger contract disputes or regulatory scrutiny. Organizations overcome the challenges of AI in security questionnaires by establishing clear escalation criteria and maintaining subject matter expert engagement on complex topics. Following best practices for automating security questionnaires ensures quality while capturing efficiency gains.
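Escalation criteria like these can be written down as an explicit rule. The following is a minimal sketch: the keyword list is derived from the topics named above, while the 0.9 threshold, the `Route` names, and the simple substring check are assumptions that a real deployment would replace with its own criteria.

```python
from enum import Enum


class Route(Enum):
    QUICK_APPROVAL = "reviewer quick approval"
    EXPERT_REVIEW = "subject matter expert review"


# Keywords taken from the high risk topics named in the text; the matching
# approach itself is a simplification for illustration.
HIGH_RISK_KEYWORDS = ("incident response", "data breach", "regulatory")
AUTO_APPROVE_THRESHOLD = 0.9  # assumed value


def route(question: str, confidence: float) -> Route:
    """High risk topics always go to an expert, whatever the confidence."""
    text = question.lower()
    if any(keyword in text for keyword in HIGH_RISK_KEYWORDS):
        return Route.EXPERT_REVIEW
    if confidence >= AUTO_APPROVE_THRESHOLD:
        return Route.QUICK_APPROVAL
    return Route.EXPERT_REVIEW


if __name__ == "__main__":
    print(route("Describe your data breach history.", 0.99))
    print(route("Do you encrypt data at rest?", 0.95))
    print(route("Do you encrypt data at rest?", 0.40))
```

Checking the topic before the score is the design choice that matters here: a confident answer about breach history still reaches an expert.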

Implementing questionnaire automation: practical steps and integration tips

Successful automation projects follow a structured roadmap from preparation through continuous improvement. Organizations achieve the fastest time to value by focusing initial efforts on knowledge base quality and incremental rollout rather than attempting full automation immediately. Start by centralizing and tagging a version controlled knowledge base, using security trained AI models, and integrating human checkpoints at decision points that require judgment.

Implementation roadmap:

- Assess current questionnaire volume, processing time, and pain points to establish baseline metrics

- Centralize compliance documentation including SOC 2 reports, ISO certifications, policies, and control evidence

- Tag knowledge base content with metadata enabling semantic search across security domains

- Select AI automation tools offering integration with your third party risk management platforms

- Configure workflows routing low confidence questions to appropriate subject matter experts

- Test automation using 5 to 10 recent questionnaires, comparing AI responses against manual answers

- Train reviewers on approval workflows and escalation criteria for edge cases

- Deploy automation in production starting with lower risk questionnaires

- Monitor accuracy metrics and subject matter expert feedback to identify knowledge base gaps

- Iterate on tagging, evidence linking, and workflow rules based on real usage patterns

Integration tips maximize automation value by connecting questionnaire workflows to broader compliance systems. Link automation platforms to live control monitoring tools so answers reflect current security posture rather than static documentation. Ensure audit trail integration captures who approved each response, when approvals occurred, and which knowledge base version supported the answer. Configure notifications alerting subject matter experts when high priority questionnaires require review, preventing bottlenecks from unmonitored queues.
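An audit trail entry needs only a few fields to capture who approved a response, when, and against which knowledge base version. The sketch below appends one JSON line per approval to an in memory list; the field names and the `record_approval` helper are hypothetical, and a production system would write to durable, append only storage instead.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ApprovalRecord:
    question_id: str
    approved_by: str
    approved_at: str  # ISO 8601 timestamp in UTC
    kb_version: str   # knowledge base version that supported the answer


def record_approval(
    log: list[str],
    question_id: str,
    approved_by: str,
    kb_version: str,
    now: Optional[datetime] = None,
) -> ApprovalRecord:
    """Append one JSON line per approval and return the record."""
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    record = ApprovalRecord(question_id, approved_by, timestamp, kb_version)
    log.append(json.dumps(asdict(record), sort_keys=True))
    return record


if __name__ == "__main__":
    audit_log: list[str] = []
    record_approval(audit_log, "Q-17", "reviewer@example.com", "kb-2.1")
    print(audit_log[0])
```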

Pro Tip: Involve subject matter experts early in knowledge base tagging and workflow design. Their domain expertise identifies which questions require human judgment and which are safe to automate. Early engagement builds trust in the system and creates advocates who champion adoption across the organization. Teams that streamline their security questionnaire workflows through collaborative implementation see faster adoption and higher accuracy than teams that rely on top down deployments.

Continuous improvement sustains automation value as security practices evolve. Establish regular knowledge base review cycles tied to control changes, policy updates, and audit findings. Track which questions frequently require manual correction, using these patterns to refine semantic matching rules and expand knowledge base coverage. Monitor customer feedback on response quality, addressing gaps that could impact deal progression or customer trust. Organizations following best practices for automating questionnaires treat automation as an ongoing program requiring investment in knowledge management and process refinement.
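Tracking which questions need manual correction can begin with a simple tally per topic. This sketch assumes each reviewed answer carries a topic tag and a flag saying whether the reviewer changed the AI draft; both the tags and the `correction_rates` name are invented for the example.

```python
from collections import Counter


def correction_rates(reviews):
    """Return (tag, correction_rate) pairs, highest rate first.

    reviews: iterable of (tag, was_corrected) tuples.
    """
    total, corrected = Counter(), Counter()
    for tag, was_corrected in reviews:
        total[tag] += 1
        if was_corrected:
            corrected[tag] += 1
    rates = [(tag, corrected[tag] / total[tag]) for tag in total]
    return sorted(rates, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    reviews = [
        ("encryption", False),
        ("encryption", False),
        ("incident response", True),
        ("incident response", False),
        ("access control", True),
    ]
    for tag, rate in correction_rates(reviews):
        print(f"{tag}: {rate:.0%}")
```

Topics at the top of the list point to where the knowledge base is thin or stale, which gives the regular review cycle a concrete starting point.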

Streamline your security questionnaires with Skypher's AI solutions

Skypher transforms security questionnaire workflows through AI powered automation that combines speed with compliance rigor. Our platform parses questionnaires in any format using proprietary models stronger than generic AI tools, mapping questions to your centralized knowledge base and generating draft responses with evidence citations in under 1 minute, even for 200 question assessments. Our AI security questionnaire automation integrates with over 40 third party risk management platforms including OneTrust and ServiceNow, enabling seamless workflows across your existing toolchain.

Key capabilities accelerating your compliance workflows include:

- Proprietary AI models parsing every questionnaire format with higher reliability than generic tools

- Semantic search across Confluence, Notion, Google Drive, OneDrive, and SharePoint documentation

- Automated review cycles and duplicate detection preventing redundant work

- AI powered recommendation engine suggesting improvements based on customer patterns

- Real time collaboration through Slack and Microsoft Teams integrations

- Multilingual support for global compliance requirements

- Version controlled knowledge bases with complete audit trails

Pro Tip: Schedule a demo to see how Skypher handles your actual questionnaires, demonstrating accuracy on your specific security controls and documentation. Seeing the platform process real questions from your pipeline builds confidence in automation quality and helps identify knowledge base preparation needed before deployment.

FAQ

What is questionnaire automation in cybersecurity?

Questionnaire automation uses artificial intelligence to parse security questionnaires, match questions to centralized knowledge bases, and generate draft responses with supporting evidence citations. The technology combines natural language processing and retrieval augmented generation to interpret question intent and retrieve relevant controls automatically. This approach reduces manual data entry while maintaining compliance rigor through human review workflows for complex or high risk questions.

How much time can automation save compared to manual processing?

Automation reduces questionnaire completion from 20 to 40 hours down to 1 to 4 hours, cutting effort by up to 95%. Turnaround times improve from 2 to 4 weeks to 1 to 2 days, enabling same day or next day responses that accelerate vendor approvals and sales cycles. Organizations processing dozens of questionnaires monthly reclaim thousands of hours annually, redirecting subject matter experts toward strategic security initiatives rather than repetitive documentation tasks.

What are common challenges when implementing questionnaire automation?

Key challenges include outdated knowledge bases producing inaccurate responses, AI hallucinations generating plausible but unsupported answers, custom question formats confusing semantic matching, and edge cases requiring human judgment. Organizations also face risks from over reliance on automation without validation, insufficient evidence linking, and inadequate audit trails. A hybrid human AI approach with regular knowledge base updates, version control, and subject matter expert review workflows mitigates these issues while preserving efficiency gains.

How can organizations ensure accurate and compliant responses?

Use version controlled, centrally tagged knowledge bases that link answers to specific controls and evidence documentation. Implement human review workflows routing low confidence or high risk questions to subject matter experts for validation. Maintain comprehensive audit trails logging who approved each response, when approvals occurred, and which knowledge base version supported the answer. Regular validation testing comparing AI suggestions against expert corrections identifies gaps and refines semantic matching accuracy over time.