TL;DR:

- Most AI initiatives in security compliance fail because of poor data quality, weak governance, and unclear success criteria.

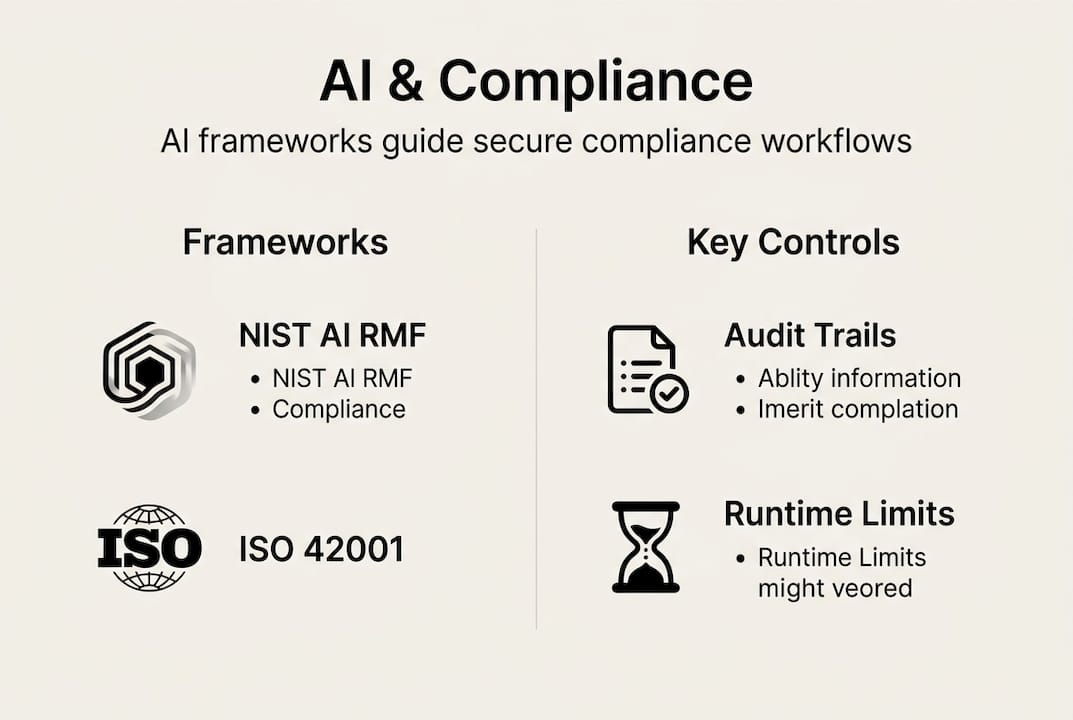

- Specialized, purpose-built AI tools aligned with frameworks such as the NIST AI RMF, ISO 42001, and the EU AI Act are key to effective compliance automation.

- Implementing structured maturity models and feedback loops helps organizations systematically move from manual to intelligent AI-driven security questionnaire workflows.

Most organizations assume that buying an AI tool is the same as solving a compliance problem. It is not. A striking number of AI initiatives in security and compliance never move past the pilot stage, and those that do often fail to generate measurable returns. For compliance and risk management professionals in tech and finance, this is not just a technology problem. It is a strategy problem. This guide cuts through the noise and gives you a practical, evidence-backed roadmap for making AI work in your security questionnaire workflows, from understanding the right frameworks to operationalizing automation that your team will actually trust.

Table of Contents

- Understanding the evolving intersection of AI and security compliance

- Key frameworks guiding AI-driven compliance

- From aspiration to reality: AI adoption gaps and maturity models

- Best practices for operationalizing AI in security questionnaires

- Our perspective: Why AI in security compliance is more than automation

- Ready to streamline your compliance process with AI?

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| High AI failure rates | Over half of AI projects fail to deliver the expected value, and compliance initiatives without clear frameworks are no exception. |
| Frameworks enable trust | Aligning with the NIST AI RMF, ISO 42001, and the EU AI Act is key to reliable, compliant AI adoption. |
| Maturity models bridge gaps | Maturity models and feedback loops help organizations move from manual processes to effective AI automation. |
| Operational excellence matters | Continuous monitoring and human-AI collaboration are crucial for sustainable, trustworthy compliance solutions. |

Understanding the evolving intersection of AI and security compliance

Security questionnaires are one of the most labor-intensive tasks in third-party risk management (TPRM). A single enterprise vendor assessment can involve hundreds of questions, multiple reviewers, and weeks of back-and-forth. AI promises to change that, and in many cases it does. But the gap between promise and delivery is wider than most teams expect.

The data is sobering. According to IBM research, only 18% of production AI projects deliver significant business value, and Gartner finds that only 53% of AI prototypes ever reach production. In other words, most AI investments stall before they create any real impact. For compliance teams under pressure to reduce review cycles and cut costs, that is a critical risk.

Why does this happen? The most common failure points are:

- Unclear success criteria: Teams deploy AI without defining what "better" looks like in measurable terms.

- Poor data quality: AI models trained on inconsistent or outdated security documentation produce unreliable outputs.

- Lack of governance: Without human oversight built into the workflow, errors compound and trust erodes.

- Vendor overpromising: Generic large language models are not purpose-built for the nuanced language of security controls.

AI's impact on security questionnaires is most visible when organizations treat it as a process redesign, not a plug-in. Teams that succeed tend to start with a narrow, well-defined use case, measure outcomes rigorously, and expand only after proving value.

| AI adoption stage | Share of AI projects | Typical outcome |
|---|---|---|
| Stuck at pilot or prototype | ~47% | Limited production use |
| Deployed to production | ~53% | Mixed results |
| Delivering significant value | ~18% of production projects (~10% of all) | Measurable ROI |
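The two headline figures compound. As a rough back-of-the-envelope sketch, assuming the IBM and Gartner percentages describe the same population of projects (the underlying studies may not), the share of all prototypes that end up delivering significant value is simply their product:

```python
# Figures quoted above: share of prototypes reaching production (Gartner)
# and share of production projects delivering significant value (IBM).
REACH_PRODUCTION = 0.53
VALUE_GIVEN_PRODUCTION = 0.18


def value_rate_of_all_prototypes(reach: float, value_given_prod: float) -> float:
    """Share of all prototypes that end up delivering significant value.

    Assumes the two rates can be multiplied, i.e. the 18% applies to the
    same population of projects that made it past the 53% gate.
    """
    return reach * value_given_prod


rate = value_rate_of_all_prototypes(REACH_PRODUCTION, VALUE_GIVEN_PRODUCTION)
print(f"{rate:.1%} of prototypes deliver significant value")  # 9.5% of prototypes ...
```

That works out to roughly one prototype in ten, which is why measuring outcomes well beyond the pilot stage matters.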

For 2026, the trend is shifting toward specialized AI tools with built-in compliance logic, audit trails, and domain-specific training. Generic AI is losing ground to purpose-built platforms that understand the structure of frameworks like SOC 2, ISO 27001, and NIST CSF. Organizations that align with industry benchmarks for data security maturity are better positioned to extract real value from their AI investments.

Key frameworks guiding AI-driven compliance

Closing that gap starts with the rules and frameworks that compliance teams must respect. In 2026, three frameworks dominate the conversation: the NIST AI Risk Management Framework (AI RMF), ISO 42001, and the EU AI Act. Together they set the governing principles and controls that shape how organizations can responsibly deploy AI in compliance workflows.

Here is how each one maps to security questionnaire automation:

- NIST AI RMF: A voluntary U.S. framework organized around four functions: Govern, Map, Measure, and Manage. For security questionnaire teams, it provides a practical structure for identifying AI risks, setting performance benchmarks, and establishing accountability.

- ISO 42001: An international standard for AI management systems. It mirrors the structure of ISO 27001, making it familiar to security teams. It requires documented policies, risk assessments, and continuous improvement cycles, all of which apply directly to automated questionnaire workflows.

- EU AI Act: A binding regulation that classifies AI systems by risk level. Security questionnaire automation generally falls into lower-risk categories, but systems that influence credit, insurance, or employment decisions face stricter requirements.

| Framework | Origin | Binding? | Key focus for compliance teams |

|---|---|---|---|

| NIST AI RMF | U.S. | Voluntary | Risk governance and measurement |

| ISO 42001 | International | Certifiable | AI management system |

| EU AI Act | EU | Mandatory (in EU) | Risk classification and oversight |

For international teams, the challenge is layering these frameworks without creating redundancy. The good news is that they overlap significantly in core principles: transparency, human oversight, and ongoing monitoring. Mapping your AI-assisted security review process to all three at once is more achievable than it sounds when you start from a unified control set.
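A unified control set can be as simple as a list of internal controls, each tagged with the framework requirements it supports, plus a check for gaps. The sketch below is illustrative only: the control IDs and descriptions are hypothetical, and the clause and article references (ISO 42001 clauses 8 and 9, EU AI Act Articles 12 and 14, which formally apply to high-risk systems) are reference points to verify against the official texts before relying on them.

```python
from dataclasses import dataclass, field


@dataclass
class Control:
    """One internal control, tagged with the framework requirements it supports."""
    control_id: str
    description: str
    maps_to: dict[str, list[str]] = field(default_factory=dict)


# Hypothetical controls; the mapping is illustrative, not authoritative.
UNIFIED_CONTROLS = [
    Control(
        "AI-01",
        "Human reviewer approves low-confidence answers before release",
        {
            "NIST AI RMF": ["Manage"],
            "ISO 42001": ["Clause 8 (Operation)"],
            "EU AI Act": ["Art. 14 (Human oversight)"],
        },
    ),
    Control(
        "AI-02",
        "Every generated answer is logged with its sources and reviewer",
        {
            "NIST AI RMF": ["Govern"],
            "EU AI Act": ["Art. 12 (Record-keeping)"],
        },
    ),
    Control(
        "AI-03",
        "Answer accuracy is measured and reviewed monthly",
        {
            "NIST AI RMF": ["Measure"],
            "ISO 42001": ["Clause 9 (Performance evaluation)"],
        },
    ),
]

FRAMEWORKS = ["NIST AI RMF", "ISO 42001", "EU AI Act"]


def coverage_gaps(controls: list[Control], frameworks: list[str]) -> dict[str, list[str]]:
    """For each control, list the frameworks it does not yet map to."""
    return {
        c.control_id: [fw for fw in frameworks if fw not in c.maps_to]
        for c in controls
    }


for control_id, missing in coverage_gaps(UNIFIED_CONTROLS, FRAMEWORKS).items():
    print(control_id, "missing:", ", ".join(missing) or "none")
# AI-01 missing: none
# AI-02 missing: ISO 42001
# AI-03 missing: EU AI Act
```

Keeping one control list and tagging it per framework, rather than maintaining three separate checklists, is what avoids the redundancy described above.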

Runtime controls are another critical consideration. Each framework emphasizes that AI systems must operate within defined boundaries, with humans able to intervene, correct, and audit outputs. For security questionnaire automation, this means your AI tool should produce explainable answers, flag low-confidence responses, and maintain a complete audit trail.
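One way to make the audit trail tamper-evident is to hash-chain the log, so that each entry records the hash of the one before it. This is a minimal sketch under stated assumptions: the field names are hypothetical, the log lives in memory, and a production system would persist entries to write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def append_entry(log: list[dict], question_id: str, answer: str,
                 confidence: float, sources: list[str],
                 reviewer: Optional[str]) -> dict:
    """Append an AI-generated answer to a hash-chained audit log."""
    entry = {
        "question_id": question_id,
        "answer": answer,
        "confidence": confidence,
        "sources": sources,      # documents the answer was drawn from
        "reviewer": reviewer,    # None if released without human review
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    payload = json.dumps(entry, sort_keys=True).encode()
    entry["hash"] = hashlib.sha256(payload).hexdigest()
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash and check each entry points at its predecessor."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash:
            return False
        payload = json.dumps(body, sort_keys=True).encode()
        if hashlib.sha256(payload).hexdigest() != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, "Q-17", "Data at rest is encrypted.", 0.93,
             ["encryption-policy"], None)
append_entry(audit_log, "Q-18", "Access reviews run quarterly.", 0.61,
             ["access-control-policy"], "reviewer-1")
print(verify_chain(audit_log))  # True
audit_log[0]["answer"] = "edited after the fact"
print(verify_chain(audit_log))  # False: the tampered entry no longer matches its hash
```

Because each hash covers the previous hash, silently editing or deleting an earlier answer breaks every check that follows it.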

From aspiration to reality: AI adoption gaps and maturity models

Now that you understand the frameworks, it is crucial to assess where your organization falls on the AI adoption journey and where the common hurdles lie. Gartner and Deloitte both highlight the aspiration-versus-reality gap and recommend structured maturity models to help organizations make systematic progress.

Most TPRM teams sit at one of three maturity levels:

- Level 1 (Manual): Questionnaires are handled entirely by humans, often using spreadsheets and email. Response times are slow, and quality depends on individual expertise.

- Level 2 (Assisted): Some automation exists, such as answer libraries or template matching, but human review is still required for most responses. Inconsistency remains a problem.

- Level 3 (Intelligent): AI models generate contextually accurate answers, flag anomalies, and integrate with TPRM platforms. Human oversight is focused on exceptions, not routine tasks.

Moving from Level 1 to Level 3 is not a single leap. It requires deliberate investment in data quality, model training, and process redesign. The organizations that fail are usually those that try to skip Level 2 entirely.
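The three levels can be expressed as a simple self-assessment. The capability flags below are hypothetical signals chosen to mirror the level descriptions; the key design choice is that Level 3 is unreachable unless the Level 2 foundations are present, which encodes the warning about skipping Level 2.

```python
from dataclasses import dataclass


@dataclass
class Capabilities:
    """Hypothetical self-assessment signals; adapt them to your own criteria."""
    has_answer_library: bool             # reusable, approved answers exist
    uses_template_matching: bool         # past answers are matched to new questions
    ai_generates_answers: bool           # AI drafts answers from the knowledge base
    humans_review_exceptions_only: bool  # reviewers focus on flagged answers


def maturity_level(c: Capabilities) -> int:
    """Map capabilities to Level 1 (Manual), 2 (Assisted), or 3 (Intelligent).

    Level 3 is granted only when the Level 2 foundations are also in place.
    """
    level_2_foundations = c.has_answer_library and c.uses_template_matching
    if not level_2_foundations:
        return 1
    if c.ai_generates_answers and c.humans_review_exceptions_only:
        return 3
    return 2


# An organization that bought an AI platform but has no answer library:
print(maturity_level(Capabilities(False, False, True, True)))  # 1
```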

"The biggest mistake we see is organizations buying an enterprise AI platform before they have clean, structured knowledge bases to feed it. The AI is only as good as the data behind it."

Pro Tip: Before evaluating any AI vendor, audit your existing security documentation. If your policies, controls, and past questionnaire responses are scattered across email threads and shared drives, fix that first. A well-organized knowledge base is the single biggest predictor of AI success in compliance.
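A first pass of that audit can be automated. The sketch below assumes you can export a document inventory with an owner, a location, and a last-reviewed date; the one-year staleness threshold and the `policy-repo` location name are hypothetical placeholders.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Document:
    title: str
    location: str          # e.g. "policy-repo", "shared-drive", "email"
    owner: Optional[str]
    last_reviewed: date


def audit_documents(docs, today: date, max_age_days: int = 365,
                    approved_locations=frozenset({"policy-repo"})):
    """Return (title, problems) for each document that needs attention."""
    findings = []
    for d in docs:
        problems = []
        if d.owner is None:
            problems.append("no owner")
        if (today - d.last_reviewed).days > max_age_days:
            problems.append("stale")
        if d.location not in approved_locations:
            problems.append(f"outside repository ({d.location})")
        if problems:
            findings.append((d.title, problems))
    return findings


docs = [
    Document("Encryption policy", "policy-repo", "sec-team", date(2025, 11, 1)),
    Document("Incident response plan", "shared-drive", None, date(2023, 2, 15)),
]
for title, problems in audit_documents(docs, today=date(2026, 1, 15)):
    print(title, "->", ", ".join(problems))
# Incident response plan -> no owner, stale, outside repository (shared-drive)
```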

For AI risk management to work in practice, you also need feedback loops. Every time a reviewer edits or overrides an AI-generated answer, that correction should feed back into the model. Without this, accuracy plateaus and teams lose confidence in the tool.
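The simplest form of this loop does not retrain anything: it stores the reviewer's final wording as the reference answer for that question and tracks how often AI drafts are accepted unchanged. The sketch below assumes answers are keyed by a stable question ID; a platform that fine-tunes or re-ranks its models would consume the same correction records differently.

```python
from collections import Counter


class FeedbackLoop:
    """Minimal sketch: reviewer decisions update an answer library and
    track how often AI drafts are accepted unchanged."""

    def __init__(self) -> None:
        self.library: dict[str, str] = {}  # question_id -> approved answer
        self.outcomes = Counter()          # counts of "accepted" / "edited"

    def record_review(self, question_id: str, ai_answer: str, final_answer: str) -> None:
        outcome = "accepted" if final_answer == ai_answer else "edited"
        self.outcomes[outcome] += 1
        # The reviewer's final wording becomes the reference answer next time.
        self.library[question_id] = final_answer

    def acceptance_rate(self) -> float:
        total = sum(self.outcomes.values())
        return self.outcomes["accepted"] / total if total else 0.0


loop = FeedbackLoop()
loop.record_review("Q-1", "We enforce MFA.", "We enforce MFA.")
loop.record_review("Q-2", "Backups are weekly.", "Backups are daily and encrypted.")
print(loop.acceptance_rate())  # 0.5
print(loop.library["Q-2"])     # Backups are daily and encrypted.
```

Watching the acceptance rate over time is one way to tell whether accuracy is still improving or has plateaued.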

Best practices for operationalizing AI in security questionnaires

With a maturity model in mind, the next step is translating theory into daily practice. Here is how teams can operationalize AI for measurable security compliance results, drawing on workflows that leading tech and finance organizations have already validated.

Gartner's six-stage AI security workflow covers data acquisition, model training, output generation, deployment, compliance monitoring, and feedback. Here is how to apply each stage practically:

- Data acquisition: Centralize all security policies, past questionnaire responses, and control evidence in a single, searchable repository.

- Model training: Use domain-specific training data. Generic models do not understand the difference between a SOC 2 Type II control and a GDPR Article 32 requirement.

- Output generation: Configure the AI to produce answers with confidence scores. Low-confidence answers should automatically route to a human reviewer.

- Deployment: Integrate the AI tool with your existing TPRM platforms, ticketing systems, and collaboration tools to minimize workflow disruption.

- Compliance monitoring: Set up automated alerts for regulatory changes that could affect your standard answers. Stale responses are a significant audit risk.

- Feedback loops: Build a structured review process where every human correction improves the model over time.
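The output-generation routing rule can be sketched in a few lines. This assumes the answering tool exposes a per-answer confidence score between 0 and 1; the 0.8 threshold and the `always_review` set for high-stakes questions are hypothetical defaults to tune against your own reviewer data.

```python
from dataclasses import dataclass


@dataclass
class DraftAnswer:
    question_id: str
    text: str
    confidence: float  # 0.0-1.0, assumed to be reported by the answering tool


def route(drafts, threshold=0.8, always_review=frozenset()):
    """Split AI drafts into a light spot-check queue and a full-review queue.

    Low-confidence drafts, and any question flagged as high-stakes, go to
    full human review regardless of the model's confidence.
    """
    spot_check, needs_review = [], []
    for d in drafts:
        if d.confidence < threshold or d.question_id in always_review:
            needs_review.append(d)
        else:
            spot_check.append(d)
    return spot_check, needs_review


drafts = [
    DraftAnswer("Q-1", "All production data is encrypted at rest.", 0.95),
    DraftAnswer("Q-2", "Backups are tested annually.", 0.55),
    DraftAnswer("Q-3", "We carry cyber insurance.", 0.90),
]
spot_check, needs_review = route(drafts, always_review={"Q-3"})
print([d.question_id for d in needs_review])  # ['Q-2', 'Q-3']
```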

Pro Tip: Finance teams running third-party vendor assessments report the biggest time savings when they automate the most frequently repeated questions first. Start with your top 20% of recurring questions; in many answer libraries, that covers roughly 80% of response volume.
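Before committing to the 20% rule, you can check it against your own history. The function below ranks distinct questions by how often they appear and reports what fraction of total volume the top share covers; the question names and counts are made up for illustration.

```python
from collections import Counter


def coverage_of_top_share(question_counts: Counter, top_share: float = 0.2) -> float:
    """Fraction of total question volume covered by the most frequent
    `top_share` of distinct questions (at least one question)."""
    ranked = [count for _, count in question_counts.most_common()]
    k = max(1, round(len(ranked) * top_share))
    return sum(ranked[:k]) / sum(ranked)


# Hypothetical counts of how often each question appeared last year.
volume = Counter({
    "encryption-at-rest": 120, "mfa": 100, "pen-testing": 20,
    "incident-response": 15, "backups": 12, "sso": 10,
    "data-retention": 9, "subprocessors": 6, "bcp": 5, "dpo-contact": 3,
})
print(f"{coverage_of_top_share(volume):.0%}")  # 73%
```

In this invented sample the top two questions (20% of ten) cover about 73% of volume; your own distribution may be flatter or steeper.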

Operational pitfalls to avoid include over-relying on AI for high-stakes responses without review, neglecting to update your knowledge base after policy changes, and failing to document AI-generated answers for audit purposes. Trust is built incrementally, and teams that communicate AI limitations honestly tend to see faster adoption from reviewers.
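Stale answers can be caught mechanically by comparing each library answer's approval date with the last-updated date of the policy it was derived from. The record layout below is an assumption; most answer libraries store something equivalent.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class LibraryAnswer:
    question_id: str
    source_doc: str   # policy the answer was derived from
    approved_on: date


def stale_answers(answers, policy_updated: dict[str, date]):
    """Question IDs whose answer was approved before its source policy last changed."""
    return [
        a.question_id
        for a in answers
        if a.source_doc in policy_updated
        and a.approved_on < policy_updated[a.source_doc]
    ]


answers = [
    LibraryAnswer("Q-1", "access-control-policy", date(2025, 3, 1)),
    LibraryAnswer("Q-2", "backup-policy", date(2025, 9, 1)),
]
updates = {"access-control-policy": date(2025, 6, 10), "backup-policy": date(2025, 2, 1)}
print(stale_answers(answers, updates))  # ['Q-1']
```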

Our perspective: Why AI in security compliance is more than automation

Here is the uncomfortable truth that most AI vendors will not tell you: speed is not the goal. Accuracy and trust are. We have seen organizations automate their way into compliance fatigue, where questionnaire volume increases because the tool makes it easy, but the quality of risk decisions actually declines because human judgment gets bypassed.

The teams that get lasting value from AI in security compliance treat it as a smart teammate, not an infallible system. They use AI to handle the repetitive, well-defined work, and they free up their best people to focus on the ambiguous, high-stakes decisions that actually require expertise. That is a fundamentally different mindset from "let the AI handle it."

Responsible AI in compliance also means auditing your model's behavior regularly. Bias can creep in when training data over-represents certain vendor types or control frameworks. Human oversight is not a checkbox. It is the mechanism that keeps your compliance program trustworthy. For teams thinking about long-term AI compliance benefits, the real return on investment comes from combining AI efficiency with the kind of expert judgment that no model can replicate.
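A first-pass bias audit can compare how often each framework (or vendor type) appears in the answer data against the mix you expect in incoming questionnaires. This is a crude sketch: the labels, expected shares, and 1.5x tolerance are hypothetical, and a flagged category is a prompt for human review, not proof of bias.

```python
from collections import Counter


def overrepresented(labels, expected_share, tolerance=1.5):
    """Return categories whose observed share exceeds `tolerance` times the
    expected share. Categories missing from `expected_share` are treated as
    expected 0, so any occurrence of them is flagged."""
    counts = Counter(labels)
    total = len(labels)
    return sorted(
        cat
        for cat, n in counts.items()
        if n / total > tolerance * expected_share.get(cat, 0.0)
    )


training_labels = (
    ["SOC 2"] * 60 + ["ISO 27001"] * 25 + ["NIST CSF"] * 10 + ["PCI DSS"] * 5
)
expected = {"SOC 2": 0.3, "ISO 27001": 0.3, "NIST CSF": 0.2, "PCI DSS": 0.2}
print(overrepresented(training_labels, expected))  # ['SOC 2']
```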

Ready to streamline your compliance process with AI?

If you are ready to move from theory to action, the right tooling makes all the difference. Skypher's AI questionnaire automation platform is purpose-built for compliance teams in tech and finance, with proprietary AI models that parse every questionnaire format and generate accurate, audit-ready responses in under a minute.

With automated review and detection built in, your team spends less time on repetitive tasks and more time on the decisions that matter. Skypher integrates with over 40 TPRM platforms, Slack, MS Teams, and your existing knowledge management tools. Explore the full Skypher platform and see how leading organizations are turning security questionnaires from a bottleneck into a competitive advantage.

Frequently asked questions

Which AI frameworks are most critical for security compliance in 2026?

The NIST AI RMF, ISO 42001, and EU AI Act are the foundational frameworks for AI-driven security compliance, each defining risk management, oversight, and accountability standards that directly apply to questionnaire automation.

Why do most AI initiatives in security compliance fail to deliver value?

Most failures trace back to poor data quality, missing governance structures, and the absence of feedback loops. Only 18% of production AI projects deliver significant value, which means deployment alone is not enough without continuous oversight and iteration.

How can organizations close the AI maturity gap for security compliance?

Using structured AI maturity models and building feedback loops into every review cycle allows teams to progress systematically from manual processes to intelligent automation without skipping critical steps.

What are the main workflow stages for operationalizing AI in compliance?

The six-stage AI security workflow covers data acquisition, model training, output generation, deployment, compliance monitoring, and feedback loops, each of which must be implemented deliberately for AI to deliver consistent compliance value.
