TL;DR:

- Well-structured, repeatable audit processes reduce surprises and improve operational trust.

- Automating evidence collection and control mapping enhances efficiency and compliance accuracy.

- Continuous operational discipline is key to sustained security posture beyond just passing audits.

A critical audit finding surfacing three weeks before a major contract close is not hypothetical. It happens to well-resourced tech and finance teams every quarter, and the fallout goes beyond delayed deals. Regulatory penalties, eroded client trust, and scrambled remediation sprints are the real cost of an unstructured audit process. The good news is that a repeatable, well-documented approach dramatically reduces those surprises. This guide walks security and compliance professionals through every phase of the information security audit lifecycle, from scoping to post-audit monitoring, with practical techniques, collaboration shortcuts, and the metrics that actually matter.

Table of Contents

- Understanding the information security audit lifecycle

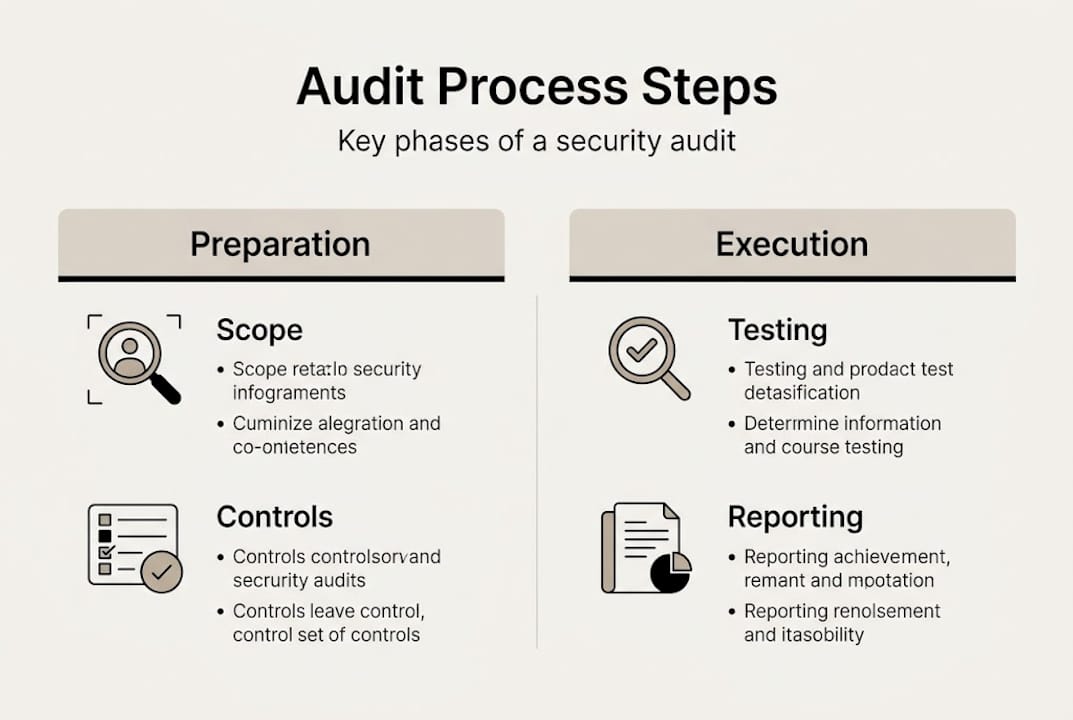

- Preparing for your audit: Scope, controls, and evidence readiness

- Executing the audit: Techniques and tools for testing controls

- Reporting, remediation, and metrics: Measuring and closing the audit

- Common pitfalls and expert shortcuts for smoother audits

- A security leader's perspective: Operational discipline is your true audit outcome

- Streamline your next audit with advanced automation

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Follow structured steps | A disciplined audit process ensures consistency, evidence quality, and repeatable success. |
| Prep controls and evidence | Careful preparation, control mapping, and pre-collected samples speed up your audit. |
| Leverage automation and collaboration | Modern tools minimize manual effort and support cross-team effectiveness in large organizations. |
| Track and close gaps | Timely, actionable metrics and remediation plans drive real improvement and trust. |
| Continuous improvement wins | Move beyond checkbox compliance to a culture of ongoing operational discipline. |

Understanding the information security audit lifecycle

Every mature audit program starts with a shared understanding of the process itself. Without that foundation, teams end up reinventing the wheel each cycle, burning time and creating inconsistent results.

The ISO 27001 audit process follows a structured lifecycle that includes planning, preparation, execution (document review, interviews, and controls testing), reporting findings such as nonconformities and opportunities for improvement, corrective actions, and follow-up closure. That sequence is not arbitrary. Each phase feeds the next, and skipping one typically means discovering its absence at the worst possible moment.

For teams managing IT environments more broadly, the picture is similarly structured. A common breakdown of IT security audits lists nine steps: defining scope and objectives, building an asset inventory, reviewing policies, conducting a risk assessment, running vulnerability scans, performing penetration testing, checking compliance, reporting and remediating, and establishing ongoing monitoring. Nine steps sounds like a lot, but most of the work is front-loaded in planning.

Here is how the major frameworks compare at a high level:

| Framework | Core phases | Primary output |
|---|---|---|
| ISO 27001 | Plan, Prepare, Audit, Report, Close | Corrective action plan |
| SOC 2 | Scoping, Readiness, Testing, Reporting | Type I or Type II report |
| NIST RMF | Prepare, Categorize, Select, Implement, Assess, Authorize, Monitor | Authorization decision, typically an Authorization to Operate (ATO) |

The key difference between these frameworks is not the phases themselves but the evidence standards and the audience for the final report. ISO 27001 drives internal discipline. SOC 2 builds external trust with clients. NIST RMF satisfies federal authorization bodies. Knowing which framework governs your audit shapes every decision downstream.

Practical execution across all three frameworks shares common activities:

- Document review: Policies, procedures, and prior audit records

- Interviews: Control owners, system administrators, and third-party contacts

- Technical testing: Vulnerability scans, configuration reviews, and penetration tests

- Evidence sampling: Access logs, change records, and incident reports

For a deeper look at how these frameworks align operationally, security review best practices and ISO 27001 vs SOC 2 comparisons are worth reviewing before you finalize your audit plan.

Preparing for your audit: Scope, controls, and evidence readiness

With the lifecycle in mind, the next step is thoughtful preparation. Scope definition is where most teams either set themselves up for a smooth audit or create months of pain.

For tech and finance organizations, aligning ISO, NIST, SOC 2, and PCI controls significantly reduces audit fatigue. The strategy is to map overlapping controls once and reuse that mapping across frameworks. A vendor management control that satisfies SOC 2's Common Criteria on vendor and business-partner risk (CC9.2) likely also maps to the supplier-relationship controls in ISO 27001's Annex A and to NIST SP 800-53. Documenting that mapping upfront means your team answers the same question once, not three times.
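The "map once, reuse everywhere" idea fits in a small data structure. The sketch below is a minimal version under stated assumptions: the internal control IDs are hypothetical, and the framework references (SOC 2 CC9.2, ISO 27001:2022 Annex A 5.19 and 5.21, NIST SP 800-53 SA-9 and SR-6, and so on) are illustrative examples to verify against the framework versions you are actually audited under.

```python
from collections import defaultdict

# Hypothetical internal control catalog: each internal control lists the framework
# requirements it satisfies. References are illustrative; confirm them against the
# framework versions actually in scope for your audit.
CONTROL_MAP = {
    "VM-01 Vendor risk assessment": {
        "SOC 2": ["CC9.2"],
        "ISO 27001:2022": ["A.5.19", "A.5.21"],
        "NIST SP 800-53": ["SA-9", "SR-6"],
    },
    "AC-01 Quarterly access review": {
        "SOC 2": ["CC6.2", "CC6.3"],
        "ISO 27001:2022": ["A.5.18"],
        "NIST SP 800-53": ["AC-2"],
    },
}


def index_by_requirement(control_map):
    """Invert the map so any framework requirement points back to its internal control(s)."""
    index = defaultdict(list)
    for control, frameworks in control_map.items():
        for framework, refs in frameworks.items():
            for ref in refs:
                index[(framework, ref)].append(control)
    return dict(index)


REQUIREMENT_INDEX = index_by_requirement(CONTROL_MAP)

# A question about ISO 27001 supplier relationships routes to the same control
# (and therefore the same evidence) as a SOC 2 or NIST question on vendor risk.
print(REQUIREMENT_INDEX[("ISO 27001:2022", "A.5.19")])  # ['VM-01 Vendor risk assessment']
```

Because each internal control carries a single evidence set, every framework reference that maps to it reuses that set rather than triggering a separate collection effort.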

Evidence readiness is where preparation becomes concrete. SOC 2 Type II audits test the Trust Services Criteria against evidence collected over an observation period, typically 3 to 12 months, covering access changes, incidents, and vendor assessments. A Type I report, by contrast, assesses control design at a single point in time. That window matters. If you only start collecting evidence when the auditor arrives, you are already behind.

Here is what a solid evidence readiness checklist looks like:

- Access logs: User provisioning, deprovisioning, and privileged access reviews

- Incident records: Tickets, post-mortems, and resolution timelines

- Change management logs: Approved changes with timestamps and approver details

- Vendor risk reviews: Third-party assessments, contracts, and SLA documentation

- Configuration baselines: Hardening standards and deviation records
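The checklist above can be turned into a simple coverage check. This sketch assumes every artifact in your evidence repository is tagged with one of the checklist categories and a collection date; it reports which categories have no artifact inside the observation window. The category labels and the (category, date) record shape are hypothetical.

```python
from datetime import date

# Hypothetical labels for the five checklist categories above.
REQUIRED_CATEGORIES = {
    "access_logs",
    "incident_records",
    "change_management",
    "vendor_risk_reviews",
    "configuration_baselines",
}


def coverage_gaps(artifacts, period_start, period_end):
    """Return checklist categories with no artifact collected inside the window.

    artifacts: iterable of (category, collected_on) pairs; window bounds are inclusive.
    """
    covered = {
        category
        for category, collected_on in artifacts
        if period_start <= collected_on <= period_end
    }
    return sorted(REQUIRED_CATEGORIES - covered)


artifacts = [
    ("access_logs", date(2025, 2, 3)),
    ("incident_records", date(2025, 5, 19)),
    ("change_management", date(2024, 11, 30)),  # before the window, so it does not count
]
print(coverage_gaps(artifacts, date(2025, 1, 1), date(2025, 6, 30)))
# ['change_management', 'configuration_baselines', 'vendor_risk_reviews']
```

Running a check like this monthly, rather than at audit kickoff, is what turns the observation window from a risk into a routine.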

Shadow IT and third-party risk management deserve special attention during scoping. Finance and tech organizations often have dozens of SaaS tools operating outside formal procurement. If those tools touch regulated data, they belong in scope. Discovering them mid-audit is far more disruptive than finding them during preparation.
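Scoping shadow IT is largely a set comparison. The sketch assumes you can export the SaaS apps observed in SSO or expense data, the list of formally procured apps, and a list of apps someone has tagged as touching regulated data; all app names here are hypothetical.

```python
def shadow_it_in_scope(observed_apps, approved_apps, regulated_data_apps):
    """Split unapproved SaaS apps into must-scope (touch regulated data) and review-later."""
    unapproved = set(observed_apps) - set(approved_apps)
    in_scope = sorted(unapproved & set(regulated_data_apps))
    review_later = sorted(unapproved - set(regulated_data_apps))
    return in_scope, review_later


# Hypothetical inputs, e.g. exported from SSO logs and the procurement system.
observed = ["crm-suite", "file-share-x", "survey-tool", "payroll-app"]
approved = ["crm-suite", "payroll-app"]
regulated = ["file-share-x", "payroll-app"]

in_scope, review_later = shadow_it_in_scope(observed, approved, regulated)
print(in_scope)      # ['file-share-x']
print(review_later)  # ['survey-tool']
```

The hard part in practice is the inputs, not the logic: the "touches regulated data" tag usually needs a short conversation with each app's business owner.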

Pro Tip: Build a living evidence repository that control owners update monthly, not just before audit season. A shared folder with timestamped, read-only logs dramatically reduces last-minute scrambles and strengthens evidence integrity.
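One way to approximate "timestamped, read-only" with only the Python standard library is to record each artifact's SHA-256 digest and UTC collection time in an append-only manifest, then clear the file's write permission bits. Treat this as a sketch: a permission bit is easy for an administrator to undo, so production repositories usually rely on storage-level immutability such as write-once (WORM) storage. The `manifest.jsonl` file name and the function names are hypothetical.

```python
import hashlib
import json
import os
import stat
from datetime import datetime, timezone
from pathlib import Path

MANIFEST_NAME = "manifest.jsonl"  # hypothetical manifest file name


def sha256_of(path):
    """Hash the file in chunks so large log exports never need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def register_evidence(path, repo_dir, owner):
    """Record digest, UTC collection time, and owner, then clear the file's write bits."""
    path = Path(path)
    entry = {
        "file": path.name,
        "sha256": sha256_of(path),
        "collected_at": datetime.now(timezone.utc).isoformat(),
        "owner": owner,
    }
    # Append-only manifest: one JSON object per line.
    with open(Path(repo_dir) / MANIFEST_NAME, "a", encoding="utf-8") as manifest:
        manifest.write(json.dumps(entry) + "\n")
    # Keep the existing read/execute bits; remove write for user, group, and others.
    mode = stat.S_IMODE(path.stat().st_mode)
    os.chmod(path, mode & ~(stat.S_IWUSR | stat.S_IWGRP | stat.S_IWOTH))
    return entry


def verify_evidence(path, expected_sha256):
    """True if the artifact still matches the digest recorded at collection time."""
    return sha256_of(path) == expected_sha256
```

At audit time, re-hashing each file with `verify_evidence` and comparing against its manifest entry gives a quick tamper-evidence check.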

Executing the audit: Techniques and tools for testing controls

Once preparations are complete, it is time to execute your audit with precision and efficiency. Execution is where plans meet reality, and the gap between the two is usually where findings live.

The NIST RMF Assess step uses SP 800-53A assessment procedures that cover three methods: examination of documents, interviews with personnel, and technical testing including scans and penetration tests. The output is a Security Assessment Report (SAR) that feeds the Authorizing Official's authorization decision. That three-method structure is a useful template regardless of which framework you are working under.

A practical execution sequence for most teams looks like this:

- Document review first: Confirm policies and procedures are current before testing systems against them.

- Control owner interviews: Validate that documented processes reflect actual operations.

- Automated vulnerability scanning: Run credentialed scans across in-scope systems.

- Penetration testing: Targeted testing for high-risk areas identified in the risk assessment.

- Configuration review: Compare live settings against approved baselines.

- Evidence validation: Confirm collected samples match the audit period and are tamper-evident.
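The configuration review step above reduces to a diff between an approved baseline and live settings. This sketch assumes both are available as flat key/value dictionaries, for example exported from a configuration management or cloud posture tool; the setting names are hypothetical.

```python
def config_drift(baseline, live):
    """Compare live settings with an approved baseline.

    Returns (drift, unexpected):
      drift: setting -> (expected, actual) for every baseline setting that differs,
             with actual set to None when the setting is missing on the live system.
      unexpected: sorted names of settings present live but absent from the baseline.
    """
    drift = {
        key: (expected, live.get(key))
        for key, expected in baseline.items()
        if live.get(key) != expected
    }
    unexpected = sorted(set(live) - set(baseline))
    return drift, unexpected


# Hypothetical settings for illustration.
baseline = {"ssh_password_auth": "no", "tls_min_version": "1.2", "audit_logging": "on"}
live = {"ssh_password_auth": "yes", "tls_min_version": "1.2", "debug_mode": "on"}

drift, unexpected = config_drift(baseline, live)
print(drift)       # {'ssh_password_auth': ('no', 'yes'), 'audit_logging': ('on', None)}
print(unexpected)  # ['debug_mode']
```

Each entry in `drift` is a candidate for the deviation records mentioned in the evidence checklist: either fix the setting or document an approved exception.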

Automation is a force multiplier here. Automated Security Control Assessment (ASCA) and GRC platforms enable continuous monitoring and evidence collection, significantly reducing the manual audit burden in large organizations. Instead of a point-in-time snapshot, you get a rolling view of control health.

Here is a quick comparison of manual versus automated execution approaches:

| Dimension | Manual approach | Automated approach |
|---|---|---|
| Evidence collection | Periodic, labor-intensive | Continuous, system-generated |
| Coverage | Sample-based | Near-comprehensive |
| Audit fatigue | High | Reduced |
| Cost at scale | Increases linearly | Relatively fixed |

For teams working toward SOC 2 reporting, understanding what auditors expect in a sample report helps calibrate your execution. And if you are selecting an external auditor, reviewing what SOC 2 auditors look for in SaaS environments will sharpen your preparation.

Pro Tip: Assign a single evidence owner per control domain, not per control. One person responsible for all access management evidence is more accountable and consistent than five people each handling one access log.
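That one-owner-per-domain rule is easy to check mechanically. This sketch assumes each control is tagged with a domain and that owners are assigned per domain; the domain names, control IDs, and people are hypothetical.

```python
from collections import defaultdict


def domain_owner_issues(domain_owners, control_domains):
    """Flag control domains with no evidence owner or with more than one.

    domain_owners: list of (domain, owner) assignments.
    control_domains: dict mapping control ID -> domain.
    """
    owners = defaultdict(set)
    for domain, owner in domain_owners:
        owners[domain].add(owner)

    issues = []
    # Check every domain that appears in either the control tags or the assignments.
    for domain in sorted(set(control_domains.values()) | set(owners)):
        count = len(owners.get(domain, ()))
        if count == 0:
            issues.append(f"{domain}: no evidence owner")
        elif count > 1:
            issues.append(f"{domain}: {count} owners, expected exactly one")
    return issues


# Hypothetical assignments and control tags.
assignments = [("access_management", "dana"), ("change_management", "lee"), ("change_management", "sam")]
controls = {"AC-01": "access_management", "CM-02": "change_management", "IR-01": "incident_response"}
print(domain_owner_issues(assignments, controls))
# ['change_management: 2 owners, expected exactly one', 'incident_response: no evidence owner']
```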

Reporting, remediation, and metrics: Measuring and closing the audit

With testing complete, the crucial next step is making results actionable and ensuring issues do not return. A well-structured audit report is not just a compliance artifact. It is a roadmap for operational improvement.

Effective audit reports organize findings by severity, map each finding to a specific control and framework requirement, and assign a named owner with a remediation deadline. Vague findings like "access controls need improvement" are not actionable. Specific findings like "17 accounts retain access to the payments system 30 days after offboarding" drive real change.
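A finding record can enforce those properties (severity, mapping to a control and framework requirement, a named owner, and a deadline) before the report goes out. The sketch below uses a dataclass; the field names, the four-level severity scale, and the example control reference are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical severity scale; a lower number means more urgent.
SEVERITY_ORDER = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class Finding:
    title: str
    severity: str          # one of SEVERITY_ORDER's keys
    control_id: str        # internal control the finding maps to
    framework_refs: list   # framework requirements, e.g. ["SOC 2 CC6.2"] (illustrative)
    owner: str             # a named person, not a team alias
    due: Optional[date]    # remediation deadline

    def problems(self):
        """Reasons this finding is not yet actionable; an empty list means it is."""
        issues = []
        if self.severity not in SEVERITY_ORDER:
            issues.append("unknown severity")
        if not self.control_id or not self.framework_refs:
            issues.append("not mapped to a control and framework requirement")
        if not self.owner:
            issues.append("no named owner")
        if self.due is None:
            issues.append("no remediation deadline")
        return issues


def report_order(findings):
    """Most severe first; within a severity, earliest deadline first (missing deadlines last)."""
    return sorted(
        findings,
        key=lambda f: (SEVERITY_ORDER.get(f.severity, len(SEVERITY_ORDER)), f.due or date.max),
    )


specific = Finding(
    title="17 accounts retain payments-system access 30 days after offboarding",
    severity="high", control_id="AC-01", framework_refs=["SOC 2 CC6.2"],
    owner="dana", due=date(2025, 7, 15),
)
vague = Finding("Access controls need improvement", "medium", "", [], "", None)
print(specific.problems())  # []
print(vague.problems())
# ['not mapped to a control and framework requirement', 'no named owner', 'no remediation deadline']
```

Gating the final report on an empty `problems()` list is one way to keep vague findings from reaching it.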

Metrics are what separate teams that improve from teams that repeat the same audit findings year after year. Commonly cited benchmarks include a Mean Time to Detect (MTTD) and Mean Time to Remediate (MTTR) of under 24 hours, patch compliance above 95%, 100% audit completion, and vulnerability recurrence below 5%. Track them across audit cycles: the trend, not any single snapshot, shows whether your program is actually improving.

| Metric | Target benchmark | Why it matters |
|---|---|---|
| MTTD | Under 24 hours | Faster detection limits breach impact |
| MTTR | Under 24 hours | Faster remediation shortens the exposure window |
| Patch compliance | Above 95% | Unpatched systems are among the most common attack vectors |
| Vulnerability recurrence | Below 5% | Recurrence signals systemic, not one-off, failures |
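Computing these benchmarks from raw records is mostly arithmetic, but the definitions matter. This sketch makes explicit assumptions that you should align with how your team already reports: MTTD runs from occurrence to detection, MTTR from detection to remediation, patch compliance is the share of in-scope assets fully patched, audit completion is the share of planned tests completed, and recurrence is the share of this cycle's findings already seen last cycle. Each target is treated as an inclusive threshold.

```python
from datetime import datetime, timedelta
from statistics import mean

# Benchmarks from the table above (assumed inclusive thresholds).
TARGETS = {
    "mttd_hours": 24,
    "mttr_hours": 24,
    "patch_compliance_pct": 95,
    "audit_completion_pct": 100,
    "vuln_recurrence_pct": 5,
}
LOWER_IS_BETTER = {"mttd_hours", "mttr_hours", "vuln_recurrence_pct"}


def _hours(delta):
    return delta.total_seconds() / 3600


def audit_metrics(incidents, patched_assets, total_assets, completed_tests, planned_tests,
                  current_vulns, previous_vulns):
    """incidents: list of (occurred, detected, remediated) datetimes.
    current_vulns / previous_vulns: sets of finding IDs (for example, CVE plus host) per cycle.
    """
    return {
        "mttd_hours": mean(_hours(detected - occurred) for occurred, detected, _ in incidents),
        "mttr_hours": mean(_hours(fixed - detected) for _, detected, fixed in incidents),
        "patch_compliance_pct": 100 * patched_assets / total_assets,
        "audit_completion_pct": 100 * completed_tests / planned_tests,
        "vuln_recurrence_pct": (
            100 * len(current_vulns & previous_vulns) / len(current_vulns) if current_vulns else 0.0
        ),
    }


def _misses(name, value):
    target = TARGETS[name]
    return value > target if name in LOWER_IS_BETTER else value < target


def missed_benchmarks(metrics):
    """Names of metrics on the wrong side of their benchmark."""
    return sorted(name for name, value in metrics.items() if _misses(name, value))


# Hypothetical cycle data.
t0 = datetime(2025, 3, 1, 8, 0)
incidents = [
    (t0, t0 + timedelta(hours=2), t0 + timedelta(hours=20)),
    (t0, t0 + timedelta(hours=6), t0 + timedelta(hours=40)),
]
metrics = audit_metrics(incidents, patched_assets=188, total_assets=200,
                        completed_tests=40, planned_tests=40,
                        current_vulns={"v1", "v2", "v3", "v4"}, previous_vulns={"v4", "v9"})
print(missed_benchmarks(metrics))  # ['mttr_hours', 'patch_compliance_pct', 'vuln_recurrence_pct']
```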

Edge cases deserve explicit attention in your report. Shadow IT discovery, third-party risks, and configuration drift are among the most common surprises, and 68% of intrusions in 2026 involve stolen NTLM or Kerberos credentials, which often trace back to unmanaged systems. Your report should include a section on out-of-scope risks discovered during the audit.

For ongoing third-party risk management, post-audit monitoring should include vendor reassessments triggered by contract renewals or security incidents, not just annual reviews.
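Event-driven reassessment can be expressed as a small rule: a vendor is due if a contract renewal is approaching, an incident has been reported since the last assessment, or the annual backstop has lapsed. The 60-day renewal lead time, the 365-day backstop, and the field names are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Vendor:
    name: str
    last_assessed: date
    contract_renewal: date
    last_incident: Optional[date] = None  # most recent security incident reported by the vendor


def reassessment_reasons(vendor, today, renewal_lead=timedelta(days=60),
                         backstop=timedelta(days=365)):
    """Why this vendor should be reassessed now; an empty list means it is not yet due."""
    reasons = []
    # Trigger 1 (assumed 60-day lead): contract renewal falls within the lead-time window.
    if today <= vendor.contract_renewal <= today + renewal_lead:
        reasons.append("contract renewal approaching")
    # Trigger 2: an incident was reported after the last assessment.
    if vendor.last_incident is not None and vendor.last_incident > vendor.last_assessed:
        reasons.append("security incident since last assessment")
    # Backstop: the annual review still applies regardless of events.
    if today - vendor.last_assessed >= backstop:
        reasons.append("annual review overdue")
    return reasons


# Hypothetical vendor record.
v = Vendor("payments-processor", last_assessed=date(2025, 1, 10),
           contract_renewal=date(2025, 8, 1), last_incident=date(2025, 4, 2))
print(reassessment_reasons(v, today=date(2025, 6, 15)))
# ['contract renewal approaching', 'security incident since last assessment']
```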

Common pitfalls and expert shortcuts for smoother audits

Even the best-run audits face recurring stumbling blocks. Knowing where teams consistently fail is half the battle.

The most common pitfalls in mid to large organizations include:

- Weak evidence chains: Logs that are editable, missing timestamps, or outside the audit period

- Misaligned teams: Security and compliance teams working from different control lists

- Third-party blind spots: Vendors with access to critical systems but no formal assessment on file

- Cloud configuration drift: Infrastructure that passes a point-in-time scan but drifts between audits

- Over-reliance on policy documents: Policies that describe ideal behavior but do not reflect what actually happens
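The first pitfall on that list, weak evidence chains, can be caught before the auditor finds it. This sketch assumes each evidence item is exported as a record with a timestamp (possibly missing) and a flag saying whether its storage is write-protected, and it flags each weakness named above. The record fields and item IDs are hypothetical.

```python
from datetime import datetime


def evidence_chain_issues(items, period_start, period_end):
    """Map each evidence item ID to the weaknesses found in it.

    items: list of dicts with keys "id", "timestamp" (datetime or None), "read_only" (bool).
    Items with no weaknesses are omitted from the result.
    """
    report = {}
    for item in items:
        issues = []
        ts = item.get("timestamp")
        if ts is None:
            issues.append("missing timestamp")
        elif not (period_start <= ts <= period_end):
            issues.append("outside audit period")
        if not item.get("read_only", False):
            issues.append("editable")
        if issues:
            report[item["id"]] = issues
    return report


# Hypothetical audit period and evidence records.
start, end = datetime(2025, 1, 1), datetime(2025, 6, 30, 23, 59, 59)
items = [
    {"id": "chg-104", "timestamp": datetime(2025, 3, 4, 9, 30), "read_only": True},
    {"id": "iam-export", "timestamp": None, "read_only": True},
    {"id": "fw-rules", "timestamp": datetime(2024, 12, 15), "read_only": False},
]
print(evidence_chain_issues(items, start, end))
# {'iam-export': ['missing timestamp'], 'fw-rules': ['outside audit period', 'editable']}
```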

Expert shortcuts that consistently pay off include mapping controls once across all applicable frameworks, using shared playbooks that both security and compliance teams maintain together, and storing all evidence in timestamped, read-only repositories from day one.

The CISA FISMA maturity model reinforces a critical insight: audits prove operational discipline, not just policy compliance. The model rates programs on five levels (Ad Hoc, Defined, Consistently Implemented, Managed and Measurable, and Optimized), and organizations that climb those levels consistently outperform those treating audits as annual fire drills. Continuous monitoring catches the drift that point-in-time assessments miss.

The SOC 2 compliance guide for tech and finance teams offers additional detail on building that repeatable process within a specific regulatory context.

Pro Tip: Run a quarterly "mini-audit" of your top 10 highest-risk controls. It takes a fraction of the time of a full audit and surfaces drift before it becomes a finding.
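Picking the "top 10 highest-risk controls" needs a scoring rule. This sketch assumes a simple likelihood times impact score on 1 to 5 scales, a common convention rather than one this guide prescribes; the control IDs and scores are hypothetical.

```python
def mini_audit_selection(controls, n=10):
    """Pick the n controls with the highest likelihood x impact score.

    controls: dict mapping control ID -> (likelihood, impact), each on an assumed 1-5 scale.
    Ties are broken alphabetically by control ID so the selection is stable quarter to quarter.
    """
    ranked = sorted(
        ((likelihood * impact, control_id) for control_id, (likelihood, impact) in controls.items()),
        key=lambda pair: (-pair[0], pair[1]),
    )
    return [control_id for _, control_id in ranked[:n]]


# Hypothetical risk ratings.
controls = {
    "AC-01 access review": (4, 5),
    "CM-02 change approval": (3, 4),
    "VM-01 vendor assessment": (4, 4),
    "BK-01 backup restore test": (2, 5),
    "LG-01 log retention": (4, 3),
}
print(mini_audit_selection(controls, n=3))
# ['AC-01 access review', 'VM-01 vendor assessment', 'CM-02 change approval']
```

Re-scoring each quarter before selecting keeps the mini-audit focused on where risk has moved, not where it was at the last full audit.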

A security leader's perspective: Operational discipline is your true audit outcome

Here is something most audit guides will not tell you directly: the certificate or report is not the point. The point is whether your organization actually operates securely between audits.

Too many teams treat compliance as a performance. They clean up evidence repositories in the weeks before an auditor arrives, run access reviews they have been deferring for months, and document processes that have not been followed in practice. Auditors who have been doing this work for years can spot the difference between a team that lives its controls and one that stages them.

Standards alignment matters, but it only delivers real trust when it reflects genuine operational rigor. The organizations that build lasting client and regulatory trust are the ones where audit preparation is mostly just documentation, because the controls are already running. That shift from reactive to embedded takes time, but it starts with treating every audit finding as a process failure worth fixing permanently, not just a checkbox to close before the next cycle.

Streamline your next audit with advanced automation

You now have a proven framework for running tighter, faster, and more collaborative security audits. The next step is putting the right tools behind that process.

Skypher's security questionnaire automation tool is built specifically for tech and finance teams that need to move through security reviews without sacrificing accuracy. With AI-powered evidence management, real-time collaboration, and integrations across 40-plus TPRM platforms, Skypher reduces the manual overhead that slows audit cycles down. Whether you are responding to a vendor questionnaire or preparing for a full SOC 2 audit, the Skypher platform gives your team the speed and structure to stay ahead of every review.

Frequently asked questions

How often should mid to large organizations conduct information security audits?

IT security audits should be conducted every 6 to 12 months for most tech and finance organizations, with frequency adjusted based on industry risk profile and specific regulatory requirements.

What are the most important metrics to track during a security audit?

The most critical metrics are MTTD and MTTR (both ideally under 24 hours), patch compliance above 95%, 100% audit completion, and vulnerability recurrence below 5%.

How can we reduce audit fatigue and improve collaboration?

Aligning controls across ISO, NIST, SOC 2, and PCI eliminates duplicate work, while ASCA and GRC automation handles continuous evidence collection so teams are not rebuilding the same artifacts each cycle.

What are common areas where organizations fail audits?

Typical failures cluster around vendor management gaps, incomplete vulnerability management, missing access reviews, and evidence integrity issues such as editable or out-of-period logs.

Which audit evidence holds up best under scrutiny?

Timestamped, read-only system logs, independent third-party assessments, and direct samples of access changes and incident records are consistently the most defensible forms of audit evidence.