TL;DR:

- Modern automation integrates security reviews into continuous workflows, enabling faster and more scalable validation.

- Context-aware automation reduces alert fatigue by prioritizing findings based on exploitability and business impact.

- Human oversight remains essential for ambiguous, high-impact, or regulatory-sensitive findings, ensuring decision accuracy.

Automating security reviews sounds straightforward until your team is still drowning in backlogs six months after deploying three new scanning tools. The real problem is not a shortage of technology. It's that most organizations treat automation as a faster version of what they already do manually: more scans, more alerts, more noise. Modern compliance and risk pressures have made that approach a liability. This guide breaks down why context-aware, workflow-integrated automation is fundamentally different, what pitfalls to avoid, and how finance and tech teams can build review processes that are both defensible and sustainable.

Table of Contents

- The evolution: From manual reviews to context-aware automation

- Why context and correlation matter: Beyond just more scanning

- Human-in-the-loop: Where automation stops (and experts step in)

- Automation for compliance and auditability: Real-world gains in finance and tech

- Why smart automation beats 'just more tools': Hard-won lessons

- Ready to automate your security reviews?

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Continuous integration | Automated security reviews shift validation from occasional checks to continuous, workflow-embedded assurance. |
| Context-driven efficiency | Correlating findings with business context increases risk reduction and reduces alert fatigue. |
| Human oversight critical | Retaining expert signoff for key decisions prevents errors from ambiguous or high-impact findings. |
| Compliance advantage | Automation supplies ongoing, defensible evidence, enabling quicker and more reliable audits. |

The evolution: From manual reviews to context-aware automation

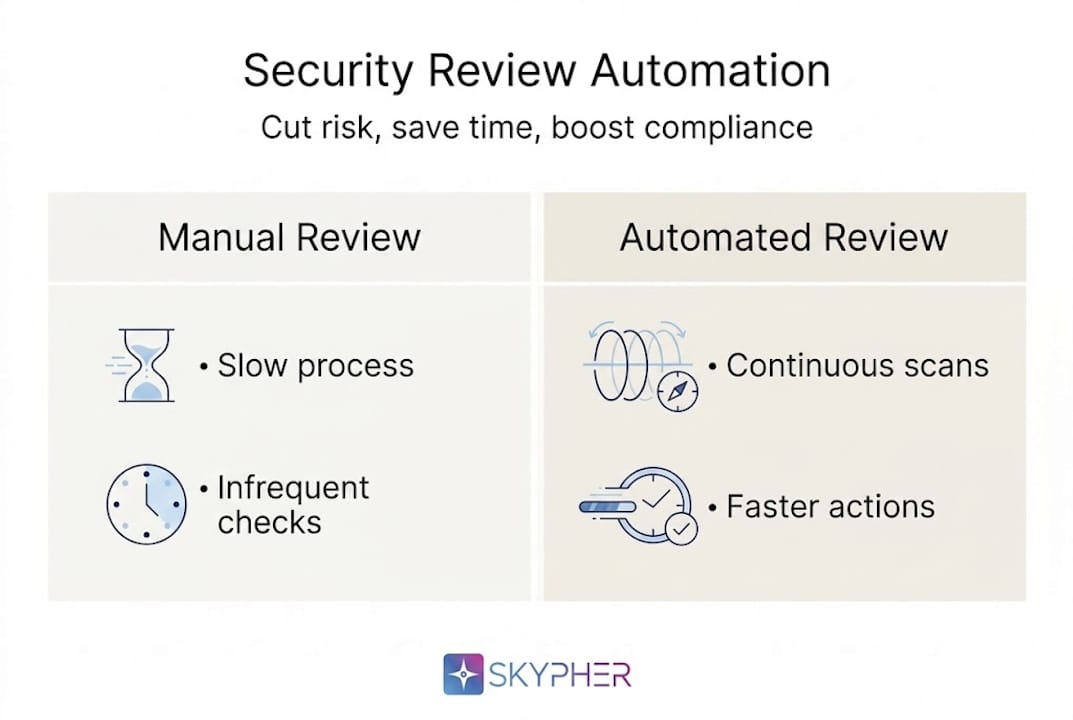

Traditional security reviews were built for a slower era. A team would schedule a point-in-time assessment, collect evidence, review findings manually, and then push a report upstream. By the time stakeholders saw the results, the codebase or infrastructure had already changed. That lag is not just inefficient. It creates real exposure windows that attackers can exploit.

Modern automation changes the fundamental rhythm of review. Instead of periodic checkpoints, security reviews become continuous events woven into DevSecOps and CI/CD pipelines. Every pull request, every deployment, every configuration change triggers a validation step. The methodology shifts from point-in-time manual checks to continuous, workflow-integrated validation, which is the core principle behind effective automation.
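To make the per-change validation step concrete, here is a minimal sketch of a pipeline gate. It assumes a scanner has already produced a list of findings for the change; the `ScanResult` type, the severity names, and the `gate_change` function are all hypothetical and not tied to any particular CI system or scanner.

```python
from dataclasses import dataclass

# Hypothetical severity scale; a real scanner's levels may differ.
SEVERITY_ORDER = {"low": 0, "medium": 1, "high": 2, "critical": 3}


@dataclass(frozen=True)
class ScanResult:
    rule_id: str
    severity: str  # one of the keys in SEVERITY_ORDER
    path: str


def gate_change(results: list[ScanResult], fail_at: str = "high") -> int:
    """Return a process exit code: 0 lets the change proceed, 1 blocks it."""
    threshold = SEVERITY_ORDER[fail_at]
    blocking = [r for r in results if SEVERITY_ORDER[r.severity] >= threshold]
    for r in blocking:
        print(f"BLOCKING {r.severity}: {r.rule_id} in {r.path}")
    return 1 if blocking else 0
```

A pipeline step could pass the return value to `sys.exit`, so that a pull request with a blocking finding fails its check while lower-severity findings only get reported.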

Here is a direct comparison of where the two approaches land:

| Dimension | Manual review | Automated review |
|---|---|---|
| Frequency | Quarterly or annual | Continuous, per change |
| Time to finding | Days to weeks | Minutes to hours |
| Evidence packaging | Manual, inconsistent | Automated, audit-ready |
| Bottleneck point | Reviewer availability | Tool configuration |
| Scalability | Low | High |
| Compliance readiness | Reactive | Proactive |

The drivers pushing teams toward automated compliance are not abstract. Risk management frameworks demand more frequent validation. Auditors now expect real-time evidence. Workforce constraints mean security teams cannot manually review everything at scale.

When done right, automation delivers measurable outcomes:

- Shorter validation cycles across code, infrastructure, and third-party components

- Fewer review bottlenecks caused by reviewer availability or scheduling

- Faster remediation because findings surface earlier in the development lifecycle

- Consistent evidence trails that satisfy both internal and external audit requirements

- Better cross-team collaboration between security, engineering, and compliance functions

The shift is not just operational. It is strategic. Teams that automate effectively stop being a gate and start being a guardrail.

Why context and correlation matter: Beyond just more scanning

Here is where many automation programs quietly fail. Security tools multiply, dashboards fill with findings, and engineers start ignoring alerts because most of them are noise. That pattern has a name: alert fatigue. And it is one of the most damaging outcomes of poorly designed automation.

Automation is most effective when it correlates findings with context: exploitability, runtime exposure, and actual business impact. Without that layer, you are just generating more work dressed up as efficiency.

Consider two organizations at similar scale. The first deploys five scanning tools and routes every finding directly to an engineering backlog. Within three months, the backlog holds thousands of items, developers treat the security queue as a low-priority distraction, and mean time to remediation (MTTR, the average time it takes to fix a confirmed issue) stretches to weeks. The second organization instruments the same tools but adds a correlation layer that tags findings by exploitability score, maps them to business-critical assets, and filters runtime exposure. Their backlog is a fraction of the size and MTTR drops significantly.
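The correlation layer in the second organization could look something like the sketch below. Everything here is an assumption made for illustration: the field names, the 0-to-1 scales, the exposure weights, the multiplicative scoring formula, and the `ASSET_CRITICALITY` inventory are hypothetical, and a real program would tune or replace each of them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    finding_id: str
    asset: str
    exploitability: float   # hypothetical normalized score, 0.0 to 1.0
    internet_exposed: bool  # hypothetical runtime-exposure signal


# Hypothetical asset inventory: asset name -> business criticality, 0.0 to 1.0
ASSET_CRITICALITY = {"payments-api": 1.0, "internal-wiki": 0.2}


def priority(finding: Finding, criticality: dict[str, float]) -> float:
    """Combine exploitability, business criticality, and runtime exposure."""
    # Unknown assets get a middle weight so they are not silently ranked last.
    crit = criticality.get(finding.asset, 0.5)
    exposure = 1.0 if finding.internet_exposed else 0.4
    return round(finding.exploitability * crit * exposure, 3)


def triage(
    findings: list[Finding],
    criticality: dict[str, float],
    threshold: float = 0.3,
) -> list[tuple[float, Finding]]:
    """Drop findings below the threshold and sort the rest, highest score first."""
    scored = [(priority(f, criticality), f) for f in findings]
    kept = [(score, f) for score, f in scored if score >= threshold]
    return sorted(kept, key=lambda pair: pair[0], reverse=True)
```

With these weights, a highly exploitable finding on an internet-facing payments service stays in the queue, while the same finding on a low-criticality internal wiki falls below the threshold. Only what survives `triage` would reach the engineering backlog.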

| Metric | Detection-heavy automation | Context-driven automation |
|---|---|---|
| Alert volume | Very high | Filtered and prioritized |
| False positive rate | High | Low |
| MTTR | Weeks | Days or hours |
| Developer trust | Erodes quickly | Builds over time |
| Security team load | Increases | Manageable |

Implementing automation as 'more scanning' without context increases alert fatigue and erodes developer trust, which is exactly the opposite of what security teams need.

"The question is not how many findings you can surface. It is whether your team can act on them. Context is what turns data into decisions." — Application security practitioner

Learn more about how AI transforms security reviews to prioritize what actually matters rather than generating raw volumes of output.

Pro Tip: Bake context prioritization into your automation stack from day one. Retrofitting it later means re-engineering workflows that engineers have already adapted to, which is a painful and politically difficult process.

The security review best practices that separate effective programs from struggling ones almost always come down to this: correlation over coverage.

Human-in-the-loop: Where automation stops (and experts step in)

Automation handles volume exceptionally well. It does not handle nuance.

When a scanner flags an access control issue on a system that happens to be under active legal review, the right response is not automatic ticket creation. It requires a human who understands the business context, the regulatory exposure, and the downstream consequences of any action taken. That kind of judgment is not something a rule engine produces reliably.

Edge cases and expert nuance are the reason human oversight gates remain essential, even in highly automated environments. The goal is not to eliminate human involvement but to redirect it toward decisions that actually require it.

Knowing when to bring in human expertise is itself a design decision. The following situations consistently require human review:

- Ambiguous risk findings where exploitability depends on undocumented system behavior

- Legal or regulatory exposure linked to a specific finding or affected asset

- Business-critical system changes that carry operational or reputational risk

- Novel vulnerability classes that fall outside the rules or training data the automation tool relies on

- High-impact findings that could trigger breach notification requirements

Imagine a scenario where an automated system misclassifies a finding as low priority because it does not recognize the asset's role in customer data processing. A human reviewer would catch that. Without a human gate, the finding sits unaddressed while the exposure compounds. That is how avoidable incidents become regulatory events.

Pro Tip: Design your process with automated triage as the first layer and mandatory human signoff for any finding tagged as critical or novel. The automation does the heavy lifting, but the human closes the loop where it counts.
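One way to express that two-layer design is a small routing function that runs after automated triage. This is a sketch under stated assumptions: the `TriagedFinding` fields and the `Route` values are hypothetical, and the rule that regulated assets also require signoff is borrowed from the list of human-review situations above rather than from any specific tool.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_TICKET = "auto_ticket"
    HUMAN_SIGNOFF = "human_signoff"


@dataclass(frozen=True)
class TriagedFinding:
    finding_id: str
    severity: str          # e.g. "low", "medium", "high", "critical"
    known_class: bool      # False when the finding matches no known rule family
    regulated_asset: bool  # True when the asset carries legal or regulatory exposure


def route(finding: TriagedFinding) -> Route:
    """Send critical, novel, or regulated findings to a human; automate the rest."""
    needs_human = (
        finding.severity == "critical"
        or not finding.known_class
        or finding.regulated_asset
    )
    return Route.HUMAN_SIGNOFF if needs_human else Route.AUTO_TICKET
```

Keeping the gate as an explicit policy function, rather than burying it in scanner configuration, makes it easier to review and to show an auditor which findings can never bypass a human.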

The best automation workflows blend AI pre-triage with policy-driven human gates. Explore automated review cycles that are designed with this balance built in, so neither side of the equation is left doing work it is not suited for.

Automation for compliance and auditability: Real-world gains in finance and tech

For regulated industries, automation is not just a productivity tool. It is a compliance infrastructure decision.

Financial services firms operating under SOC 2, PCI DSS, or ISO 27001 frameworks cannot rely on annual assessments to demonstrate ongoing control effectiveness. Auditors increasingly expect continuous evidence, not snapshots. Automation makes that expectation achievable at scale. Fortune 1000 financial organizations have moved to automated security validation specifically to meet these regulatory demands and strengthen cyber resilience.

Here is how the process typically flows in a mature automated compliance program:

- Evidence collection runs continuously, capturing logs, configurations, and access records without manual intervention

- Continuous validation checks those evidence artifacts against defined control requirements on an ongoing basis

- Real-time reporting surfaces compliance gaps as they appear, not weeks later during a scheduled review

- Faster audits become possible because evidence packages are pre-built, organized, and reviewable on demand
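The continuous validation and real-time reporting steps above can be sketched as a check that compares the newest evidence for each control against a freshness limit. The control IDs, the `MAX_AGE` catalog, and the `Evidence` fields are invented for this example and do not correspond to any specific framework's control numbering.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Evidence:
    control_id: str
    collected_at: datetime
    passed: bool


# Hypothetical control catalog: control ID -> maximum allowed evidence age
MAX_AGE = {"AC-01": timedelta(days=1), "LOG-03": timedelta(days=7)}


def compliance_gaps(evidence: list[Evidence], now: datetime) -> dict[str, str]:
    """Report each control whose newest evidence is missing, stale, or failing."""
    latest: dict[str, Evidence] = {}
    for item in evidence:
        current = latest.get(item.control_id)
        if current is None or item.collected_at > current.collected_at:
            latest[item.control_id] = item

    gaps: dict[str, str] = {}
    for control_id, max_age in MAX_AGE.items():
        item = latest.get(control_id)
        if item is None:
            gaps[control_id] = "missing"
        elif now - item.collected_at > max_age:
            gaps[control_id] = "stale"
        elif not item.passed:
            gaps[control_id] = "failing"
    return gaps


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    sample = [Evidence("AC-01", now - timedelta(days=3), True)]
    print(compliance_gaps(sample, now))
```

Run on a schedule, a check like this surfaces a gap when evidence goes stale rather than weeks later during audit preparation.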

"We used to spend three weeks preparing for an audit. Now the evidence is always current and the process takes days." — Security operations lead, financial services firm

Supply chain and third-party risk management benefit just as directly. When vendor security validations are automated, your team can assess more vendors more frequently without adding headcount. That matters enormously in a threat environment where compliance automation has become a baseline expectation for enterprise partners.

The operational drag that compliance creates for security teams (the manual evidence requests, the status tracking, the follow-up emails) shrinks significantly when automation handles the routine work. That frees your experts to focus on what efficient security reviews are actually designed to produce: risk reduction, not paperwork.

Why smart automation beats 'just more tools': Hard-won lessons

Here is an uncomfortable observation from real automation deployments: most programs that fail do not fail because of technology. They fail because of integration gaps, workflow mismatches, and a breakdown of trust between engineering, security, and compliance teams.

When security teams add tools without co-designing workflows with the engineers who have to act on findings, the result is predictable. Developers see the alerts as someone else's problem. Security teams see the backlog as engineering resistance. Both sides are partially right, and neither side wins.

What actually works is starting with the pain point, not the tool. Which part of your review cycle creates the most friction? Start there. Automate that specific step. Measure the outcome. Then move to the next bottleneck. Treating automation as a continuous improvement effort rather than a one-time deployment changes everything about how teams adopt and sustain it.

The other piece most teams miss is evidence packaging. Automation that surfaces findings but does not package them for audit consumption creates a second manual workload downstream. Building audit-ready output into the automation design from the start is what separates programs that scale from those that plateau. Explore how efficient security reviews are structured to deliver that kind of end-to-end value, not just detection coverage.
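As one illustration of audit-ready output, the sketch below builds a manifest that records a SHA-256 digest and size for every collected artifact, so a reviewer can later confirm that nothing changed after collection. The `package_evidence` function, the manifest layout, and the artifact names are assumptions for this example, not a standard format.

```python
import hashlib
import json


def package_evidence(artifacts: dict[str, bytes], collected_at: str) -> str:
    """Build a JSON manifest with a digest and size for each evidence artifact."""
    entries = [
        {
            "name": name,
            "sha256": hashlib.sha256(data).hexdigest(),
            "bytes": len(data),
        }
        for name, data in sorted(artifacts.items())  # stable ordering for diffs
    ]
    manifest = {"collected_at": collected_at, "artifacts": entries}
    return json.dumps(manifest, indent=2, sort_keys=True)


if __name__ == "__main__":
    # Hypothetical artifacts; real ones would be read from logs or config exports.
    sample = {
        "access-review.csv": b"user,role\nalice,admin\n",
        "firewall.cfg": b"deny all\n",
    }
    print(package_evidence(sample, "2024-01-10T00:00:00Z"))
```

Generating the manifest at collection time, rather than at audit time, is what removes the second manual workload described above.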

Bridging security, engineering, and audit requires shared language and shared outcomes. When all three functions can see the same evidence in real time, trust builds fast.

Ready to automate your security reviews?

If the principles in this guide resonate, the next step is finding a platform built to operationalize them. Skypher's AI-driven tools are designed specifically for security and compliance teams in tech and finance that need to move faster without sacrificing accuracy or defensibility.

With security questionnaire automation that handles evidence collection, context correlation, and audit-ready packaging, Skypher removes the manual overhead that slows most programs down. The AI recommendation engine helps your team prioritize findings intelligently, integrates with your existing stack, and keeps compliance evidence current without manual intervention. It is purpose-built for the workflows that matter most to regulated organizations.

Frequently asked questions

What are the primary benefits of automating security reviews?

Automation delivers continuous validation and efficiency, reduces manual bottlenecks, and improves audit readiness while freeing security experts for high-impact tasks that require judgment rather than repetition.

How does automation help with compliance in regulated industries?

Automation provides ongoing evidence and reporting, making compliance checks faster, more reliable, and defensible in audits. In leading financial organizations, continuous validation has replaced periodic assessments, delivering real-time evidence that satisfies modern regulatory expectations.

Why can't security reviews be fully automated?

Not all findings fit preset rules. Expert judgment remains essential for ambiguous risks, legal exposure, and strategic decisions that depend on business context that automation tools cannot interpret reliably.

How does context-aware automation reduce alert fatigue?

By correlating findings with exploitability, business impact, and runtime exposure, context-aware automation helps teams prioritize real risks and filter out noise. Context-based prioritization reduces alert backlogs and restores developer trust in the security process.