TL;DR:

- Effective security reviews assess vendor cybersecurity posture to reduce third-party risks and ensure compliance.

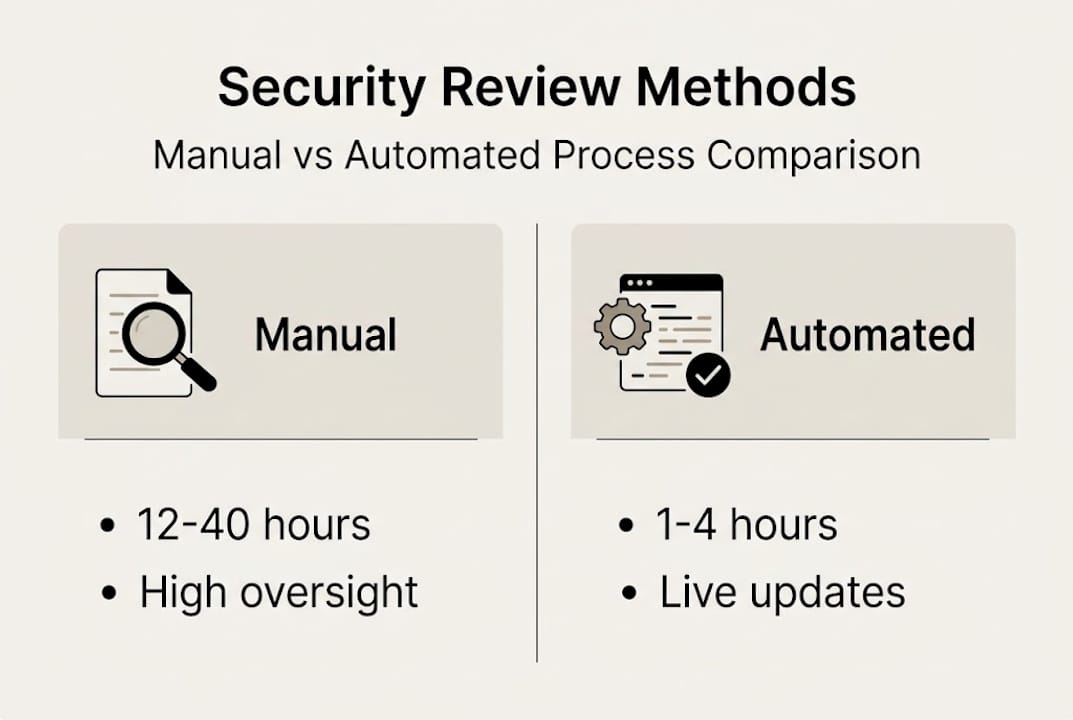

- Automation cuts questionnaire completion time from 12 to 40 hours down to 1 to 4 hours, making continuous monitoring practical.

- Moving to ongoing validation and live evidence improves security posture and minimizes outdated or false information.

Security reviews are often dismissed as tedious paperwork, but that framing is dangerously wrong. More than half of organizations (54%) have experienced a third-party breach, and weak or outdated review practices are a leading cause. For compliance officers and risk managers at tech and finance firms, the review process is one of the most direct levers you have for reducing vendor-related exposure. This article covers what effective security reviews look like, how automation changes the process, where most teams fall short, and what you can do right now to build a faster, more defensible process.

Table of Contents

- What are security reviews and why do they matter?

- How security reviews are conducted: From manual to automated

- Key challenges in security reviews: Accuracy, complexity, and scale

- Maximizing security review impact: Continuous monitoring and knowledge management

- Efficiency wins: Quantifiable benefits of modern security reviews

- The uncomfortable truth: Why most security reviews fail (and how to fix them)

- Take your security reviews to the next level

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Security reviews cut real risks | Thorough, well-designed security reviews help prevent costly third-party breaches. |
| Automation slashes response time | AI-enabled tools can reduce the time to complete a security questionnaire from 12 to 40 hours to as little as 1 to 4 hours. |
| Continuous validation is crucial | Ongoing, evidence-based verification keeps review responses aligned with the controls actually in place. |
| Optimize for scale and audit | Pre-built Trust Centers and knowledge management systems streamline compliance for large organizations. |

What are security reviews and why do they matter?

A security review is a structured assessment of a third party's cybersecurity posture and risk profile. You're essentially asking: does this vendor, partner, or supplier meet the security standards your organization requires? The process typically involves questionnaires, evidence requests, and documentation reviews aligned to recognized frameworks.

Common frameworks and standardized questionnaires referenced in security reviews include:

- SOC 2 for service organization controls

- ISO 27001 for information security management

- SIG (Standardized Information Gathering) for detailed vendor risk profiling

- CAIQ (Consensus Assessments Initiative Questionnaire) for cloud security

These aren't arbitrary checklists. Anchoring a review to recognized frameworks such as SOC 2 and ISO 27001 gives you a defensible basis for judging a vendor's security posture. Done well, reviews protect your organization from inheriting a vendor's vulnerabilities and help you satisfy regulatory obligations before an auditor asks.

"A security review is not just about checking boxes. It's about understanding whether your vendors can actually protect the data and systems they touch."

The business value is real. Effective reviews reduce third-party risk, build trust with clients and regulators, and create documented proof of due diligence. Yet many teams still treat them as a compliance formality rather than a risk reduction tool. That mindset is exactly where exposure starts. For a sharper look at how to run these well, security review best practices offer a practical starting point.

One persistent misconception is that thorough reviews slow business down. In reality, a poorly run review that misses a critical vendor gap costs far more in breach response, regulatory fines, and reputational damage than the hours spent doing it right.

How security reviews are conducted: From manual to automated

The traditional security review process follows a predictable, labor-heavy pattern. Your team sends a questionnaire, the vendor fills it out manually, you request missing evidence, they respond, and you reconcile everything before reaching a conclusion. Repeat this across dozens of vendors and you have a serious capacity problem.

Here's how a typical manual review unfolds step by step:

- Identify the vendor and applicable frameworks

- Send the appropriate questionnaire (SIG, CAIQ, custom)

- Collect and organize vendor responses

- Request supporting evidence and documentation

- Validate responses against policies and controls

- Score, document, and communicate findings
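Parts of the workflow above can be modeled in a few lines. The sketch below, in Python, covers steps 1, 4, and 5: it records which frameworks are in scope for a vendor, works out which evidence is still outstanding, and marks the review ready for validation only when nothing is missing. The `REQUIRED_EVIDENCE` mapping, the evidence names, and the `VendorReview` class are hypothetical illustrations, not a standard schema; real evidence requirements depend on your policies and the vendor's risk tier.

```python
from dataclasses import dataclass, field

# Hypothetical mapping from framework or questionnaire to evidence a reviewer
# might request. Real requirements depend on your policies and the vendor's risk tier.
REQUIRED_EVIDENCE: dict[str, set[str]] = {
    "SOC 2": {"soc2_type2_report", "bridge_letter"},
    "ISO 27001": {"iso27001_certificate", "statement_of_applicability"},
    "CAIQ": {"caiq_response"},
}


@dataclass
class VendorReview:
    vendor: str
    frameworks: list[str]                            # step 1: frameworks in scope
    received: set[str] = field(default_factory=set)  # evidence collected so far

    def missing_evidence(self) -> set[str]:
        """Step 4: evidence still to request for the frameworks in scope."""
        required: set[str] = set()
        for framework in self.frameworks:
            required |= REQUIRED_EVIDENCE.get(framework, set())
        return required - self.received

    def is_ready_for_validation(self) -> bool:
        """Step 5 (validation) starts only when nothing is outstanding."""
        return not self.missing_evidence()


review = VendorReview("Example Vendor", ["SOC 2", "CAIQ"], received={"soc2_type2_report"})
print(sorted(review.missing_evidence()))  # ['bridge_letter', 'caiq_response']
```

Keeping the gap calculation explicit means the evidence request in step 4 is generated from the scope decided in step 1, rather than from memory.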

Manual security questionnaires take 12 to 40 hours to complete, while automation reduces this to 1 to 4 hours. That's not a marginal improvement. It's a structural shift in how your team spends its time.

Pro Tip: Before adopting any automation tool, audit your existing knowledge base. AI tools are only as accurate as the documentation they draw from. Clean, current policies feed better outputs.

| Method | Average completion time | Consistency | Human oversight needed |
|---|---|---|---|
| Manual | 12 to 40 hours | Variable | High |
| Semi-automated | 4 to 8 hours | Moderate | Medium |
| Fully automated | 1 to 4 hours | High | Low to medium |

AI-driven questionnaire automation works by pulling pre-approved answers from a validated knowledge base, mapping them to incoming questions, and flagging anything that needs human review. The result is faster turnaround without sacrificing accuracy, as long as the underlying content is maintained. For teams new to this approach, AI essentials for security questionnaires covers the foundational concepts clearly.
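A minimal sketch of that retrieve-and-flag loop, assuming a small in-memory knowledge base. It uses token-overlap (Jaccard) similarity as a simple stand-in for the semantic matching a production tool would likely use; `KNOWLEDGE_BASE`, `REVIEW_THRESHOLD`, and `suggest` are hypothetical names, and the 0.6 threshold is an arbitrary starting point, not a recommendation.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical pre-approved answers; in practice these live in a maintained knowledge base.
KNOWLEDGE_BASE = {
    "Do you encrypt customer data at rest?":
        "Yes. Customer data is encrypted at rest using AES-256.",
    "Do you enforce multi-factor authentication for employees?":
        "Yes. MFA is required for all workforce accounts.",
}

REVIEW_THRESHOLD = 0.6  # assumption: tune against your own question history


def tokens(text: str) -> set[str]:
    """Lowercase alphanumeric tokens, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of tokens the two questions have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


@dataclass
class Suggestion:
    question: str
    answer: Optional[str]  # None when the match is too weak to auto-fill
    confidence: float
    needs_review: bool


def suggest(question: str) -> Suggestion:
    """Map an incoming question to the closest pre-approved answer, or flag it."""
    q = tokens(question)
    best_answer, best_score = None, 0.0
    for kb_question, kb_answer in KNOWLEDGE_BASE.items():
        score = jaccard(q, tokens(kb_question))
        if score > best_score:
            best_answer, best_score = kb_answer, score
    needs_review = best_score < REVIEW_THRESHOLD
    # Weak matches go to a human instead of being auto-filled.
    return Suggestion(question, None if needs_review else best_answer, best_score, needs_review)


print(suggest("Do you encrypt customer data at rest?").needs_review)  # False
print(suggest("Is customer data encrypted at rest?").needs_review)    # True
```

A rephrased question such as "Is customer data encrypted at rest?" scores only about 0.44 against the stored wording, so it is routed to a reviewer instead of being auto-filled. That is the conservative failure mode you want: a low score costs a few minutes of human time, while a wrong auto-filled answer propagates.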

Key challenges in security reviews: Accuracy, complexity, and scale

Even with automation in place, compliance teams run into serious obstacles. The challenges aren't just technical. They're organizational and structural.

The most common pain points include:

- Large, custom questionnaires that don't map cleanly to your existing answers

- Portal-based formats on platforms like OneTrust or ServiceNow that require manual data entry

- Stale documentation where policies have changed but answers haven't been updated

- Inconsistent ownership where no single team member is accountable for accuracy

- Automation errors that propagate incorrect answers across multiple submissions

Custom questionnaires with more than 300 questions and portal-based formats are often treated as edge cases, but they create serious review hurdles and aren't rare exceptions. In enterprise environments, they're the norm.

| Factor | Streamlined approach | Exhaustive approach |
|---|---|---|
| Speed | Fast, risk of gaps | Slow, thorough |
| Accuracy | Depends on knowledge base quality | Higher if well-documented |
| Scalability | Strong | Limited |
| Audit defensibility | Moderate | High |

The debate between streamlined and exhaustive reviews is real. Streamlined reviews move faster but risk missing nuance. Exhaustive reviews catch more but create bottlenecks. The answer isn't to pick one. It's to use assessment validation techniques to prioritize depth where risk is highest and speed where it isn't. Teams handling complex reviews with AI are finding that smart triage, not brute-force effort, is what scales.
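One way to make that triage explicit is to score each vendor on a few risk factors and map the total to a review depth. The sketch below assumes three hypothetical 1-to-3 ratings (data sensitivity, system access, business criticality) and illustrative thresholds; any vendor handling the most sensitive data gets an exhaustive review regardless of its other scores.

```python
from enum import Enum


class Depth(Enum):
    STREAMLINED = "streamlined"  # e.g. Trust Center documents plus a short questionnaire
    STANDARD = "standard"
    EXHAUSTIVE = "exhaustive"    # e.g. full questionnaire plus evidence validation


def review_depth(data_sensitivity: int, system_access: int, criticality: int) -> Depth:
    """Map hypothetical 1-3 risk ratings to a review depth (thresholds are illustrative)."""
    ratings = (data_sensitivity, system_access, criticality)
    if any(r not in (1, 2, 3) for r in ratings):
        raise ValueError("each rating must be 1, 2, or 3")
    total = sum(ratings)  # ranges from 3 to 9
    if data_sensitivity == 3 or total >= 7:
        return Depth.EXHAUSTIVE  # most sensitive data always gets full depth
    if total >= 5:
        return Depth.STANDARD
    return Depth.STREAMLINED
```

For example, `review_depth(1, 1, 1)` returns `Depth.STREAMLINED`, while `review_depth(3, 1, 1)` returns `Depth.EXHAUSTIVE` because of the sensitivity override. The exact factors and cutoffs matter less than writing them down, so that depth decisions are consistent and auditable.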

Documentation lag is the hidden killer here. If your AI tool is pulling from a policy document that's 18 months old, every answer it generates is potentially wrong. Validation workflows and scheduled content reviews are not optional. They're the foundation of trustworthy automation.
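A scheduled content review can start as simply as comparing each source document's last-reviewed date against a maximum age. This sketch assumes you track those dates in a dictionary; the one-year `MAX_AGE` default is an assumption, and a quarterly cycle would pass `timedelta(days=90)` instead. The document names and dates are hypothetical.

```python
from datetime import date, timedelta

MAX_AGE = timedelta(days=365)  # assumption: annual review; high-risk content may need less


def stale_sources(last_reviewed: dict[str, date], today: date,
                  max_age: timedelta = MAX_AGE) -> list[str]:
    """Return documents whose last review is older than max_age, oldest first."""
    overdue = [(reviewed, name) for name, reviewed in last_reviewed.items()
               if today - reviewed > max_age]
    return [name for _, name in sorted(overdue)]


# Hypothetical document inventory.
last_reviewed = {
    "Access Control Policy": date(2023, 1, 15),
    "Incident Response Plan": date(2024, 5, 1),
    "Encryption Standard": date(2023, 6, 1),
}
print(stale_sources(last_reviewed, today=date(2024, 7, 15)))
# ['Access Control Policy', 'Encryption Standard']
```

Here the Access Control Policy, last reviewed roughly 18 months earlier, is flagged, while the Incident Response Plan reviewed in May is not. Any answer drawing on a flagged document should be held back from automation until the document is re-reviewed.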

Maximizing security review impact: Continuous monitoring and knowledge management

Point-in-time reviews have a fundamental flaw: they tell you what a vendor's security looked like when they filled out the questionnaire, not what it looks like today. For high-risk vendors, that gap can be dangerous.

Forward-thinking compliance teams are moving toward continuous monitoring, where controls are validated against live evidence rather than static documents. Linking evidence to live controls supports real-time compliance and prevents "automated lying," a term for systems that generate confident answers from outdated or incorrect data.

Practical steps for building a continuous, defensible review process:

- Link questionnaire answers directly to live policy documents and control evidence

- Set automated alerts when source documents are updated or expire

- Use AI confidence scores to flag answers that need human review before submission

- Maintain a Trust Center that pre-answers common questionnaire requests at scale

- Schedule quarterly knowledge base audits to catch documentation drift
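Linking answers to live evidence can be approximated by storing a fingerprint of each source document at the moment an answer is approved, then re-checking it. The sketch uses SHA-256 from Python's standard `hashlib`; the `LinkedAnswer` structure and `answers_needing_review` function are hypothetical, and a real system would pull current documents from wherever your policies live rather than from an in-memory dictionary.

```python
import hashlib
from dataclasses import dataclass


def fingerprint(content: bytes) -> str:
    """SHA-256 hex digest of a document's current content."""
    return hashlib.sha256(content).hexdigest()


@dataclass
class LinkedAnswer:
    question: str
    answer: str
    source_doc: str   # hypothetical document identifier
    source_hash: str  # fingerprint of the source when the answer was approved


def answers_needing_review(answers: list[LinkedAnswer],
                           current_docs: dict[str, bytes]) -> list[str]:
    """Flag answers whose source document changed or disappeared since approval."""
    flagged = []
    for a in answers:
        content = current_docs.get(a.source_doc)
        if content is None or fingerprint(content) != a.source_hash:
            flagged.append(a.question)
    return flagged


approved_policy = b"MFA is required for all workforce accounts."
answers = [LinkedAnswer("Is MFA enforced?", "Yes, for all workforce accounts.",
                        "access_policy.md", fingerprint(approved_policy))]
current_docs = {"access_policy.md": b"MFA is required for administrator accounts."}
print(answers_needing_review(answers, current_docs))  # ['Is MFA enforced?']
```

A hash only tells you that a document changed, not whether the change affects the answer, so flagged items still need a human decision. The benefit is that nothing drifts silently: every edit to a source forces its linked answers back into review.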

Pro Tip: A Trust Center isn't just a convenience feature. It's a proactive risk management tool. When prospects and partners can self-serve security documentation, your team spends less time on repetitive requests and more time on high-risk reviews.

AI solutions and always-on trust centers are recommended for scalable third-party risk management. The combination of live evidence, AI-assisted scoring, and a public or gated Trust Center creates a review process that's both efficient and audit-ready. Automated compliance tools and AI for continuous monitoring are making this model accessible even for teams without large dedicated resources.

Efficiency wins: Quantifiable benefits of modern security reviews

The numbers make a compelling case for modernizing your review process. Manual reviews can consume up to 23 hours per week per vendor relationship, a figure that compounds quickly across a large vendor portfolio.

Key stat: Automation yields 70 to 90% time savings, with 75 to 90% auto-completion rates. Organizations using technology surface risks significantly earlier than those relying on manual processes.

| Metric | Manual process | Automated process |
|---|---|---|
| Time per questionnaire | 12 to 40 hours | 1 to 4 hours |
| Weekly vendor review load | Up to 23 hours | 3 to 6 hours |
| Auto-completion rate | 0% | 75 to 90% |
| Risk identification speed | Weeks | Days or hours |
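The savings percentages follow from simple arithmetic: saving = 1 − automated time ÷ manual time. The sketch below applies it to the ranges in the metrics table; pairing the endpoints gives the widest possible bounds, so treat the results as a sanity check on the cited figures rather than new data.

```python
def savings(manual_hours: float, automated_hours: float) -> float:
    """Fractional time saved: 1 - automated / manual."""
    if manual_hours <= 0:
        raise ValueError("manual_hours must be positive")
    return 1 - automated_hours / manual_hours


# Per questionnaire: 12 to 40 hours manual vs 1 to 4 hours automated.
worst = savings(12, 4)  # shortest manual job vs slowest automated job
best = savings(40, 1)   # longest manual job vs fastest automated job
# Weekly load: up to 23 hours manual vs 3 to 6 hours automated.
weekly_low = savings(23, 6)
weekly_high = savings(23, 3)

print(f"per questionnaire: {worst:.1%} to {best:.1%}")
print(f"weekly load: {weekly_low:.1%} to {weekly_high:.1%}")
# per questionnaire: 66.7% to 97.5%
# weekly load: 73.9% to 87.0%
```

The per-questionnaire bounds (66.7% to 97.5%) bracket the cited 70 to 90%, and the weekly figures land at roughly 74% to 87%, inside that range.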

Among organizations using technology in their review process, 86% report faster risk identification. That's not just an efficiency gain. It's a direct reduction in the window of exposure between when a vendor risk emerges and when your team acts on it.

Additional benefits compliance teams report include:

- Faster contract cycles because security reviews no longer bottleneck procurement

- Improved regulatory standing from consistent, documented review trails

- Reduced team burnout from eliminating repetitive manual work

- Earlier remediation because risks are flagged before they escalate

The benefits of compliance automation extend well beyond time savings. When your team isn't buried in questionnaire responses, they can focus on the vendor relationships that actually carry the most risk. Pairing automation with the right information security tools for compliance creates a compounding advantage over time.

The uncomfortable truth: Why most security reviews fail (and how to fix them)

Here's what we've seen repeatedly: most security review programs fail not because teams lack effort, but because they're built on the wrong foundation. The entire process is oriented around producing a compliant-looking artifact rather than generating genuine risk insight.

Conventional wisdom has put too much emphasis on periodic, exhaustive reviews, missing the shift to ongoing validation with provable evidence. Teams spend weeks compiling responses for an annual review, then file the results and move on. Nothing is monitored. Nothing is updated. The next review starts from scratch.

The fix isn't just adding automation. It's changing what you're trying to achieve. Compliance for audit purposes and compliance for actual risk reduction are not the same thing. The first produces documentation. The second produces security. Rethinking compliance with AI means designing your review process around live evidence and continuous assurance, not annual snapshots.

Automation must be paired with verification. An AI tool that confidently generates answers from stale policies is worse than a manual process, because it creates false confidence at scale. The teams that get this right treat their knowledge base as a living system, not a static archive.

Take your security reviews to the next level

If your team is still spending dozens of hours per week on security questionnaires, there's a faster, more accurate path available. Skypher is built specifically for compliance officers and risk managers who need to handle high volumes of security reviews without sacrificing accuracy or defensibility.

Skypher's platform combines AI-driven questionnaire automation with a customizable Trust Center, real-time collaboration tools, and more than 40 integrations, including TPRM platforms such as OneTrust and ServiceNow and collaboration tools such as Slack and Microsoft Teams. You can answer up to 200 questions in under a minute, support multiple products and entities, and maintain a live knowledge base that keeps your answers accurate as your organization evolves. If you're ready to move from reactive to proactive security reviews, Skypher gives your team the infrastructure to do it at scale.

Frequently asked questions

What is a security review in risk management?

A security review is a structured assessment of a third party's cybersecurity practices, usually conducted through standardized questionnaires, to ensure regulatory compliance and manage vendor-related risks. Reviews are typically mapped to frameworks such as SOC 2 and ISO 27001.

How much time can automation save on security questionnaire response?

Automation reduces questionnaire time by 70 to 90%, bringing completion from 12 to 40 hours down to just 1 to 4 hours per request, freeing your team for higher-value risk analysis.

Why are continuous security reviews better than point-in-time assessments?

Continuous reviews provide real-time evidence, reduce the risk of stale answers, and improve defensibility for audits. Continuous validation linked to live controls prevents automated lying and supports genuine compliance assurance.

What frameworks are referenced in security reviews?

Common frameworks and questionnaires include SOC 2, ISO 27001, SIG, and CAIQ, and regulations such as SOX can add requirements of their own. Security reviews assess vendors against these standards, and the right mix depends on your industry, regulatory environment, and vendor risk tier.