TL;DR:

- Security questionnaire accuracy is crucial to prevent trust issues, legal risks, and disqualification.

- Establishing proper foundations like policy inventory and evidence library improves response reliability.

- AI-driven workflows significantly enhance accuracy, reduce time, and support continuous verification.

Security questionnaire accuracy is one of the most quietly damaging problems in enterprise risk management. When your responses are rushed, inconsistent, or built on stale evidence, you're not just wasting time. You're exposing your organization to vendor trust issues, compliance failures, and potential breach liability. The problem is widespread: only 34% of TPRM professionals trust the questionnaire responses they receive. That number should concern anyone responsible for third-party risk. This guide walks you through a practical, step-by-step approach to improving accuracy at every stage of the process.

Table of Contents

- Why security questionnaire accuracy matters

- What you need: Foundations for accurate responses

- Step-by-step: Completing questionnaires with maximum accuracy

- Verification and continuous improvement

- The uncomfortable truth: Why answers alone aren't enough

- Take the next step: Smarter questionnaire accuracy with Skypher

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Accuracy requires process | Effective responses need clear roles, the right resources, and defined review procedures. |
| AI beats manual methods | AI-driven automation greatly improves accuracy: 90 to 96% versus 60 to 75% for traditional methods. |
| Verification is essential | Post-response reviews, audits, and continuous monitoring ensure lasting accuracy and trust. |
| Questionnaires aren't enough | Relying on answers alone can create a false sense of security; integrate monitoring and independent checks. |
| Efficiency reduces error | Streamlined workflows and centralized tools cut manual mistakes and speed up completion. |

Why security questionnaire accuracy matters

Let's be direct: a wrong answer on a security questionnaire is not just a paperwork problem. It can signal false assurance to a potential client or partner, create legal exposure if a breach later reveals the claim was inaccurate, or disqualify your organization from a deal entirely when inconsistencies surface during review.

The accuracy problem is structural, not just behavioral. Security teams are often stretched thin, asked to complete questionnaires under tight deadlines, and forced to rely on institutional knowledge rather than verified documentation. The result is a predictable pattern of errors:

- Copy-paste answers from previous questionnaires that no longer reflect current controls

- Vague or overclaiming language that isn't supported by evidence

- Inconsistent responses because different team members answer similar questions differently

- Outdated certifications or policy references that have since lapsed

- Missing context on answers that technically apply but require important qualifications

The time burden compounds the problem. Manual completion takes 5 to 15 hours per questionnaire, and vendors average 23 hours per week on them. That volume makes thorough review nearly impossible at scale.

The trust gap is real. When only about 1 in 3 risk professionals trust the answers they receive, the entire framework of questionnaire-based due diligence is undermined. Accuracy isn't just about being correct. It's about being verifiable.

For security teams focused on streamlining questionnaires without sacrificing quality, the challenge is building a process that catches errors before they leave your desk. That starts with the right foundations.

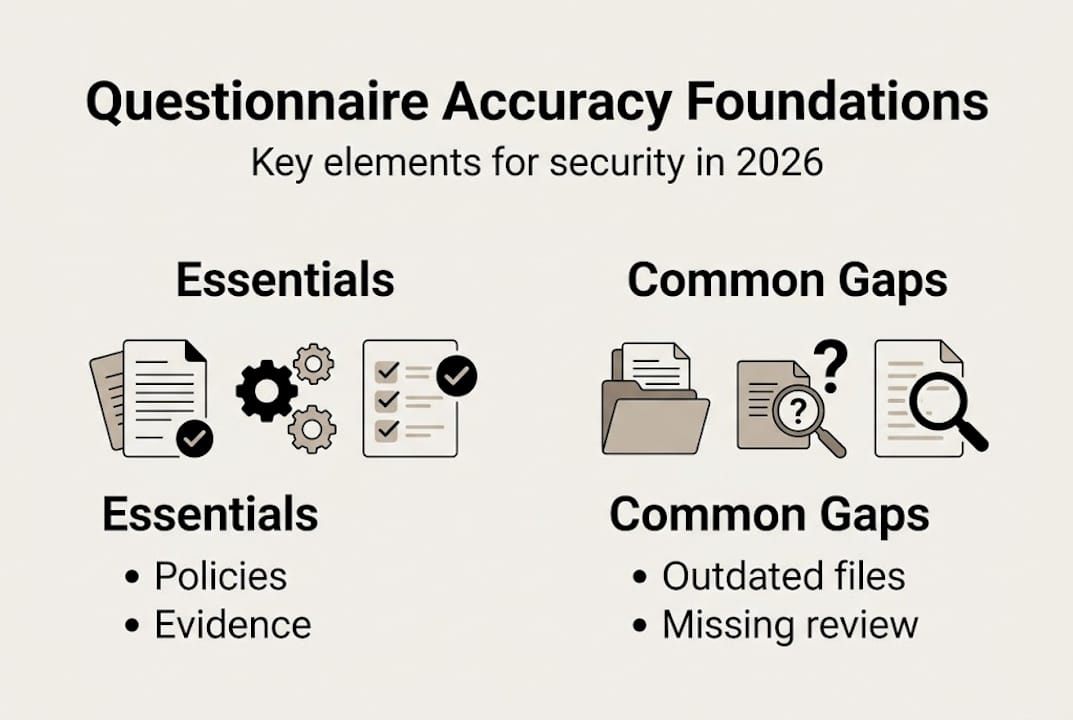

What you need: Foundations for accurate responses

Before you can answer questionnaires accurately, you need to know exactly what your organization controls, certifies, and can prove. Many accuracy failures happen upstream, before a single question is answered.

Here are the core prerequisites every security team should have in place:

- A living inventory of policies, controls, and certifications — including expiration dates and owners

- Centralized evidence storage — audit logs, penetration test results, SOC 2 reports, and other documentation in one accessible location

- Clear ownership — defined roles for who drafts answers, who reviews for technical accuracy, and who gives final approval

- Defined risk tiers — risk-based tiering tailors questionnaire depth to vendor risk, improving focus and response quality

That last point is underused. Not every questionnaire deserves the same investment. A high-risk vendor with access to customer PII (personally identifiable information) warrants deep, evidence-backed responses. A low-risk SaaS tool used by one team does not.

| Foundation element | Purpose | Common gap |
|---|---|---|
| Policy inventory | Accurate references | Outdated versions in use |
| Evidence library | Verifiable claims | Disorganized or inaccessible files |
| Ownership matrix | Clear accountability | No one assigned, delays review |
| Risk tier definitions | Proportionate effort | Flat approach wastes resources |
| Approved answer templates | Consistency | Ad hoc answers per respondent |

These aren't just organizational best practices. They are the difference between a response you can stand behind and one that unravels under scrutiny. Review the questionnaire essentials your team should be tracking to build this infrastructure efficiently.

Pro Tip: Build a shared "evidence shelf" in your document management system. Tag each piece of evidence by control category, expiration date, and applicable frameworks. When a question references encryption in transit, you pull the current TLS configuration report in seconds, not hours.
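To make the evidence shelf idea concrete, here is a minimal sketch of how tagged evidence could be modeled and queried. Everything in it is an assumption for illustration: the `EvidenceItem` fields, the category names, the document titles, and the dates are hypothetical, and a real document management system will have its own metadata model.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class EvidenceItem:
    """One document on the evidence shelf (all fields are illustrative)."""
    title: str
    control_category: str   # e.g. "encryption-in-transit"
    expires: date           # date after which the item must be refreshed
    frameworks: frozenset = field(default_factory=frozenset)


def find_current_evidence(shelf, control_category, today):
    """Return unexpired items for a control category, longest-valid first."""
    matches = [
        item for item in shelf
        if item.control_category == control_category and item.expires >= today
    ]
    return sorted(matches, key=lambda item: item.expires, reverse=True)


# Hypothetical shelf contents.
shelf = [
    EvidenceItem("TLS configuration report (Q1)", "encryption-in-transit",
                 date(2025, 3, 31), frozenset({"SOC 2", "ISO 27001"})),
    EvidenceItem("TLS configuration report (Q3)", "encryption-in-transit",
                 date(2026, 9, 30), frozenset({"SOC 2", "ISO 27001"})),
    EvidenceItem("Penetration test summary", "vulnerability-management",
                 date(2026, 1, 15), frozenset({"SOC 2"})),
]

current = find_current_evidence(shelf, "encryption-in-transit", date(2025, 6, 1))
print([item.title for item in current])
```

Filtering on the expiration date at lookup time means an expired report never surfaces as a candidate, which is the point of tagging evidence in the first place.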

Step-by-step: Completing questionnaires with maximum accuracy

With your foundations set, here is a repeatable process that dramatically reduces error at every stage.

- Triage the questionnaire — Scan all questions before answering any. Identify which controls are covered, flag questions that need SME (subject matter expert) input, and estimate time requirements.

- Map questions to existing answers — Before writing anything new, check your approved template library. Reuse verified, reviewed answers wherever possible.

- Pull current evidence — For each response, attach or reference the most recent supporting document. Never reference a certification or report without confirming it's still valid.

- Draft with scoped language — Avoid absolute claims. "Encryption is applied to all customer data at rest in production systems" is stronger and safer than "We encrypt everything."

- Technical review — A second team member with direct control ownership should review answers in their domain before submission.

- Final approval pass — A designated approver checks for consistency across the full document, ensuring similar questions received similar answers.

- Log and archive — Save the completed questionnaire, evidence links, and reviewer sign-offs. You'll need this for future audits and repeat questionnaires.
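The "map questions to existing answers" step can be sketched in a few lines. This is a simple illustration using string similarity from Python's standard library, not a description of how any particular product matches questions. The library contents, the `suggest_answer` helper, and the 0.8 threshold are all assumptions; a match should be treated as a suggestion for a reviewer, never as an automatic submission.

```python
from difflib import SequenceMatcher

# Hypothetical approved-answer library: canonical question -> reviewed answer.
APPROVED_ANSWERS = {
    "Do you encrypt customer data at rest?":
        "Encryption is applied to all customer data at rest in production systems.",
    "Do you test your incident response plan?":
        "Placeholder: reviewed answer describing the last IR test date and outcome.",
}


def normalize(text):
    """Lowercase and collapse whitespace so trivial differences do not affect matching."""
    return " ".join(text.lower().split())


def suggest_answer(question, library, threshold=0.8):
    """Return (canonical_question, answer, score) for the best match, or None below the threshold."""
    best = None
    for canonical, answer in library.items():
        score = SequenceMatcher(None, normalize(question), normalize(canonical)).ratio()
        if best is None or score > best[2]:
            best = (canonical, answer, score)
    if best is not None and best[2] >= threshold:
        return best
    return None


match = suggest_answer("Do you encrypt  customer data AT REST?", APPROVED_ANSWERS)
no_match = suggest_answer("What is your office address?", APPROVED_ANSWERS)
```

Questions that return `None` are the ones to route to an SME during triage, since nothing in the approved library is close enough to reuse.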

The accuracy gap between traditional and AI-enhanced workflows is significant. AI agents achieve 90 to 96% accuracy on security questionnaires, compared to 60 to 75% for traditional tools. That's not a marginal improvement. It's the difference between responses that hold up under scrutiny and ones that don't.

| Step | Traditional manual | AI-enhanced workflow |
|---|---|---|
| Answer sourcing | Individual memory or old files | Pulls from verified, indexed knowledge base |
| Consistency | Varies by respondent | Standardized across all responses |
| Evidence linking | Manual search | Auto-suggested from document library |
| Review cycle | Multiple email chains | Inline comments with tracked changes |
| Completion time | 5 to 15 hours per questionnaire | Minutes to under 1 hour |

Explore how AI-driven automation changes the completion process end to end, and see the concrete data on automation time savings for security teams handling high questionnaire volume.

Pro Tip: Create a "pre-flight checklist" your team runs before submitting any questionnaire. Include: evidence attached and dated, no absolute claims without scope, no certifications referenced past their validity date, and a second reviewer's sign-off.
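A pre-flight checklist like this is easy to partially automate. The sketch below checks the four items from the tip for a single response. The `Response` fields and the list of absolute terms are assumptions chosen for illustration; a keyword match cannot judge whether a claim is properly scoped, so a flag here means "send to a human", not "reject".

```python
import re
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Illustrative list of absolute terms; tune it to your own style guide.
ABSOLUTE_TERMS = re.compile(
    r"\b(everything|always|never|completely|fully|guaranteed)\b", re.IGNORECASE
)


@dataclass
class Response:
    question: str
    answer: str
    evidence_dated: Optional[date]    # date on the attached evidence, None if nothing attached
    cert_valid_until: Optional[date]  # validity end of any cited certification, None if none cited
    reviewer: Optional[str]           # second reviewer who signed off


def preflight(response, today):
    """Return a list of problems; an empty list means the response passes."""
    problems = []
    if response.evidence_dated is None:
        problems.append("no dated evidence attached")
    if ABSOLUTE_TERMS.search(response.answer):
        problems.append("absolute claim: add scope or rephrase")
    if response.cert_valid_until is not None and response.cert_valid_until < today:
        problems.append("cited certification is past its validity date")
    if not response.reviewer:
        problems.append("missing second reviewer sign-off")
    return problems


today = date(2025, 6, 1)  # hypothetical submission date
bad = Response("Do you encrypt data?", "We encrypt everything.",
               None, date(2025, 1, 1), None)
good = Response("Do you encrypt data?",
                "Encryption is applied to all customer data at rest in production systems.",
                date(2025, 4, 1), date(2026, 1, 1), "second-reviewer")
bad_problems = preflight(bad, today)
good_problems = preflight(good, today)
```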

Verification and continuous improvement

Submitting the questionnaire is not the finish line. Verification after completion, and on an ongoing basis, is what separates teams with genuine accuracy from teams that just appear accurate until they're challenged.

Here is a post-completion verification checklist:

- Cross-reference check — Did similar questions get consistent answers? Compare responses across sections.

- Evidence date audit — Is every referenced document current? Flag anything older than 12 months for review.

- Scope validation — Are claims properly qualified? Watch for overly broad statements.

- Incident response check — If you claimed tested IR (incident response) procedures, confirm the last test date and outcome.

- Third-party review — For high-stakes questionnaires, consider a peer review from outside the core team.
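Two of these checks, the evidence date audit and the cross-reference check, lend themselves to a quick script. The following is a rough sketch under stated assumptions: "12 months" is approximated as 365 days, each response is assumed to have been tagged with a topic during triage, and the document names, topics, and answers are made up. Exact-text comparison only catches blunt inconsistencies, so it complements a human read rather than replacing it.

```python
from datetime import date, timedelta

MAX_EVIDENCE_AGE = timedelta(days=365)  # "older than 12 months", approximated as 365 days


def stale_evidence(evidence_dates, today):
    """Return names of documents dated more than MAX_EVIDENCE_AGE before today, sorted by name."""
    return sorted(
        name for name, dated in evidence_dates.items()
        if today - dated > MAX_EVIDENCE_AGE
    )


def inconsistent_topics(responses):
    """Given (topic, answer) pairs, return topics that received more than one distinct answer."""
    answers_by_topic = {}
    for topic, answer in responses:
        normalized = " ".join(answer.lower().split())
        answers_by_topic.setdefault(topic, set()).add(normalized)
    return sorted(topic for topic, answers in answers_by_topic.items() if len(answers) > 1)


# Hypothetical inputs.
today = date(2025, 6, 1)
evidence = {
    "SOC 2 report": date(2024, 2, 1),
    "Penetration test summary": date(2025, 3, 10),
}
responses = [
    ("mfa", "MFA is enforced for all workforce accounts."),
    ("mfa", "MFA is enforced for administrators only."),
    ("backups", "Backups run daily."),
    ("backups", "backups run  daily."),
]

stale = stale_evidence(evidence, today)
conflicts = inconsistent_topics(responses)
```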

Edge cases are where accuracy problems most often hide. Common pitfalls include overclaiming controls without specifics, using stale evidence, giving inconsistent answers across teams, and citing untested incident plans. Scoped language and consistent evidence hygiene are the practical fixes.

Questionnaires are point-in-time snapshots. They tell a reviewer what you claim was true on the day you answered. That's why verification can't stop at submission. Continuous monitoring, certifications, and regular audits are what actually build trust over time.

Set a review cadence: quarterly reviews of your core answer library, annual full audits of your policy and evidence inventory, and immediate updates whenever a control changes or a certification is renewed. AI can help here too, for example by detecting response drift or automatically flagging expired evidence.

Cross-team collaboration also matters here. Your security engineers know the technical truth. Your compliance team knows what the frameworks require. Your legal team knows what you can and cannot commit to in writing. All three perspectives belong in your review process.

The uncomfortable truth: Why answers alone aren't enough

Here is something most guides won't say directly: even a perfectly accurate questionnaire only proves what you claimed was true on one specific day. It does not prove your controls are working tomorrow, or next quarter, or after your next major infrastructure change.

Only about 1 in 3 risk professionals trust questionnaire responses. That number reflects a rational recognition that point-in-time assertions, even well-documented ones, are not the same as verified, ongoing assurance.

The organizations that actually earn trust are the ones that pair rigorous questionnaire accuracy with independent certifications, third-party audits, and continuous monitoring programs. Questionnaires become evidence of a posture, not a substitute for one. The real risk reduction happens when people, process, and technology stay aligned continuously, not just during a vendor review cycle. Explore how AI and compliance transformation supports that ongoing posture rather than just automating a one-time task.

Take the next step: Smarter questionnaire accuracy with Skypher

If your team is still managing questionnaire accuracy through spreadsheets, email chains, and manual document searches, you're carrying a risk that scales badly as volume grows.

Skypher's AI Security Questionnaire Automation platform centralizes your evidence library, applies AI-driven answer suggestions from a verified knowledge base, and supports real-time collaboration across your security and compliance teams. With integrations across 40-plus TPRM platforms and built-in accuracy controls, your responses are consistent, evidence-backed, and ready for scrutiny. Pair that with Skypher's Trust Center Platform to give reviewers on-demand access to your verified security posture. Book a personalized demo and see the difference structured accuracy makes.

Frequently asked questions

How can I quickly check the accuracy of a security questionnaire response?

Review the supporting evidence attached to each response, check for inconsistencies between similar questions, and ensure a second reviewer has signed off before submission. Scoped language and evidence hygiene are your fastest accuracy indicators.

What are the main reasons for inaccuracy in security questionnaires?

The main causes are manual error, outdated responses, poor cross-team coordination, and lack of verified evidence. Only 34% of TPRM professionals trust the responses they receive, which reflects how common these gaps are.

Does using AI really improve the accuracy of these questionnaires?

Yes. Benchmarks show AI agents reach 90 to 96% accuracy on security questionnaires, significantly outperforming the 60 to 75% typical of traditional manual approaches.

How much time can automation save on security questionnaires?

Dramatically. Questionnaire automation can cut compliance time by up to 95%, freeing your team to focus on verification and higher-value risk work.
