TL;DR:

- AI streamlines security questionnaire workflows, reducing compliance time by up to 95%.

- Effective AI use depends on strong governance, quality knowledge bases, and ongoing validation.

- Full automation is not the goal; AI should handle repetitive questions, empowering experts on complex issues.

Most compliance managers assume AI questionnaire tools simply fill in answers faster. That assumption is both partially true and dangerously incomplete. Security questionnaires are formal compliance commitments that carry real legal, regulatory, and reputational weight, especially in tech and finance. Get them wrong, and you risk failed audits, lost deals, and damaged client trust. Get them right with AI, and you can cut review cycles dramatically while improving consistency. This article unpacks what AI automation actually does in security questionnaire workflows, where it adds genuine value, where it introduces new risks, and how to govern it properly.

Table of Contents

- Understanding security questionnaires and their challenges

- How AI transforms the security questionnaire process

- Quality, accuracy, and risk: Measurement matters

- Governance, risk, and compliance: AI as part of your compliance fabric

- Limitations, continuous improvement, and the future of AI in questionnaires

- A fresh perspective: What most experts overlook about AI in security questionnaires

- Automate and secure your security questionnaire process with Skypher

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Automation boosts efficiency | AI can slash the time spent on security questionnaires, but its value depends on smart process integration. |
| Measurement is crucial | Relying on headline metrics alone can be risky, so always monitor accuracy, precision, and false positives closely. |
| Governance prevents risk | Comprehensive controls, audit trails, and compliance frameworks are essential when using AI for compliance tasks. |
| Continuous improvement | The best results come from updating AI knowledge bases and keeping humans involved in key review steps. |

Understanding security questionnaires and their challenges

Security questionnaires are not simple forms. They are structured assessments sent by customers, partners, regulators, or procurement teams to verify that your organization meets specific security, privacy, and compliance standards. In tech and finance, these documents routinely run 100 to 400 questions, covering everything from encryption protocols and access controls to incident response procedures and third-party risk management.

The manual process is brutal. A typical workflow involves routing the questionnaire to multiple subject-matter experts (SMEs), chasing responses across email threads, reconciling conflicting answers, and then formatting everything for submission. This creates several well-documented bottlenecks:

- Knowledge fragmentation: Answers live in the heads of individual engineers, legal staff, or security architects, not in a centralized, searchable system.

- SME bottlenecks: Experts are pulled away from core work to answer repetitive questions they have answered dozens of times before.

- Version control failures: Teams submit outdated or inconsistent answers because no single source of truth exists.

- Deadline pressure: Procurement timelines do not wait for internal coordination, so rushed responses increase error rates.

"Security questionnaire compliance failures can have significant operational and business impact," according to AI security assessment research, underscoring that incomplete or inaccurate responses are not just administrative problems but genuine business risks.

The consequences of mismanaged responses range from stalled contracts and failed vendor assessments to regulatory scrutiny. A single inaccurate answer about data residency or encryption standards can trigger a full re-audit. For compliance managers, the stakes are high enough that tips for streamlining questionnaires have become essential reading, not optional best practices.

Automation is attractive because it attacks all of these pain points simultaneously. But attractive is not the same as risk-free, and that distinction matters enormously for the people responsible for signing off on these submissions.

How AI transforms the security questionnaire process

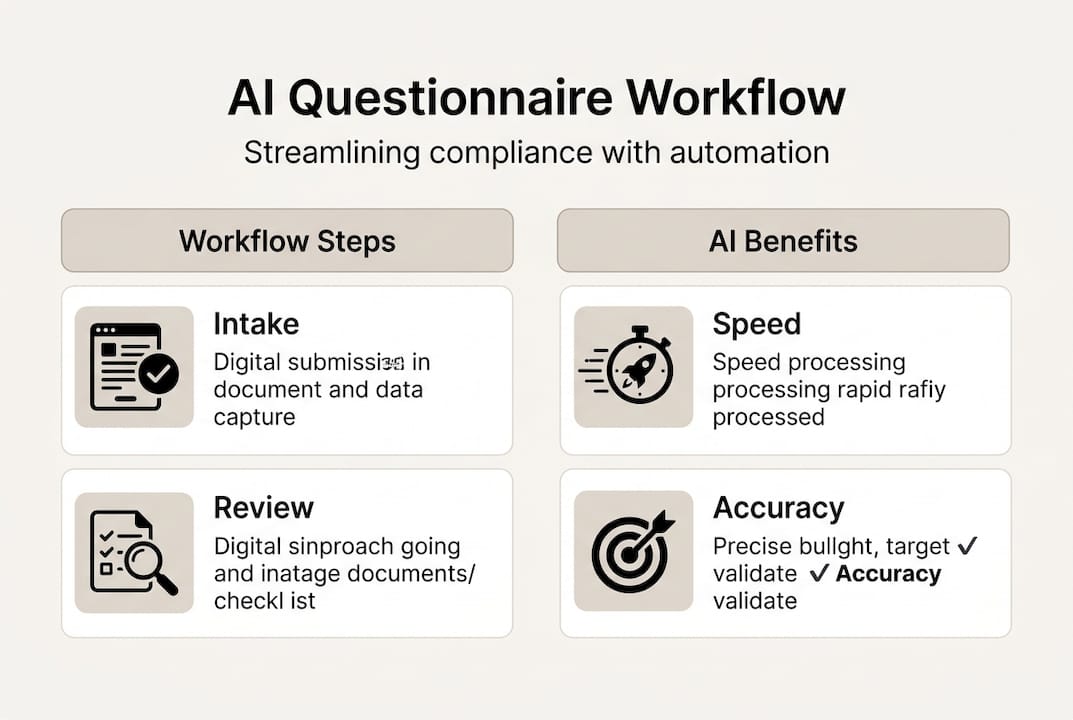

AI does not just answer questions faster. It restructures the entire workflow from intake to export. Here is how a modern AI-powered process compares to the manual alternative:

| Step | Manual process | AI-powered process |
|---|---|---|
| Intake | Email or portal download, manual routing | Auto-parse any format, instant classification |
| Response generation | SME drafts from memory or prior docs | AI searches knowledge base, suggests answers |
| Review | Full team review of every answer | SME reviews only flagged or low-confidence items |
| Evidence mapping | Manual attachment of supporting docs | Auto-linked evidence from connected repositories |
| Export | Manual formatting per portal | One-click export to required format |

The numbered workflow looks like this in practice:

1. Intake and parsing: The questionnaire is uploaded or pulled directly from a portal. AI parses every question regardless of format.

2. Knowledge base search: The system searches your existing approved answers, policies, and documentation to find the best match.

3. Auto-fill with confidence scoring: High-confidence answers are pre-filled. Lower-confidence items are flagged for SME review.

4. SME tagging and escalation: Questions outside the knowledge base are routed to the right expert automatically.

5. Evidence mapping: Supporting documents from connected repositories like SharePoint or Confluence are attached to relevant answers.

6. Review and approval: A compliance manager reviews the full draft, approves, and exports in the required format.
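
To make the search, confidence scoring, and escalation steps concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any particular product works: the `KNOWLEDGE_BASE` contents, the two thresholds, and the use of simple string similarity in place of a real retrieval model are all hypothetical choices.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import Optional

# Hypothetical knowledge base: approved question -> approved answer.
KNOWLEDGE_BASE = {
    "Do you encrypt data at rest?": "Yes. Data at rest is encrypted with AES-256.",
    "Do you have an incident response plan?": "Yes. The plan is reviewed annually.",
}

# Assumed cutoffs for illustration; tune them on your own reviewed data.
AUTO_FILL_THRESHOLD = 0.85
SME_REVIEW_THRESHOLD = 0.60


@dataclass
class Draft:
    question: str
    answer: Optional[str]
    confidence: float
    route: str  # "auto_fill", "sme_review", or "escalate"


def similarity(a: str, b: str) -> float:
    """Crude stand-in for a real retrieval score, in the range 0.0 to 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def draft_answer(question: str) -> Draft:
    """Find the closest approved question and route by confidence."""
    best_match = max(KNOWLEDGE_BASE, key=lambda q: similarity(question, q))
    score = similarity(question, best_match)
    if score >= AUTO_FILL_THRESHOLD:
        return Draft(question, KNOWLEDGE_BASE[best_match], score, "auto_fill")
    if score >= SME_REVIEW_THRESHOLD:
        # Suggest the answer, but flag it for SME review before it is used.
        return Draft(question, KNOWLEDGE_BASE[best_match], score, "sme_review")
    # Nothing close enough in the knowledge base: route to an expert.
    return Draft(question, None, score, "escalate")
```

The design point is that no answer leaves the system on similarity alone: mid-confidence matches carry a suggested answer but still go to an SME, and anything below the lower threshold is escalated with no suggestion at all.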

This is where AI-driven transformation of the questionnaire process becomes tangible. Teams report that, when properly implemented, questionnaire automation cuts compliance time by up to 95%. For organizations with high questionnaire volume, that is a measurable operational shift rather than a marketing claim.

The AI advantages in questionnaire automation extend beyond speed. Consistency improves because the same approved language is used across all submissions. Audit trails become cleaner. And SMEs spend less time on repetitive work and more time on genuinely complex security questions.

Pro Tip: Your knowledge base is the engine. An AI tool is only as good as the content it searches. Invest time upfront in cleaning, tagging, and approving your baseline answers before you automate at scale. Revisit it quarterly.

Quality, accuracy, and risk: Measurement matters

Not all AI questionnaire tools perform equally, and this is where many compliance managers get burned. Vendors often lead with headline accuracy numbers that sound impressive in demos but fall apart under real-world conditions.

The metrics that actually matter are:

- Precision: Of the answers the AI auto-fills, how many are actually correct?

- Recall: Of all answerable questions, how many does the AI successfully address?

- False positive rate: How often does the AI confidently suggest a wrong answer?

- Evidence citation accuracy: Are the supporting documents actually relevant to the answer provided?
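
The first three metrics can be computed from a sample of questions that SMEs have already reviewed. The sketch below is one possible reading of the definitions above, not a standard: the `ReviewedItem` fields are hypothetical, and the false positive rate is taken here as the share of all questions where the tool pre-filled a wrong answer.

```python
from dataclasses import dataclass


@dataclass
class ReviewedItem:
    answerable: bool   # an approved answer exists in the knowledge base
    auto_filled: bool  # the tool pre-filled an answer without flagging it
    correct: bool      # an SME judged the pre-filled answer correct


def questionnaire_metrics(items: list[ReviewedItem]) -> dict[str, float]:
    """Compute precision, recall, and false positive rate for a reviewed sample."""
    filled = [i for i in items if i.auto_filled]
    answerable = [i for i in items if i.answerable]
    correct_filled = [i for i in filled if i.correct]
    wrong_filled = [i for i in filled if not i.correct]
    addressed = [i for i in correct_filled if i.answerable]
    return {
        # Of the answers the AI auto-filled, how many were correct?
        "precision": len(correct_filled) / len(filled) if filled else 0.0,
        # Of all answerable questions, how many were correctly addressed?
        "recall": len(addressed) / len(answerable) if answerable else 0.0,
        # Assumed reading: confidently wrong answers as a share of all questions.
        "false_positive_rate": len(wrong_filled) / len(items) if items else 0.0,
    }


# Example sample of ten reviewed questions.
sample = (
    [ReviewedItem(True, True, True)] * 7      # pre-filled and correct
    + [ReviewedItem(True, True, False)]       # pre-filled but wrong
    + [ReviewedItem(True, False, False)]      # answerable, left for an SME
    + [ReviewedItem(False, False, False)]     # not in the knowledge base
)
metrics = questionnaire_metrics(sample)
```

Running this on a sample drawn from your own questionnaires, rather than a vendor's demo set, is what makes the resulting numbers comparable across tools.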

| Metric | Low performance | High performance | Business impact |
|---|---|---|---|
| Precision | Below 70% | Above 90% | Low precision means more SME review time, not less |
| Recall | Below 60% | Above 85% | Low recall leaves too many questions unanswered |
| False positive rate | Above 15% | Below 5% | High false positives escalate review costs and compliance risk |
| Evidence accuracy | Below 65% | Above 88% | Mislinked evidence can cause audit failures |

Benchmarks for AI questionnaire tools show that AI tool accuracy varies widely across vendors, and over-trusting headline metrics leads directly to operational risk. A tool that claims 95% accuracy in a controlled demo may perform at 72% on your actual questionnaire mix.

False positives deserve special attention. When an AI confidently fills in an incorrect answer about your data retention policy or encryption standard, and that answer passes review because a compliance manager trusted the confidence score, the result can be a submitted questionnaire with factually wrong compliance claims. That is not a minor error.

The path forward is to treat vendor evaluation and AI risk management as an ongoing practice, not a one-time purchase decision. Ask vendors for benchmark data on your specific question types, not generic accuracy claims.

Governance, risk, and compliance: AI as part of your compliance fabric

AI that drafts security questionnaire responses is not a passive tool. It is an agent making compliance assertions on your organization's behalf. That changes your risk model significantly.

AI agents that draft questionnaire responses require rigorous governance, including logging and ongoing compliance monitoring, to ensure accountability at every step.

Minimum governance controls for any AI-powered questionnaire system include:

- Role-based permissions: Control who can approve, edit, or export responses. Not every user should have submission rights.

- Tamper-evident audit trails: Every AI suggestion, human edit, and approval action should be logged with timestamps and user attribution.

- Data-use consent and residency controls: Know exactly where your questionnaire data and knowledge base content are stored and processed.

- Escalation protocols: Define clear rules for when AI answers must be escalated to a senior SME or legal reviewer.

- Framework alignment: Map your governance controls to NIST AI RMF and ISO 42001 to ensure your AI use is defensible in audits.
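
One common way to make an audit trail tamper-evident is to chain entries together with hashes, so that changing any past entry invalidates everything after it. The following Python sketch illustrates the idea using only the standard library. The field names and the `append_entry` and `verify_chain` helpers are hypothetical, and a production system would also need to store the latest hash somewhere the log's writers cannot modify.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(body: dict) -> str:
    """Hash an entry's fields in a stable key order."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log: list, user: str, action: str, detail: str) -> dict:
    """Append a timestamped, user-attributed entry linked to the previous one."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "action": action,  # e.g. "ai_suggestion", "human_edit", "approval"
        "detail": detail,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "hash": _entry_hash(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return True only if no entry has been altered, removed, or reordered."""
    prev = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or entry["hash"] != _entry_hash(body):
            return False
        prev = entry["hash"]
    return True
```

For example, after logging an AI suggestion, a human edit, and an approval, `verify_chain(log)` returns `True`; editing the `detail` of the first entry afterwards makes it return `False`.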

This is what AI compliance transformation looks like in practice: not just faster answers, but answers that are traceable, reviewable, and defensible. Organizations that treat AI questionnaire tools as compliance infrastructure rather than productivity shortcuts are the ones that perform best in third-party audits.

More broadly, governance frameworks for managing digital risk in AI provide the structure that keeps AI outputs aligned with your actual security posture.

Pro Tip: Schedule quarterly audits of your AI output logs. Look for patterns in low-confidence answers, frequent SME overrides, and evidence mismatches. These patterns reveal gaps in your knowledge base before they become audit findings.

Limitations, continuous improvement, and the future of AI in questionnaires

Honesty matters here. Many AI questionnaire tools on the market today are closer to advanced search and templating systems than true learning agents. They do not automatically improve from corrections unless you explicitly retrain or update the knowledge base. That distinction has real operational consequences.

Practitioner experience with AI automation confirms that some solutions offer only basic search and templating, lack genuine learning, and require thoughtful design for knowledge ownership. Buying a tool is not the same as solving the problem.

Common limitations to evaluate before adoption:

- No automatic learning: Edits made by SMEs do not feed back into the model unless there is a structured feedback loop.

- Shallow workflow integration: Many tools handle response generation but do not connect to ticketing, approval, or evidence management systems.

- Governance gaps: Audit trails are incomplete or not exportable in formats required by compliance frameworks.

- Single-language or single-format constraints: Enterprise environments often need multilingual support and multi-format parsing that basic tools cannot handle.

Best practices for continuous improvement include:

- Update your knowledge base after every completed questionnaire, capturing new approved answers immediately.

- Build a formal SME feedback loop where overrides and corrections are reviewed monthly and used to improve base content.

- Track your AI acceptance rate over time. If SMEs are overriding more than 30% of AI suggestions, your knowledge base needs attention.

- Require vendors to show a roadmap for agentic workflow features and learning capabilities before committing long-term.
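
Tracking the override rate does not require special tooling. As a rough sketch, assuming you can export each reviewed AI suggestion as a `(month, overridden)` pair (a hypothetical format), the 30% threshold mentioned above can be checked like this:

```python
from collections import defaultdict

OVERRIDE_ALERT_THRESHOLD = 0.30  # the 30% guideline from the list above


def override_rate_by_month(reviews):
    """reviews: iterable of (month, overridden) pairs, e.g. ("2024-01", True)."""
    totals = defaultdict(int)
    overrides = defaultdict(int)
    for month, overridden in reviews:
        totals[month] += 1
        if overridden:
            overrides[month] += 1
    return {month: overrides[month] / totals[month] for month in sorted(totals)}


def months_needing_attention(rates):
    """Months in which SMEs overrode more than the threshold share of suggestions."""
    return [month for month, rate in rates.items() if rate > OVERRIDE_ALERT_THRESHOLD]


# Illustrative data only: 2 of 10 overridden in January, 4 of 10 in February.
reviews = (
    [("2024-01", False)] * 8 + [("2024-01", True)] * 2
    + [("2024-02", False)] * 6 + [("2024-02", True)] * 4
)
rates = override_rate_by_month(reviews)
flagged = months_needing_attention(rates)
```

In this made-up data, February exceeds the threshold and would prompt a knowledge base review. The acceptance rate is simply one minus the override rate.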

For teams building out AI-driven security questionnaire workflows, the realistic expectation is incremental improvement over months, not instant transformation on day one. Organizations that plan for this reality outperform those chasing overnight results. Distributed teams also benefit from improving their remote AI security practices, which helps keep knowledge base quality consistent.

A fresh perspective: What most experts overlook about AI in security questionnaires

Most buyers evaluate AI questionnaire tools by asking, "How smart is the AI?" That is the wrong question. The right question is, "How well does this tool fit into our actual review and approval workflow?"

The organizations that get the most value from AI questionnaire automation are not the ones with the most sophisticated models. They are the ones that transform compliance with AI by designing clear escalation paths, maintaining rigorous knowledge bases, and empowering SMEs with fast review tools rather than trying to remove humans from the process entirely.

Full automation is not the goal. Audit-ready, defensible, efficient responses are the goal. The AI's job is to handle the 70% of questions that are repetitive and well-documented, freeing your experts to focus on the 30% that require real judgment. Teams that understand this distinction consistently outperform those chasing full automation. Test real-world scenarios with your own questionnaire samples before you buy, not vendor-curated demo data.

Automate and secure your security questionnaire process with Skypher

If this article clarified the real demands of AI-powered questionnaire automation, the next step is finding a platform built to meet them.

Skypher's AI questionnaire automation platform is designed specifically for compliance-grade environments. It combines intelligent parsing, confidence scoring, role-based permissions, and full audit trails in a single workflow. The AI recommendation engine surfaces the most accurate, approved answers from your knowledge base instantly, with integrations across 40-plus TPRM platforms, Slack, MS Teams, Confluence, SharePoint, and more. Whether you are managing 10 questionnaires a month or 200, Skypher gives your team the controls and speed that compliance actually requires. Book a demo to see it in action.

Frequently asked questions

How reliable are AI-generated security questionnaire responses?

AI-generated responses can be highly efficient, but reliability depends on strong governance and regular SME review. Questionnaire accuracy and safety require consistent benchmarking and operational oversight to remain trustworthy.

What compliance standards should AI-powered questionnaire tools align with?

Tools should align with frameworks like NIST AI RMF and ISO 42001, applying the same rigor as other compliance-critical workflows. Governance for AI in compliance must match leading standards to be defensible in audits.

What are common risks with over-trusting AI questionnaire solutions?

Over-reliance can produce costly false positives and compliance failures if you do not regularly validate AI performance. Operational trade-offs and false-positive costs escalate quickly when metrics are not closely monitored.

How can my organization ensure AI is continuously improving its questionnaire responses?

Frequent knowledge-base updates, SME feedback loops, and tracking AI acceptance rates are the core practices. Continuous improvement requires thoughtful knowledge ownership and intentional process design, not just better algorithms.
